TCP/IP: Security: EAP, IPsec, TLS, DNSSEC, and DKIM

There are three primary properties of information, whether in a computer network or not, that may be desirable from an information security point of view: confidentiality, integrity, and availability (the CIA triad) [L01], summarized here:

- Confidentiality means that information is made known only to its intended users (which could include processing systems).
- Integrity means that information has not been modified in an unauthorized way before it is delivered.
- Availability means that information is available when needed.

These are core properties of information, yet there are other properties we may also desire, including authentication, nonrepudiation, and auditability.

- Authentication means that a particular identified party or principal is not impersonating another principal.
- Nonrepudiation means that if some action is performed by a principal (e.g., agreeing to the terms of a contract), this fact can be proven later (i.e., cannot successfully be denied).
- Auditability means that some sort of trustworthy log or accounting describing how information has been used is available.

Such logs are often important for forensic (i.e., legal and prosecutorial) purposes.

These principles are applicable to information in physical (e.g., printed) form, for which mechanisms such as safes, secured facilities, and guards have been used for thousands of years to enforce controlled sharing, storage, and dissemination. When information is to be moved through an unsecured environment, additional techniques are required.

- 1. Threats to Network Communication

- 2. Basic Cryptography and Security Mechanisms

- 2.1. Cryptosystems

- 2.2. Rivest, Shamir, and Adleman (RSA) Public Key Cryptography

- 2.3. Diffie-Hellman-Merkle Key Agreement (aka Diffie-Hellman or DH)

- 2.4. Signcryption and Elliptic Curve Cryptography (ECC)

- 2.5. Key Derivation and Perfect Forward Secrecy (PFS)

- 2.6. Pseudorandom Numbers, Generators, and Function Families

- 2.7. Nonces and Salt

- 2.8. Cryptographic Hash Functions and Message Digests

- 2.9. Message Authentication Codes (MACs, HMAC, CMAC, and GMAC)

- 2.10. Cryptographic Suites and Cipher Suites

- 3. Certificates, Certificate Authorities (CAs), and PKIs

- 4. TCP/IP Security Protocols and Layering

- 4.1. Network Access Control: 802.1X, 802.1AE, EAP, and PANA

- 4.2. Layer 3 IP Security (IPsec)

- 4.2.1. Internet Key Exchange (IKEv2) Protocol

- 4.2.1.1. IKEv2 Message Formats

- 4.2.1.2. The IKE_SA_INIT Exchange

- 4.2.1.3. Security Association (SA) Payloads and Proposals

- 4.2.1.4. Key Exchange (KE) and Nonce (Ni, Nr) Payloads

- 4.2.1.5. Notification (N) and Configuration (CP) Payloads

- 4.2.1.6. Algorithm Selection and Application

- 4.2.1.7. The IKE_AUTH Exchange

- 4.2.1.8. Traffic Selectors and TS Payloads

- 4.2.1.9. EAP and IKE

- 4.2.1.10. Better-than-Nothing Security (BTNS)

- 4.2.1.11. The CREATE_CHILD_SA Exchange

- 4.2.1.12. The INFORMATIONAL Exchange

- 4.2.1.13. Mobile IKE (MOBIKE)

- 4.2.2. Authentication Header (AH)

- 4.2.3. Encapsulating Security Payload (ESP)

- 4.2.4. Multicast

- 4.2.5. L2TP/IPsec

- 4.2.6. IPsec NAT Traversal

- 4.2.7. IPsec implementations

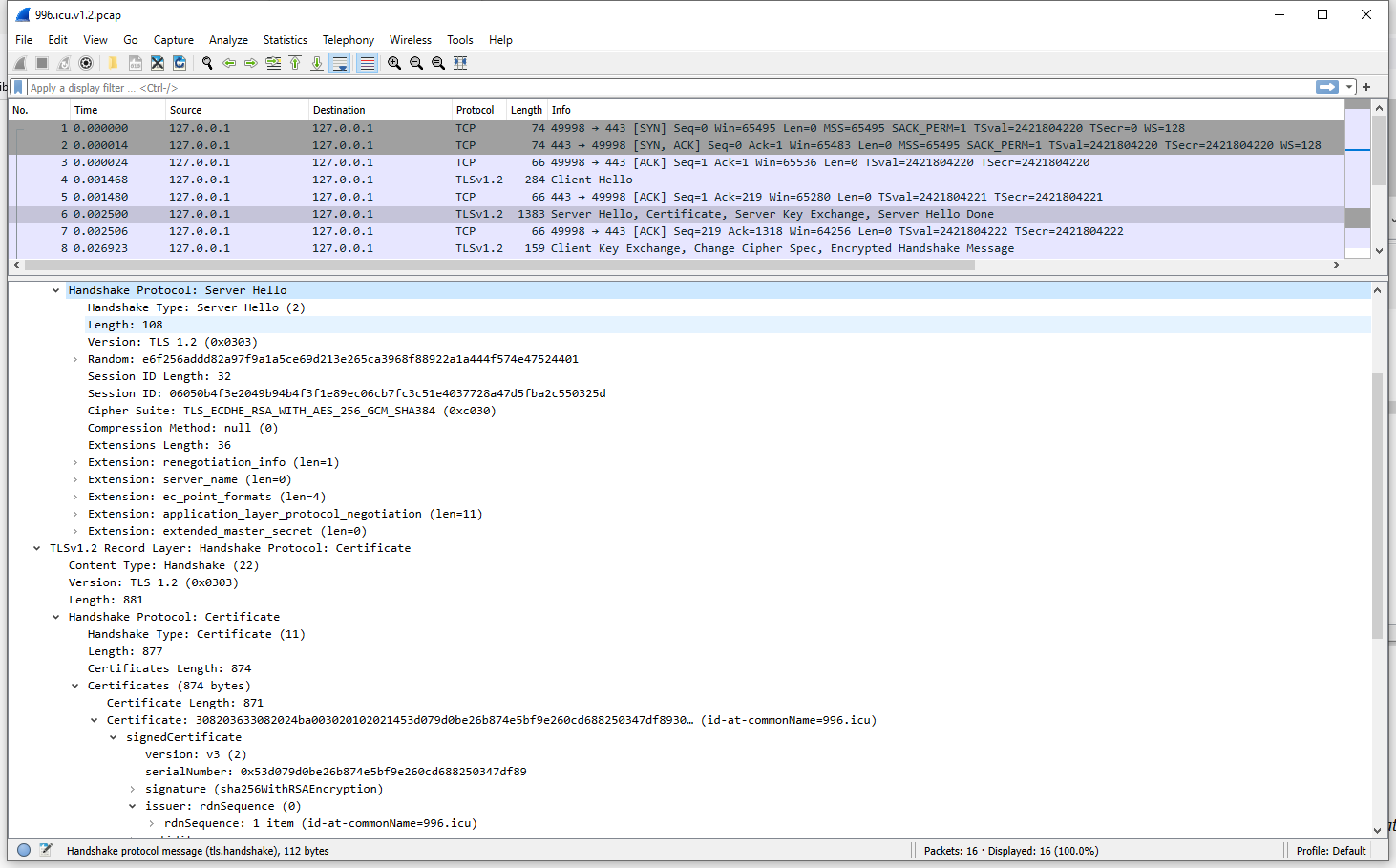

- 4.3. Transport Layer Security (TLS and DTLS)

- 5. DNS Security (DNSSEC)

- Appendix A: FAQs

- A.1. AES, AES-128, AES-192, and AES-256?

- A.2. AES and AES-GCM

- A.3. What’s the SALT and IV in AES?

- A.4. OpenSSL: Cryptography and SSL/TLS Toolkit

- A.5. How to generate an encrypted key for AES using OpenSSL?

- A.6. How to encrypt a message with AES?

- A.7. How to determine if AES cannot decrypt a ciphertext?

- A.8. How to encrypt a message with AES using a specific salt and an IV?

- A.9. EC, ECC, ECDH and ECDSA?

- A.10. RSA, DH, and ECDH

- A.11. Digital signatures and public key encryption/decryption

- A.12. Is it possible to encrypt a message with a private key and decrypt it with the corresponding public key, or vice versa?

- A.13. It seems that the text length of private key is larger than public key in PEM?

- A.14. How does OpenSSL determine the algorithm (RSA or ECC)?

- A.15. How to use OpenSSL to show the ASN.1 OID in a key file?

- A.16. How to derive a shared key using key exchange algorithm?

- A.17. Can I derive a shared key using two different cipher algorithm keys?

- A.18. Can I derive a shared key with RSA?

- A.19. How to compute the digest and sign it from a CSR separately?

- A.20. How to create, issue and verify a certificate?

- Appendix B: FAQs: IPsec

- B.1. IKEv2 (Internet Key Exchange version 2)

- B.2. Transport mode and tunnel mode in IPsec

- B.3. Tunnel mode in IPsec as an overlay network

- B.4. AH (Authentication Header) and ESP (Encapsulating Security Payload) headers

- B.5. The scenarios where using IPsec without confidentiality (i.e., without encryption)

- B.6. IPsec (Internet Protocol Security) and TLS (Transport Layer Security)

- B.7. How to identify the type of an IP datagram, that contains normal IPsec data, an IKEv2 message, or something else?

- B.8. What are IKEv2 and OpenVPN?

- B.9. What’re P2S (Point-to-Site) and S2S (Site-to-Site)?

- Appendix C: Extended Validation Certificate

- References

1. Threats to Network Communication

Attacks can generally be categorized as either passive or active.

- Passive attacks are mounted by monitoring or eavesdropping on the contents of network traffic, and if not handled they can lead to unauthorized release of information (loss of confidentiality).
- Active attacks can cause modification of information (with possible loss of integrity) or denial of service (loss of availability).

Logically, such attacks are carried out by an "intruder" or adversary.

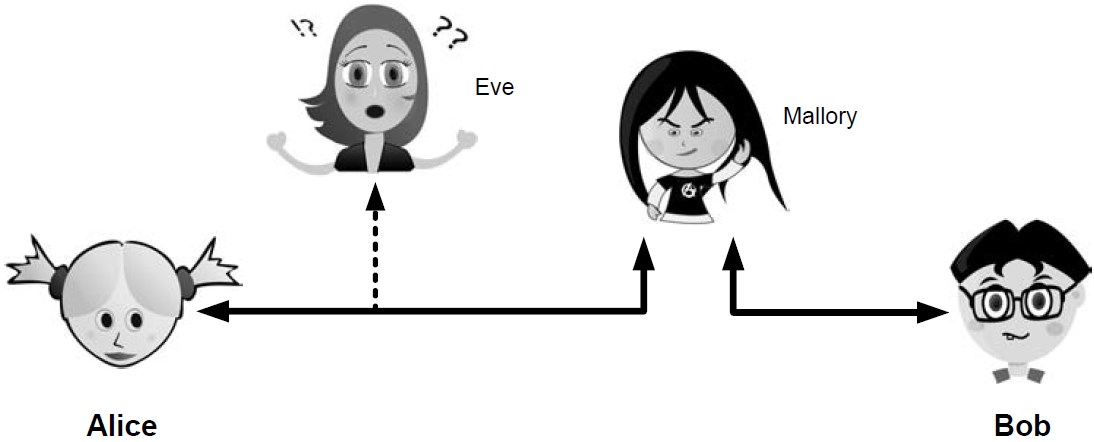

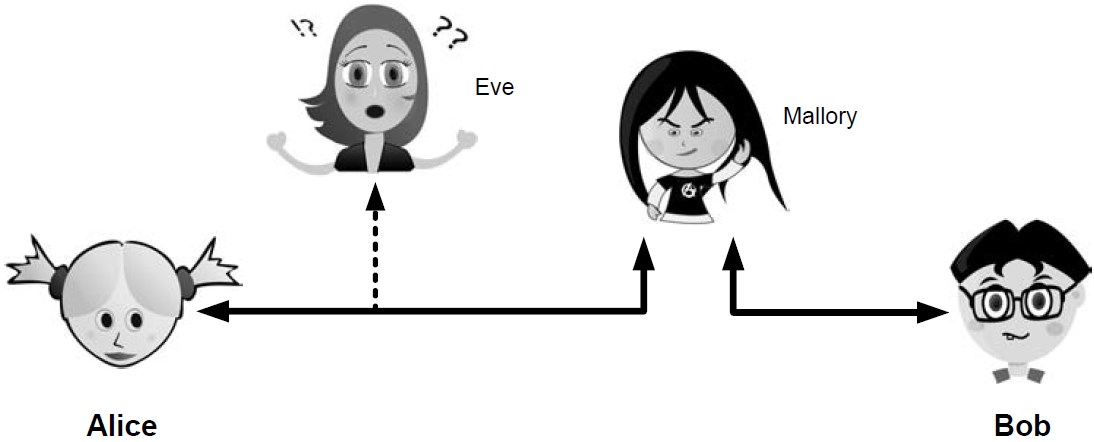

Eve is able to eavesdrop (listen in on, also called capture or sniff) and perform traffic analysis on the traffic passing between Alice and Bob.

- Capturing the traffic could lead to compromise of confidentiality, as sensitive data may be available to Eve without Alice or Bob knowing.

In addition, traffic analysis can determine the features of the traffic, such as its size and when it is sent, and possibly identify the parties to a communication. This information, although it does not reveal the exact contents of the communication, could also lead to disclosure of sensitive information and could be used to mount more powerful active attacks in the future.

While the passive attacks are essentially impossible for Alice or Bob to detect, Mallory is capable of performing more easily noticed active attacks. These include message stream modification (MSM), denial-of-service (DoS), and spurious association attacks.

- MSM attacks (including so-called man-in-the-middle, or MITM, attacks) are a broad category and include any way traffic is modified in transit, including deletion, reordering, and content modification.
- DoS might include deletion of traffic, or generation of traffic in volumes large enough to overwhelm Alice, Bob, or the communication channel connecting them.
- Spurious associations include masquerading (Mallory pretends to be Bob or Alice) and replay, whereby Alice's or Bob's earlier (authentic) communications are replayed later from Mallory's memory.

| Passive type | Threats | Active type | Threats |
|---|---|---|---|
| Eavesdropping | Confidentiality | Message stream modification | Authenticity, integrity |
| Traffic analysis | Confidentiality | Denial of service (DoS) | Availability |
| | | Spurious association | Authenticity |

With effective and careful use of cryptography, passive attacks are rendered ineffective, and active attacks are made detectable (and to some degree preventable).

2. Basic Cryptography and Security Mechanisms

Cryptography evolved from the desire to protect the confidentiality, integrity, and authenticity of information carried through unsecured communication channels.

The use of cryptography in primitive forms dates back to at least 3500 BCE. The earliest systems were usually codes.

Codes involve substitutions of groups of words, phrases, or sentences with groups of numbers or letters as given in a codebook. Codebooks needed to be kept secret in order to keep communications private, so distributing them required considerable care.

More advanced systems used ciphers, which apply both substitution and rearrangement (transposition) of symbols.

2.1. Cryptosystems

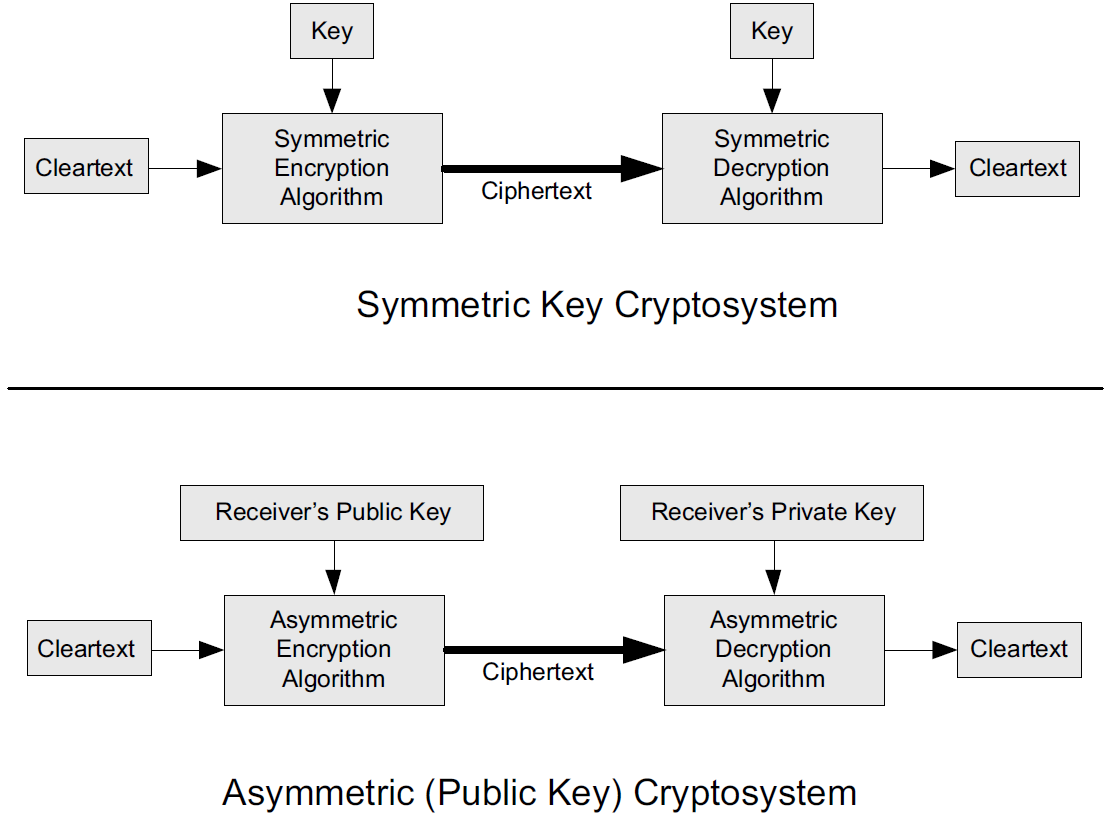

- In each case, a cleartext message is processed by an encryption algorithm to produce ciphertext (scrambled text).
- The key is a particular sequence of bits used to drive the encryption algorithm or cipher.
- With different keys, the same input produces different outputs. Combining the algorithms with supporting protocols and operating methods forms a cryptosystem.
- In a symmetric cryptosystem, the encryption and decryption keys are typically identical, as are the encryption and decryption algorithms.
- In an asymmetric cryptosystem, each principal is generally provided with a pair of keys consisting of one public and one private key.

The public key is intended to be known to any party that might want to send a message to the key pair’s owner.

The public and private keys are mathematically related and are themselves outputs of a key generation algorithm.

RSA is based on the mathematical properties of large prime numbers and their modular arithmetic, while ECC relies on the algebraic structure of elliptic curves over finite fields. As a result, the key pairs generated for each algorithm are incompatible with each other.

Without knowing the symmetric key (in a symmetric cryptosystem) or the private key (in a public key cryptosystem), it is (believed to be) effectively impossible for any third party that intercepts the ciphertext to produce the corresponding cleartext. This provides the basis for confidentiality.

For the symmetric key cryptosystem, it also provides a degree of authentication, because only a party holding the key is able to produce a useful ciphertext that can be decrypted to something sensible.

- A receiver can decrypt the ciphertext, look for a portion of the resulting cleartext to contain a particular agreed-upon value, and conclude that the sender holds the appropriate key and is therefore authentic.
- Furthermore, most encryption algorithms work in such a way that if messages are modified in transit, they are unable to produce useful cleartext upon decryption.

Thus, symmetric cryptosystems provide a measure of both authentication and integrity protection for messages, but this approach alone is weak. Instead, special forms of checksums are usually coupled with symmetric cryptography to ensure integrity.

A symmetric encryption algorithm is usually classified as either a block cipher or a stream cipher.

- Block ciphers operate on a fixed number of bits (e.g., 64 or 128) at a time.
- Stream ciphers operate continuously on however many bits (or bytes) are provided as input.

For years, the most popular symmetric encryption algorithm was the Data Encryption Standard (DES), a block cipher that uses 64-bit blocks and 56-bit keys.

Eventually, the use of 56-bit keys was felt to be insecure, and many applications turned to triple-DES (also denoted 3DES or TDES—applying DES three times with two or three different keys to each block of data).

Today, DES and 3DES have been largely phased out in favor of the Advanced Encryption Standard (AES), also known occasionally by its original name the Rijndael algorithm (pronounced “rain-dahl”), in deference to its Belgian cryptographer inventors Vincent Rijmen and Joan Daemen.

Different variants of AES provide key lengths of 128, 192, and 256 bits and are usually written with the corresponding extension (i.e., AES-128, AES-192, and AES-256).

Symmetric-key algorithm: From Wikipedia, the free encyclopedia

Examples of popular symmetric-key algorithms include Twofish, Serpent, AES (Rijndael), Camellia, Salsa20, ChaCha20, Blowfish, CAST5, Kuznyechik, RC4, DES, 3DES, Skipjack, Safer, and IDEA.

When used with asymmetric ciphers for key transfer, pseudorandom key generators are nearly always used to generate the symmetric cipher session keys.

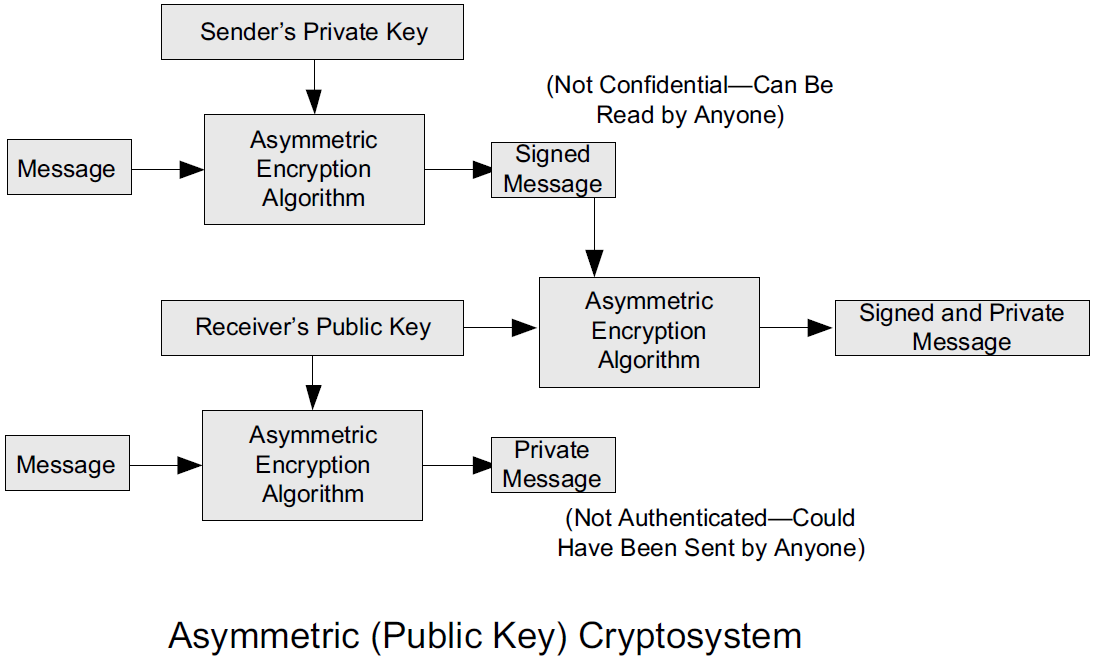

Asymmetric cryptosystems have some additional interesting properties beyond those of symmetric key cryptosystems.

- Assuming we have Alice as sender and Bob as intended recipient, any third party is assumed to know Bob's public key and can therefore send him a secret message; only Bob is able to decrypt it because only Bob knows the private key corresponding to his public key.
- However, Bob has no real assurance that the message is authentic, because any party can create a message and send it to Bob, encrypted in Bob's public key.
- Fortunately, public key cryptosystems also provide another function when used in reverse: authentication of the sender.
- In this case, Alice can encrypt a message using her private key and send it to Bob (or anyone else).
- Using Alice's public key (known to all), anyone can verify that the message was authored by Alice and has not been modified.
- However, it is not confidential because everyone has access to Alice's public key.
- To achieve authenticity, integrity, and confidentiality, Alice can encrypt a message using her private key and encrypt the result using Bob's public key.
- The result is a message that is reliably authored by Alice and is also confidential to Bob.

Figure 3. The asymmetric cryptosystem can be used for confidentiality (encryption), authentication (digital signatures or signing), or both. When used for both, it produces a signed output that is confidential to the sender and the receiver. Public keys, as their name suggests, are not kept secret.

When public key cryptography is used in "reverse" like this, it provides a digital signature.

- Digital signatures are important consequences of public key cryptography and can be used to help ensure authenticity and nonrepudiation.
- Only a party possessing Alice's private key is able to author messages or carry out transactions as Alice.

In a hybrid cryptosystem, elements of both public key and symmetric key cryptography are used.

- Most often, public key operations are used to exchange a randomly generated confidential (symmetric) session key, which is used to encrypt traffic for a single transaction using a symmetric algorithm.
- The reason for doing so is performance: symmetric key operations are less computationally intensive than public key operations.
- Most systems today are of the hybrid type: public key cryptography is used to establish keys used for symmetric encryption of individual sessions.

Public-key cryptography: From Wikipedia, the free encyclopedia

Public-key cryptography, or asymmetric cryptography, is the field of cryptographic systems that use pairs of related keys. Each key pair consists of a public key and a corresponding private key which are generated with cryptographic algorithms based on mathematical problems termed one-way functions.

Figure 4. An unpredictable (typically large and random) number is used to begin generation of an acceptable pair of keys suitable for use by an asymmetric key algorithm.

In a public-key encryption system, anyone with a public key can encrypt a message, yielding a ciphertext, but only those who know the corresponding private key can decrypt the ciphertext to obtain the original message.

Figure 5. In an asymmetric key encryption scheme, anyone can encrypt messages using a public key, but only the holder of the paired private key can decrypt such a message. The security of the system depends on the secrecy of the private key, which must not become known to any other.

In a digital signature system, a sender can use a private key together with a message to create a signature. Anyone with the corresponding public key can verify whether the signature matches the message, but a forger who does not know the private key cannot find any message/signature pair that will pass verification with the public key.

Figure 6. In this example the message is digitally signed with Alice's private key, but the message itself is not encrypted. 1) Alice signs a message with her private key. 2) Using Alice's public key, Bob can verify that Alice sent the message and that the message has not been modified.

Figure 7. In the Diffie–Hellman key exchange scheme, each party generates a public/private key pair and distributes the public key of the pair. After obtaining an authentic (n.b., this is critical) copy of each other's public keys, Alice and Bob can compute a shared secret offline. The shared secret can be used, for instance, as the key for a symmetric cipher which will be, in essentially all cases, much faster.

Examples of well-regarded asymmetric key techniques for varied purposes include:

- Diffie–Hellman key exchange protocol
- DSS (Digital Signature Standard), which incorporates the Digital Signature Algorithm
- ElGamal
- Elliptic-curve cryptography
- Elliptic Curve Digital Signature Algorithm (ECDSA)
- Elliptic-curve Diffie–Hellman (ECDH)
- Ed25519 and Ed448 (EdDSA)
- X25519 and X448 (ECDH/EdDH)
- Various password-authenticated key agreement techniques
- Paillier cryptosystem
- RSA encryption algorithm (PKCS#1)
- Cramer–Shoup cryptosystem
- YAK authenticated key agreement protocol

2.2. Rivest, Shamir, and Adleman (RSA) Public Key Cryptography

The most common approach used for both digital signatures and confidentiality is called RSA in deference to its authors' names, Rivest, Shamir, and Adleman. The security of this system hinges on the difficulty of factoring large numbers into constituent primes.
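To make the link between key pairs and factoring concrete, here is a minimal textbook-RSA sketch using deliberately tiny primes (61 and 53, a common classroom choice). It is a toy under stated assumptions: real RSA uses moduli of 2048 bits or more together with padding schemes such as OAEP (encryption) and PSS (signatures), none of which appear here. The helper names are hypothetical.

```python
# Textbook RSA with toy parameters: insecure, for illustration only.
# Real deployments use >= 2048-bit moduli plus OAEP/PSS padding (not shown).

def make_keypair(p: int, q: int, e: int = 17):
    """Build an RSA key pair from two (hypothetical, tiny) primes p and q."""
    n = p * q                  # public modulus; security rests on factoring n being hard
    phi = (p - 1) * (q - 1)    # Euler's totient of n
    d = pow(e, -1, phi)        # private exponent: e * d = 1 (mod phi); needs Python 3.8+
    return (n, e), (n, d)

def encrypt(public_key, m: int) -> int:
    n, e = public_key
    return pow(m, e, n)        # c = m^e mod n

def decrypt(private_key, c: int) -> int:
    n, d = private_key
    return pow(c, d, n)        # m = c^d mod n

def sign(private_key, m: int) -> int:
    # The "reverse" use: only the private-key holder can compute s = m^d mod n.
    n, d = private_key
    return pow(m, d, n)

def verify(public_key, m: int, s: int) -> bool:
    n, e = public_key
    return pow(s, e, n) == m   # anyone with the public key can check s^e mod n

public, private = make_keypair(61, 53)
message = 65                   # must be an integer smaller than n = 3233
ciphertext = encrypt(public, message)
recovered = decrypt(private, ciphertext)
signature = sign(private, message)
```

In textbook RSA, signing and decryption are the same modular exponentiation with the private exponent, which mirrors the "reverse" use of the key pair described earlier. Breaking this toy only requires factoring 3233.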

2.3. Diffie-Hellman-Merkle Key Agreement (aka Diffie-Hellman or DH)

The Diffie-Hellman-Merkle Key Agreement protocol (more commonly called simply Diffie-Hellman or DH) provides a method to have two parties agree on a common set of secret bits that can be used as a symmetric key, based on the use of finite field arithmetic.

DH techniques are used in many of the Internet-related security protocols [RFC2631] and are closely related to the RSA approach for public key cryptography.
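The agreement itself fits in a few lines. Below is a minimal sketch with a toy prime (23) and generator (5). Both values are assumptions chosen so the arithmetic is easy to follow. Real deployments use standardized groups of 2048 bits or more, such as the FFDHE groups in RFC 7919.

```python
# Toy finite-field Diffie-Hellman with tiny, insecure parameters (illustration only).
import secrets

P = 23   # public prime modulus (hypothetical toy value)
G = 5    # public generator of the multiplicative group mod P

def make_keypair():
    private = secrets.randbelow(P - 2) + 1   # secret exponent in [1, P-2]
    public = pow(G, private, P)              # sent in the clear: G^private mod P
    return private, public

a_priv, a_pub = make_keypair()   # Alice
b_priv, b_pub = make_keypair()   # Bob

# Each side raises the other's public value to its own secret exponent.
alice_secret = pow(b_pub, a_priv, P)   # (G^b)^a mod P
bob_secret = pow(a_pub, b_priv, P)     # (G^a)^b mod P
```

Both parties arrive at G^(ab) mod P without ever transmitting a or b. This unauthenticated form is open to a man-in-the-middle. That is why Figure 7 stresses obtaining authentic copies of the public values.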

2.4. Signcryption and Elliptic Curve Cryptography (ECC)

When using RSA, additional security is provided with larger numbers. However, the basic mathematical operations required by RSA (e.g., modular exponentiation) can be computationally intensive, and their cost grows as the numbers grow. To reduce the effort of combining digital signatures with encryption for confidentiality, a class of signcryption schemes (also called authenticated encryption) provides both features at a cost lower than the sum of the two computed separately. However, even greater efficiency can sometimes be achieved by changing the mathematical basis for public key cryptography.

In a continuing search for security with greater efficiency and performance, researchers have explored other public key cryptosystems beyond RSA. An alternative based on the difficulty of finding the discrete logarithm of an elliptic curve element has emerged, known as elliptic curve cryptography (ECC, not to be confused with error-correcting code).

For equivalent security, ECC offers the benefit of using keys that are considerably smaller than those of RSA (e.g., by about a factor of 6 for a 1024-bit RSA modulus). This leads to simpler and faster implementations, issues of considerable practical concern.
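The elliptic-curve analogue of the DH exchange replaces modular exponentiation with repeated point addition. The sketch below uses a small textbook curve, y² = x³ + 2x + 2 over GF(17), with base point (5, 1). These curve parameters, and the assumption that the point generates a group of order 19, are illustrative choices only. Real systems use curves such as P-256 or X25519.

```python
# Toy elliptic-curve Diffie-Hellman (ECDH) over a tiny prime field: insecure, illustration only.
import secrets

P, A, B = 17, 2, 2     # curve y^2 = x^3 + A*x + B over GF(P) (hypothetical textbook values)
G = (5, 1)             # base point
INFINITY = None        # point at infinity, the group identity

def on_curve(pt) -> bool:
    if pt is INFINITY:
        return True
    x, y = pt
    return (y * y - (x * x * x + A * x + B)) % P == 0

def add(p1, p2):
    """Add two curve points with the chord-and-tangent rule."""
    if p1 is INFINITY:
        return p2
    if p2 is INFINITY:
        return p1
    x1, y1 = p1
    x2, y2 = p2
    if x1 == x2 and (y1 + y2) % P == 0:
        return INFINITY                                      # Q + (-Q) = identity
    if p1 == p2:
        slope = (3 * x1 * x1 + A) * pow(2 * y1, -1, P) % P   # tangent (point doubling)
    else:
        slope = (y2 - y1) * pow(x2 - x1, -1, P) % P          # chord through two points
    x3 = (slope * slope - x1 - x2) % P
    y3 = (slope * (x1 - x3) - y1) % P
    return (x3, y3)

def scalar_mult(k: int, pt):
    """Compute k * pt by double-and-add."""
    result, addend = INFINITY, pt
    while k > 0:
        if k & 1:
            result = add(result, addend)
        addend = add(addend, addend)
        k >>= 1
    return result

# Private scalars drawn from 1..18, assuming the textbook group order of 19.
a_priv = secrets.randbelow(18) + 1
b_priv = secrets.randbelow(18) + 1
a_pub = scalar_mult(a_priv, G)
b_pub = scalar_mult(b_priv, G)

# Both sides compute (a_priv * b_priv) * G.
alice_shared = scalar_mult(a_priv, b_pub)
bob_shared = scalar_mult(b_priv, a_pub)
```

The security argument rests on the elliptic-curve discrete logarithm problem: recovering `a_priv` from `a_pub` is easy here only because the group is tiny.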

ECC has been standardized for use in many of the applications where RSA still retains dominance, but adoption has remained somewhat sluggish because of patents on ECC technology held by the Certicom Corporation. (The RSA algorithm was also patented, but patent protection lapsed in the year 2000.)

2.5. Key Derivation and Perfect Forward Secrecy (PFS)

In communication scenarios where multiple messages are to be exchanged, it is common to establish a short-term session key to perform symmetric encryption.

The session key is ordinarily a pseudorandom value produced by a function called a key derivation function (KDF), based on some input such as a master key or a previous session key. If a session key is compromised, any of the data encrypted with that key is subject to compromise. To limit this exposure, it is common practice to change keys (rekey) multiple times during an extended communication session.
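The text does not name a particular KDF. As one widely used example, the sketch below implements HKDF (RFC 5869) on top of HMAC-SHA256. HKDF first "extracts" a pseudorandom key from the input keying material and then "expands" it into as many bytes as needed, bound to a context label. The salt, labels, and key lengths in the usage lines are hypothetical.

```python
# HKDF (RFC 5869) sketch: extract-then-expand key derivation built on HMAC.
import hashlib
import hmac

def hkdf_extract(salt: bytes, ikm: bytes, hash_name: str = "sha256") -> bytes:
    """Concentrate input keying material (e.g., a master key) into a pseudorandom key."""
    if not salt:
        salt = bytes(hashlib.new(hash_name).digest_size)   # RFC 5869 default: HashLen zero bytes
    return hmac.new(salt, ikm, hash_name).digest()

def hkdf_expand(prk: bytes, info: bytes, length: int, hash_name: str = "sha256") -> bytes:
    """Stretch the pseudorandom key into `length` bytes tied to the context `info`."""
    hash_len = hashlib.new(hash_name).digest_size
    if length > 255 * hash_len:
        raise ValueError("requested length too large for HKDF")
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        # T(i) = HMAC(PRK, T(i-1) || info || i)
        block = hmac.new(prk, block + info + bytes([counter]), hash_name).digest()
        okm += block
        counter += 1
    return okm[:length]

# Hypothetical usage: derive independent session keys from one master secret.
master_secret = b"\x0b" * 22
prk = hkdf_extract(b"per-session-salt", master_secret)
enc_key = hkdf_expand(prk, b"encryption key", 16)
mac_key = hkdf_expand(prk, b"integrity key", 32)
```

Changing the `info` label yields unrelated keys from the same master secret. Rekeying then amounts to deriving fresh output with a new salt or label.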

A scheme in which the compromise of one key (even a long-term key) does not expose the session keys protecting other communications, in particular past ones, is said to have perfect forward secrecy (PFS). Usually, schemes that provide PFS require additional key exchanges or verifications that introduce overhead. One example is the Station-to-Station (STS) protocol, an authenticated variant of DH.

2.6. Pseudorandom Numbers, Generators, and Function Families

In cryptography, random numbers are often used as initial input values to cryptographic functions, or for generating keys that are difficult to guess. Given that computers are not very random by nature, obtaining true random numbers is somewhat difficult. The numbers used in most computers for simulating randomness are called pseudorandom numbers. Such numbers are not usually truly random but instead exhibit a number of statistical properties that suggest that they are (e.g., when many of them are generated, they tend to be uniformly distributed across some range). Pseudorandom numbers are produced by an algorithm or device known as a pseudorandom number generator (PRNG) or pseudorandom generator (PRG), depending on the author.

Simple PRNGs are deterministic: they have a small amount of internal state initialized by a seed value, and once that internal state is known, the entire sequence of pseudorandom numbers can be determined.

For example, the common Linear Congruential Generator (LCG) algorithm produces random-appearing values that are entirely predictable if the input parameters are known or guessed. Consequently, LCGs are perfectly fine for use in certain programs (e.g., games that simulate random events) but insufficient for cryptographic purposes.
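The predictability is easy to demonstrate. In the sketch below, each LCG output is the generator's entire internal state, so one observed value plus the public constants gives away every later value. The constants are the widely published "Numerical Recipes" parameters, chosen here as an assumption. Any LCG behaves the same way once a, c, and m are known.

```python
# Why an LCG is unsuitable for cryptography: its output *is* its internal state.
A, C, M = 1664525, 1013904223, 2**32   # "Numerical Recipes" LCG constants

def lcg(seed: int):
    state = seed
    while True:
        state = (A * state + C) % M    # next state depends only on the previous one
        yield state

gen = lcg(seed=123456789)   # hypothetical "secret" seed
observed = next(gen)         # an attacker observes a single output...

predicted = [(A * observed + C) % M]   # ...and computes what comes next
for _ in range(4):
    predicted.append((A * predicted[-1] + C) % M)

actual = [next(gen) for _ in range(5)]
```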

A pseudorandom function family (PRF) is a family of functions that appear to be algorithmically indistinguishable (by polynomial time algorithms) from truly random functions. A PRF is a stronger concept than a PRG, as a PRG can be created from a PRF.

PRFs are the basis for cryptographically strong (or secure) pseudorandom number generators, called CSPRNGs. CSPRNGs are necessary in cryptographic applications for several purposes, including session key generation, for which a sufficient amount of randomness must be guaranteed [RFC4086].
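In practice, applications rarely build a CSPRNG themselves. They draw from the one provided by the operating system. As one concrete illustration, Python's `secrets` module exposes the OS CSPRNG, while its `random` module (a Mersenne Twister) is documented as unsuitable for security purposes. The variable names below are illustrative.

```python
# Drawing key material, nonces, and tokens from the OS-backed CSPRNG.
import secrets

session_key = secrets.token_bytes(32)   # 256 bits of key material
nonce_hex = secrets.token_hex(12)       # 12 random bytes rendered as 24 hex characters
token = secrets.token_urlsafe(16)       # URL-safe text, e.g., for a one-time challenge
```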

2.7. Nonces and Salt

A cryptographic nonce is a number that is used once (or for one transaction) in a cryptographic protocol. Most commonly, a nonce is a random or pseudorandom number that is used in authentication protocols to ensure freshness. Freshness is the (desirable) property that a message or operation has taken place in the very recent past.

For example, in a challenge-response protocol, a server may provide a requesting client with a nonce, and the client may need to respond with authentication material as well as a copy of the nonce (or perhaps an encrypted copy of the nonce) within a certain period of time. This helps to avoid replay attacks, because old authentication exchanges that are replayed to the server would not contain the correct nonce value.
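A minimal sketch of such a challenge-response exchange follows. It is a hypothetical protocol, not a standardized one. The client proves knowledge of a pre-shared key by returning HMAC(key, nonce). The server accepts each nonce at most once and only within a time window, so a recorded exchange cannot be replayed. The 30-second window and class names are assumptions.

```python
# Nonce-based challenge-response sketch (hypothetical protocol).
import hashlib
import hmac
import secrets
import time

SHARED_KEY = secrets.token_bytes(32)   # assumed to be pre-shared by client and server
WINDOW_SECONDS = 30                    # hypothetical freshness window

class Server:
    def __init__(self, key: bytes):
        self.key = key
        self.outstanding = {}          # nonce -> time it was issued

    def challenge(self) -> bytes:
        nonce = secrets.token_bytes(16)
        self.outstanding[nonce] = time.monotonic()
        return nonce

    def verify(self, nonce: bytes, response: bytes) -> bool:
        issued = self.outstanding.pop(nonce, None)   # pop: each nonce is usable only once
        if issued is None or time.monotonic() - issued > WINDOW_SECONDS:
            return False                             # unknown, reused, or stale nonce
        expected = hmac.new(self.key, nonce, hashlib.sha256).digest()
        return hmac.compare_digest(expected, response)   # constant-time comparison

def client_response(key: bytes, nonce: bytes) -> bytes:
    return hmac.new(key, nonce, hashlib.sha256).digest()

server = Server(SHARED_KEY)
n = server.challenge()
r = client_response(SHARED_KEY, n)
first = server.verify(n, r)    # fresh nonce, correct response
replay = server.verify(n, r)   # same exchange replayed: rejected
```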

A salt or salt value, used in the cryptographic context, is a random or pseudorandom number used to frustrate brute-force attacks on secrets. Brute-force attacks usually involve repeatedly guessing a password, passphrase, key, or equivalent secret value and checking to see if the guess was correct. Salts work by frustrating the checking portion of a brute-force attack.

The best-known example is the way passwords used to be handled in the UNIX system. Users' passwords were encrypted and stored in a password file that all users could read. When logging in, each user would provide a password that was used as a DES key to repeatedly encrypt a fixed value (25 times in the classic crypt(3) implementation). The result was then compared against the user's entry in the password file. A match indicated that a correct password was provided.

At the time, the encryption method (DES) was well known, and there was concern that a hardware-based dictionary attack would be possible, whereby many words from a dictionary were encrypted with DES ahead of time (forming a precomputed lookup table) and compared against the password file. A pseudorandom 12-bit salt was added to perturb the DES algorithm in one of 4096 (nonstandard) ways for each password in an effort to thwart this attack. Ultimately, the 12-bit salt was determined to be insufficient as computers improved (and could test more guesses), and it was expanded.
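Modern systems apply the same idea with purpose-built password hashing rather than DES. The sketch below substitutes PBKDF2-HMAC-SHA256 for the historical crypt(3) scheme. It shows that a fresh random salt makes two identical passwords produce different stored values, so a single precomputed table no longer covers every user. The iteration count and function names are assumptions.

```python
# Salted password storage sketch using PBKDF2-HMAC-SHA256 (a modern stand-in for crypt(3)).
import hashlib
import hmac
import secrets
from typing import Optional

ITERATIONS = 100_000   # illustrative work factor; tune for the deployment

def hash_password(password: str, salt: Optional[bytes] = None):
    if salt is None:
        salt = secrets.token_bytes(16)   # fresh random salt per password
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return salt, digest                  # both are stored; the salt is not secret

def check_password(password: str, salt: bytes, stored: bytes) -> bool:
    _, candidate = hash_password(password, salt)
    return hmac.compare_digest(candidate, stored)

# Two users who chose the same password end up with different stored digests.
salt1, h1 = hash_password("correct horse")
salt2, h2 = hash_password("correct horse")
```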

However, there are limitations in the protections that a salt can provide. If the attacker is hitting an online service with a credential stuffing attack, a subset of the brute force attack category, salts won't help at all because the legitimate server is doing the salting+hashing for you. [auth0-salt-hasing]

2.8. Cryptographic Hash Functions and Message Digests

In most of the protocols, including Ethernet, IP, ICMP, UDP, and TCP, we have seen the use of a frame check sequence (FCS, either a checksum or a CRC) to determine whether a PDU has likely been delivered without bit errors. When considering security, ordinary FCS functions are not sufficient for this purpose.

A checksum or FCS can be used to verify message integrity if properly constructed using special functions, which are called cryptographic hash functions.

- The output of a cryptographic hash function H, when provided a message M, is called the digest or fingerprint of the message, H(M).
- A message digest is a type of strong FCS that is easy to compute and has the following important properties:
  - Preimage resistance: Given H(M), it should be difficult to determine M if not already known.
  - Second preimage resistance: Given M1, it should be difficult to determine an M2 ≠ M1 such that H(M1) = H(M2).
  - Collision resistance: It should be difficult to find any pair M1, M2 where H(M1) = H(M2) when M2 ≠ M1.

If a hash function has all of these properties, then if two messages have the same cryptographic hash value, they are, with negligible doubt, the same message.
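Collision resistance depends heavily on digest length. By the birthday bound, random search finds a collision in an n-bit hash after roughly 2^(n/2) attempts. The sketch below artificially truncates SHA-256 to 24 bits, an illustrative weakening and not a real hash, and typically finds two different messages with the same truncated digest after a few thousand attempts rather than 2^24.

```python
# Birthday-bound demonstration: collisions in a *truncated* hash are cheap to find.
import hashlib
from itertools import count

BITS = 24   # artificial digest length, for illustration only

def tiny_hash(message: bytes) -> int:
    full = int.from_bytes(hashlib.sha256(message).digest(), "big")
    return full >> (256 - BITS)        # keep only the top BITS bits

def find_collision():
    seen = {}                          # truncated digest -> message that produced it
    for i in count():
        msg = f"message-{i}".encode()  # every candidate message is distinct
        h = tiny_hash(msg)
        if h in seen:
            return seen[h], msg, i + 1 # two different messages, same digest
        seen[h] = msg

m1, m2, tries = find_collision()
```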

Historically, the two most common cryptographic hash algorithms have been the Message Digest Algorithm 5 (MD5, [RFC1321]), which produces a 128-bit (16-byte) digest, and the Secure Hash Algorithm 1 (SHA-1), which produces a 160-bit (20-byte) digest. Practical collision attacks are now known against both, so neither should be relied on where collision resistance matters.

More recently, a family of functions called SHA-2 [RFC6234] produces digests with lengths of 224, 256, 384, or 512 bits (28, 32, 48, and 64 bytes, respectively), and the SHA-3 family (FIPS 202) has since been standardized as well.
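The digest lengths above can be checked directly with Python's `hashlib`, used here only as a convenient illustration. The sample message is arbitrary. The last two lines show that a one-character change in the input produces an unrelated-looking digest.

```python
import hashlib

message = b"The quick brown fox jumps over the lazy dog"
names = ("md5", "sha1", "sha224", "sha256", "sha384", "sha512", "sha3_256")

# Digest length in bits for each algorithm; hashlib.new() selects the algorithm by name.
sizes = {name: hashlib.new(name, message).digest_size * 8 for name in names}
for name, bits in sizes.items():
    print(f"{name:9}{bits:4} bits  {hashlib.new(name, message).hexdigest()[:16]}...")

# A one-character change in the input yields an unrelated-looking digest.
d1 = hashlib.sha256(b"message").hexdigest()
d2 = hashlib.sha256(b"messagf").hexdigest()
```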

Cryptographic hash functions are often based on a compression function f, which takes an input of length L and produces a collision-resistant but deterministic output of size less than L. The Merkle-Damgård construction, which essentially breaks an arbitrarily long input into blocks of length L, pads them, passes them to f, and combines the results, produces a cryptographic hash function capable of taking a long input and producing an output with collision resistance.
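The chaining structure can be sketched in a few lines. In the toy below, the compression function is a stand-in built from truncated SHA-256, an assumption made for brevity. Real designs such as SHA-256 use their own dedicated compression function internally. The padding appends a 0x80 byte, zeros, and the message length, a form of Merkle-Damgård strengthening.

```python
# Toy Merkle-Damgard construction (illustration only, not a secure hash).
import hashlib

BLOCK = 16           # bytes per message block
IV = bytes(16)       # fixed initial chaining value

def f(chain: bytes, block: bytes) -> bytes:
    """Compression function: 32 bytes in (state || block), 16 bytes out."""
    return hashlib.sha256(chain + block).digest()[:16]

def md_pad(message: bytes) -> bytes:
    """Append 0x80, zero bytes, then the 8-byte message bit length."""
    bit_len = (8 * len(message)).to_bytes(8, "big")
    padded = message + b"\x80"
    padded += b"\x00" * ((-len(padded) - 8) % BLOCK)
    return padded + bit_len

def md_hash(message: bytes) -> bytes:
    chain = IV
    padded = md_pad(message)
    for i in range(0, len(padded), BLOCK):
        chain = f(chain, padded[i:i + BLOCK])   # feed each block through f in turn
    return chain
```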

2.9. Message Authentication Codes (MACs, HMAC, CMAC, and GMAC)

A message authentication code (unfortunately abbreviated MAC or sometimes MIC but unrelated to the link-layer MAC addresses) can be used to ensure message integrity and authentication. MACs are usually based on keyed cryptographic hash functions, which are like message digest algorithms but require a secret key shared by the communicating parties to produce or verify the integrity of a message, and may also be used to verify (authenticate) the message's sender.

MACs require resistance to various forms of forgery.

- For a given keyed hash function H(M,K) taking input message M and key K, resistance to selective forgery means that it is difficult for an adversary not knowing K to form H(M,K) given a specific M.
- H(M,K) is resistant to existential forgery if it is difficult for an adversary lacking K to find any previously unknown valid combination of M and H(M,K).

Note that MACs do not provide exactly the same features as digital signatures. For example, they cannot be a solid basis for nonrepudiation because the secret key is known to more than one party.

A standard MAC that uses cryptographic hash functions in a particular way is called the keyed-hash message authentication code (HMAC) [FIPS198][RFC2104].

- The HMAC "algorithm" uses a generic cryptographic hash algorithm, say H(M).
- To form a t-byte HMAC on message M with key K using H (called HMAC-H), we use the following definition:

$$
\text{HMAC-}H(K, M)_t = \Lambda_t\Big(H\big((K \oplus \text{opad}) \,\|\, H((K \oplus \text{ipad}) \,\|\, M)\big)\Big)
$$

In this definition, opad (outer pad) is an array containing the value 0x5C repeated B times, and ipad (inner pad) is an array containing the value 0x36 repeated B times, where B is the block length of H in bytes (64 for MD5, SHA-1, and SHA-256) and K has first been padded with zero bytes to length B (a key longer than B is hashed first). ⊕ is the vector XOR operator, and || is the concatenation operator. Normally the HMAC output is intended to be a certain number t of bytes in length, so the operator Λt(M) takes the left-most t bytes of M.
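The definition translates almost line for line into code. The sketch below builds HMAC-SHA256 from `hashlib` primitives following RFC 2104, including the key handling. It is written only to mirror the formula and can be cross-checked against Python's standard `hmac` module. In real code, use the library function directly. The function name is hypothetical.

```python
# HMAC written out from its definition (RFC 2104), for illustration.
import hashlib
import hmac

def hmac_from_definition(key: bytes, message: bytes, t: int, hash_name: str = "sha256") -> bytes:
    block_size = hashlib.new(hash_name).block_size   # B: 64 bytes for SHA-256
    if len(key) > block_size:
        key = hashlib.new(hash_name, key).digest()   # keys longer than B are hashed first
    key = key.ljust(block_size, b"\x00")             # then zero-padded to B bytes
    k_ipad = bytes(b ^ 0x36 for b in key)            # K XOR ipad
    k_opad = bytes(b ^ 0x5C for b in key)            # K XOR opad
    inner = hashlib.new(hash_name, k_ipad + message).digest()
    outer = hashlib.new(hash_name, k_opad + inner).digest()
    return outer[:t]                                 # Lambda_t: keep the left-most t bytes

key = b"key"
msg = b"The quick brown fox jumps over the lazy dog"
full_tag = hmac_from_definition(key, msg, 32)
short_tag = hmac_from_definition(key, msg, 16)       # a truncated, 16-byte HMAC
```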

More recently, other forms of MACs have been standardized, called the cipher-based MAC (CMAC) [FIPS800-38B] and GMAC [NIST800-38D].

- Instead of using a cryptographic hash function as HMAC does, these use a block cipher such as AES (CMAC may also be used with 3DES).
- CMAC is envisioned for use in environments where it is more convenient or efficient to use a block cipher in place of a hash function.

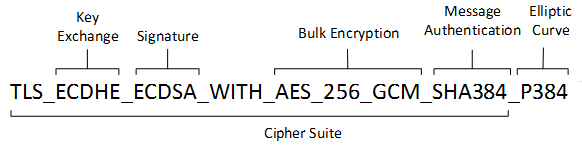

2.10. Cryptographic Suites and Cipher Suites

The combination of mathematical or cryptographic techniques used in a particular system, especially in the Internet protocols, is called a cryptographic suite or sometimes a cipher suite, although the first term is more accurate. Such a suite defines not only an enciphering (encryption) algorithm but may also include a particular MAC algorithm, PRF (pseudorandom function family), key agreement algorithm, signature algorithm, and associated key lengths and parameters.

```console
$ openssl ciphers -v -s -tls1_3
TLS_AES_256_GCM_SHA384 TLSv1.3 Kx=any Au=any Enc=AESGCM(256) Mac=AEAD
TLS_CHACHA20_POLY1305_SHA256 TLSv1.3 Kx=any Au=any Enc=CHACHA20/POLY1305(256) Mac=AEAD
TLS_AES_128_GCM_SHA256 TLSv1.3 Kx=any Au=any Enc=AESGCM(128) Mac=AEAD
```

Cipher suite: From Wikipedia, the free encyclopedia

A cipher suite is a set of algorithms that help secure a network connection. Suites typically use Transport Layer Security (TLS) or its now-deprecated predecessor Secure Socket Layer (SSL). The set of algorithms that cipher suites usually contain include: a key exchange algorithm, a bulk encryption algorithm, and a message authentication code (MAC) algorithm. [CSWIKIPEDIA]

The key exchange algorithm is used to exchange a key between two devices. This key is used to encrypt and decrypt the messages being sent between two machines. The bulk encryption algorithm is used to encrypt the data being sent. The MAC algorithm provides data integrity checks to ensure that the data sent does not change in transit. In addition, cipher suites can include signatures and an authentication algorithm to help authenticate the server and or client.

Table 2. Algorithms supported in TLS 1.0–1.2 cipher suites

| Key exchange/agreement | Authentication | Block/stream ciphers | Message authentication |
|---|---|---|---|
| RSA | RSA | RC4 | Hash-based MD5 |
| Diffie–Hellman | DSA | Triple DES | SHA hash function |
| ECDH | ECDSA | AES | |
| SRP | | IDEA | |
| PSK | | DES | |
| | | Camellia | |
| | | ChaCha20 | |


In TLS 1.3, many legacy algorithms that were supported in early versions of TLS have been dropped in an effort to make the protocol more secure.

| Value | Description | DTLS-OK | Recommended | Reference |
|---|---|---|---|---|
| 0x13,0x01 | TLS_AES_128_GCM_SHA256 | Y | Y | [RFC8446] |
| 0xD0,0x05 | TLS_ECDHE_PSK_WITH_AES_128_CCM_SHA256 | Y | Y | [RFC8442] |
| 0xD0,0x01 | TLS_ECDHE_PSK_WITH_AES_128_GCM_SHA256 | Y | Y | [RFC8442] |
| 0xC0,0x2F | TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256 | Y | Y | [RFC5289] |
| 0xC0,0x2B | TLS_ECDHE_ECDSA_WITH_AES_128_GCM_SHA256 | Y | Y | [RFC5289] |
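The suite names in these tables follow a regular pattern. In TLS 1.0 through 1.2 names, the part before `_WITH_` gives the key exchange and authentication algorithms. The part after it gives the bulk cipher (with its mode) and a hash used for the MAC and/or key derivation. TLS 1.3 names carry only the cipher and hash, because key exchange and authentication are negotiated separately. The sketch below splits a name along those lines. `parse_suite` and `SuiteParts` are hypothetical names, and the splitting rule is a simplification that does not cover every name in the registry.

```python
from typing import NamedTuple, Optional


class SuiteParts(NamedTuple):
    key_exchange: Optional[str]    # None: negotiated separately (TLS 1.3)
    authentication: Optional[str]  # None: negotiated separately (TLS 1.3)
    cipher: str                    # bulk encryption algorithm, key length, and mode
    hash: str                      # hash used for the MAC and/or key derivation


def parse_suite(name: str) -> SuiteParts:
    """Split an IANA TLS cipher suite name into its components (simplified rule)."""
    if not name.startswith("TLS_"):
        raise ValueError(f"not a TLS suite name: {name}")
    body = name[len("TLS_"):]
    if "_WITH_" in body:                     # TLS 1.0-1.2 style
        kx_au, rest = body.split("_WITH_", 1)
        parts = kx_au.split("_")
        key_exchange = parts[0]
        # A single token (e.g. TLS_RSA_WITH_...) means RSA both exchanges keys and authenticates.
        authentication = parts[1] if len(parts) > 1 else parts[0]
    else:                                    # TLS 1.3 style: cipher and hash only
        key_exchange = authentication = None
        rest = body
    cipher, _, hash_name = rest.rpartition("_")
    return SuiteParts(key_exchange, authentication, cipher, hash_name)


for suite in ("TLS_AES_128_GCM_SHA256",
              "TLS_ECDHE_PSK_WITH_AES_128_CCM_SHA256",
              "TLS_ECDHE_ECDSA_WITH_AES_128_GCM_SHA256"):
    print(suite, parse_suite(suite))
```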

Usually, an encryption algorithm is specified by its name and description, the number of bits used for its keys (e.g., 128 or 256 bits), and its operating mode.

-

Encryption algorithms that have been standardized for use with Internet protocols include AES, 3DES, NULL [RFC2410], and CAMELLIA [RFC3713].

The NULL encryption algorithm does not modify the input and is used in certain circumstances where confidentiality is not required.

-

The operating mode of an encryption algorithm, especially a block cipher, describes how to use the encryption function for a single block repeatedly (e.g., in a cascade) to encrypt or decrypt an entire message with a single key.

-

When performing encryption using CBC (cipher block chaining) mode, a cleartext block to be encrypted is first XORed with the previous ciphertext block (the first block is XORed with a random initialization vector or IV).

-

Encrypting in CTR (counter) mode involves first creating a value combining a nonce (or IV) and a counter that increments with each successive block to be encrypted.

The combination is then encrypted, the output is XORed with a cleartext block to produce a ciphertext block, and the process repeats for successive blocks.

In effect, this approach uses a block cipher to produce a keystream, a sequence of (random-appearing) bits that are combined (e.g., XORed) with cleartext bits to produce a ciphertext. Doing so essentially converts a block cipher into a stream cipher because no explicit padding of the input is required.

-

CBC requires a serial process for encryption and a partly serial process for decryption, whereas counter mode algorithms allow more efficient fully parallel encryption and decryption implementations. Consequently, counter mode is gaining popularity.

-

In addition, variants of CTR mode (e.g., counter mode with CBC-MAC (CCM), Galois Counter Mode, or GCM) can be used for authenticated encryption [RFC4309], and possibly to authenticate (but not encrypt) additional data (called authenticated encryption with associated data or AEAD) [RFC5116].

-

When an encryption algorithm is specified as part of a cryptographic suite, its name usually includes the mode, and the key length is often implied.

For example, ENCR_AES_CTR refers to AES-128 used in CTR mode.

-

When a PRF (pseudorandom function family) is included in the definition of a cryptographic suite, it is usually based on a cryptographic hash algorithm family such as SHA-2 [RFC6234] or a cryptographic MAC such as CMAC [RFC4434][RFC4615].

For example, the algorithm AES-CMAC-PRF-128 refers to a PRF constructed using a CMAC based on AES-128. It is also written as PRF_AES128_CMAC. The algorithm PRF_HMAC_SHA1 refers to a PRF based on HMAC-SHA1.
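The CTR construction described above can be sketched in a few lines. Python's standard library has no AES, so the sketch substitutes a keyed HMAC-SHA256 truncated to 16 bytes for the block encryption function. This stand-in (`toy_block_function`, a hypothetical name) is not a block cipher because it cannot be inverted. That is itself instructive: CTR mode only ever calls the forward (encryption) direction, so no inverse is needed. The 8-byte nonce plus 8-byte counter layout is also an assumption.

```python
import hashlib
import hmac
import os

BLOCK = 16  # bytes; AES also uses a 128-bit block


def toy_block_function(key: bytes, block: bytes) -> bytes:
    """Stand-in for AES-Encrypt(K, block): truncated HMAC-SHA256.
    Assumption for illustration only; this is NOT a real block cipher."""
    return hmac.new(key, block, hashlib.sha256).digest()[:BLOCK]


def keystream_block(key: bytes, nonce: bytes, counter: int) -> bytes:
    # Counter block = 8-byte nonce || 8-byte big-endian counter (assumed layout)
    return toy_block_function(key, nonce + counter.to_bytes(8, "big"))


def ctr_xcrypt(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """Encrypt or decrypt: XOR the data with the keystream, block by block."""
    out = bytearray()
    for i in range(0, len(data), BLOCK):
        ks = keystream_block(key, nonce, i // BLOCK)  # depends only on the counter
        chunk = data[i:i + BLOCK]                     # last chunk may be short
        out += bytes(c ^ k for c, k in zip(chunk, ks))
    return bytes(out)


key, nonce = os.urandom(32), os.urandom(8)
msg = b"Counter mode needs no padding, and blocks are independent."
ct = ctr_xcrypt(key, nonce, msg)
assert len(ct) == len(msg)                # no padding: same length as the input
assert ctr_xcrypt(key, nonce, ct) == msg  # the same operation decrypts

# Block j can be decrypted on its own, which is what makes CTR parallelizable.
j = 2
ks_j = keystream_block(key, nonce, j)
assert bytes(c ^ k for c, k in zip(ct[j * BLOCK:(j + 1) * BLOCK], ks_j)) == msg[j * BLOCK:(j + 1) * BLOCK]
```

Reusing a nonce with the same key repeats the keystream. XORing two such ciphertexts then cancels the keystream and reveals the XOR of the two plaintexts. This is why CTR-based modes such as GCM require a unique nonce per message.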

Key agreement parameters, when included with an Internet cryptographic suite definition, refer to DH group definitions, as no other key agreement protocol is in widespread use. When DH key agreement is used in generating keys for a particular encryption algorithm, care must be taken to ensure that the keys produced are of sufficient length (strength) to avoid compromising the security of the encryption algorithm.

A signature algorithm is sometimes included in the definition of a cryptographic suite. It may be used for signing a variety of values including data, MACs, and DH values. The most common is to use RSA to sign a hashed value for some block of data, although the digital signature standard (written as DSS or DSA to indicate the digital signature algorithm) [FIPS186-3] is also used in some circumstances. With the advent of ECC, signatures based on elliptic curves (e.g., ECDSA [X9.62-2005]) are also now supported in many systems.
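Signing a hashed value with RSA can be illustrated with textbook RSA and deliberately tiny primes (p = 61, q = 53, a common classroom example). This is a sketch, not a usable signature scheme. Real RSA signatures use moduli of 2048 bits or more and a padding scheme such as PKCS #1 v1.5 or PSS, both omitted here. Reducing the hash modulo the tiny n is likewise an assumption made only so the numbers fit.

```python
import hashlib

# Textbook RSA with tiny, insecure parameters (illustration only)
p, q = 61, 53
n = p * q                # modulus: 3233
e = 17                   # public exponent
phi = (p - 1) * (q - 1)  # 3120
d = pow(e, -1, phi)      # private exponent 2753 (modular inverse, Python 3.8+)


def digest_mod_n(data: bytes) -> int:
    # Hash the data, then reduce into [0, n); real schemes pad the hash instead
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % n


def sign(data: bytes) -> int:
    return pow(digest_mod_n(data), d, n)         # s = H(m)^d mod n


def verify(data: bytes, sig: int) -> bool:
    return pow(sig, e, n) == digest_mod_n(data)  # check s^e mod n == H(m)


msg = b"block of data to be signed"
s = sign(msg)
print(verify(msg, s))  # True
```

Only the short hash is exponentiated, never the whole block of data. This is why signing a hash is far cheaper than applying RSA to the data itself.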

The concept of a cryptographic suite evolved in the context of Internet security protocols because of a need for modularity and decoupled evolution.

-

As computational power has improved, older cryptographic algorithms and smaller key lengths have fallen victim to various forms of brute-force attacks.

-

In some cases, more sophisticated attacks have revealed flaws that necessitate the replacement of the underlying mathematical and cryptographic methods, but the basic protocol machinery is otherwise sound.

-

As a result, the choice of a cryptographic suite can now be made separately from the communication protocol details and depends on factors such as convenience, performance, and security.

-

Protocols tend to make use of the components of a cryptographic suite in a standard way, so an appropriate cryptographic suite can be “snapped in” when deemed appropriate.

3. Certificates, Certificate Authorities (CAs), and PKIs

Key management (how keys are created, exchanged, and revoked) remains one of the greatest challenges in deploying cryptographic systems on a widespread basis across multiple administrative domains.

One of the challenges with public key cryptosystems is to determine the correct public key for a principal or identity.

In our running example, if Alice were to send her public key to Bob, Mallory could modify it in transit to be her own public key, and Bob (called the relying party here) might unknowingly be using Mallory’s key, thinking it is Alice’s. This would allow Mallory to effectively masquerade as Alice.

To address this problem, a public key certificate is used to bind an identity to a particular public key using a digital signature.

At first glance, this presents a certain “chicken-egg” problem: How can a public key become signed if the digital signature itself requires a reliable public key?

One model, called a web of trust, involves having a certificate (identity/key binding) endorsed by a collection of existing users (called endorsers).

-

An endorser signs a certificate and distributes the signed certificate.

The more endorsers for a certificate over time, the more reliable it is likely to be.

An entity checking a certificate might require some number of endorsers or possibly some particular endorsers to trust the certificate.

-

The web of trust model is decentralized and “grassroots” in nature, with no central authority. This has mixed consequences.

Having no central authority suggests that the scheme will not collapse because of a single point of failure, but it also means that a new entrant may experience some delay in getting its key endorsed to a degree sufficient to be trusted by a significant number of users.

-

The web of trust model was first described as part of the Pretty Good Privacy (PGP) encryption system for electronic mail [NAZ00], which has evolved to support a standard encoding format called OpenPGP, defined by [RFC4880].
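The relying-party rule described above can be expressed as a small policy check: a minimum number of endorsers, possibly including particular ones. This sketch assumes the endorsement signatures have already been verified cryptographically. `EndorsedCert` and `is_trusted` are hypothetical names, not part of PGP or OpenPGP, and real implementations use more elaborate trust models.

```python
from dataclasses import dataclass, field


@dataclass
class EndorsedCert:
    identity: str
    public_key: str                              # placeholder for real key material
    endorsers: set = field(default_factory=set)  # principals whose signatures were verified


def is_trusted(cert: EndorsedCert, trusted: set, min_endorsers: int = 2,
               required: frozenset = frozenset()) -> bool:
    """Hypothetical policy: enough endorsers we already trust, including any required ones."""
    known = cert.endorsers & trusted             # endorsements from unknown keys count for nothing
    return len(known) >= min_endorsers and required <= known


alice = EndorsedCert("alice@example.com", "ALICE-KEY", {"bob", "carol", "mallory"})
my_trusted = {"bob", "carol", "dave"}
print(is_trusted(alice, my_trusted))                                # True (bob and carol)
print(is_trusted(alice, my_trusted, min_endorsers=3))               # False
print(is_trusted(alice, my_trusted, required=frozenset({"dave"})))  # False
```

Mallory's endorsement counts for nothing because the relying party does not already trust Mallory. This is how the model limits the effect of endorsements from unknown parties.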

A more formal approach, which has the added benefit of being provably secure under certain theoretical assumptions in exchange for more dependence on a centralized authority, involves the use of a public key infrastructure (PKI).

-

A PKI is a service that operates with a collection of certificate authorities (CAs) responsible for creating, revoking, distributing, and updating key pairs and certificates.

-

A CA is an entity and service set up to manage and attest to the bindings between identities and their corresponding public keys. There are several hundred commercial CAs.

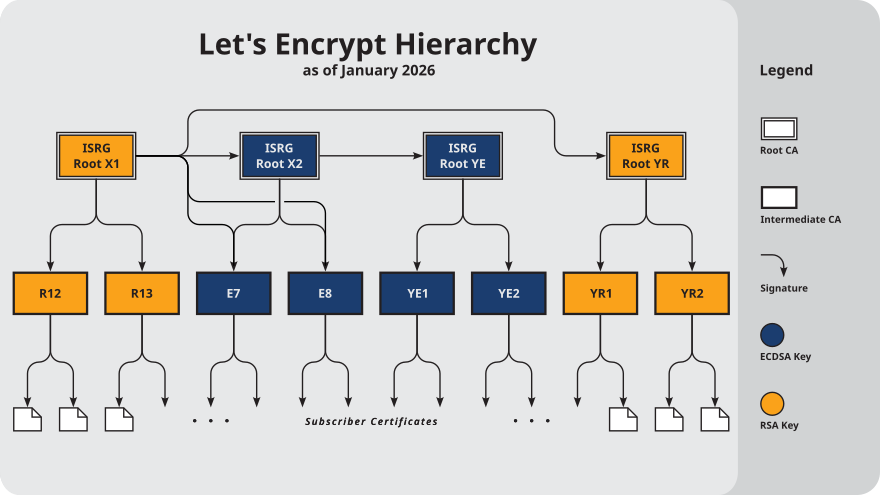

A CA usually employs a hierarchical signing scheme. This means that a public key may be signed using a parent key, which is in turn signed by a grandparent key, and so on. Ultimately, a CA has one or more root certificates upon which many subordinate certificates depend for trust.

Figure 10. Let’s Encrypt’s Hierarchy as of August 2021

-

An entity that is authoritative for certificates and keys (e.g., a CA) is called a trust anchor, although this term is also used to describe the certificates or other cryptographic material associated with such entities [RFC6024].

3.1. Public Key Certificates, Certificate Authorities, and X.509

While several types of certificates have been used in the past, the one of most interest to us is based on an Internet profile of the ITU-T X.509 standard [RFC5280].

In addition, any particular certificate may be stored and exchanged in a number of file or encoding formats. The most common ones include DER, PEM (a Base64 encoded version of DER), PKCS#7 (P7B), PKCS#12 (PFX), and PKCS#1 [RFC3447].
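PEM is simply Base64-encoded DER placed between header and footer lines, so converting between the two needs no certificate parsing at all. The sketch below assumes the usual 64-character line length (as specified for strict PEM generators in RFC 7468). It uses arbitrary bytes as a stand-in for a real DER certificate and cross-checks the result with the standard library's `ssl.PEM_cert_to_DER_cert`. The helper names are hypothetical.

```python
import base64
import ssl


def der_to_pem(der: bytes, label: str = "CERTIFICATE") -> str:
    """Base64-encode DER bytes, wrap at 64 characters, and add BEGIN/END lines."""
    b64 = base64.b64encode(der).decode("ascii")
    lines = [b64[i:i + 64] for i in range(0, len(b64), 64)]
    return f"-----BEGIN {label}-----\n" + "\n".join(lines) + f"\n-----END {label}-----\n"


def pem_to_der(pem: str) -> bytes:
    """Strip the BEGIN/END lines and Base64-decode what remains."""
    lines = pem.strip().splitlines()
    if not (lines[0].startswith("-----BEGIN ") and lines[-1].startswith("-----END ")):
        raise ValueError("not a PEM block")
    return base64.b64decode("".join(lines[1:-1]))


der = bytes(range(200))  # arbitrary bytes standing in for a DER certificate
pem = der_to_pem(der)
print(pem)
assert pem_to_der(pem) == der
assert ssl.PEM_cert_to_DER_cert(pem) == der  # the standard library agrees
```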

Today, Internet PKI-related standards tend to use the cryptographic message syntax [RFC5652], which is based on PKCS#7 version 1.5.

Certificates are primarily used in identifying four types of entities on the Internet: individuals, servers, software publishers, and CAs. Certificate classes are primarily a convenience for grouping and naming types of certificates and for defining different security policies associated with them.

In practice, systems requiring public key operations have root certificates for popular CAs installed at configuration time (e.g., Microsoft Internet Explorer, Mozilla’s Firefox, and Google’s Chrome are all capable of accessing a preconfigured database of root certificates), to solve the chicken-egg PKI bootstrapping problem.

The openssl command, available for most common platforms including Linux and Windows, allows us to see the certificates for a Web site:

$ openssl version -d

OPENSSLDIR: "/usr/lib/ssl"

$ openssl s_client -CApath /usr/lib/ssl/certs/ -connect www.digicert.com:443 > digicert.out 2>&1

^C (to interrupt)

-

The first command determines where the local system stores its preconfigured CA certificates. This is usually a directory that varies by system.

-

The next command makes a connection to the HTTPS port (443) on the www.digicert.com server and redirects the output, including standard error, to the file digicert.out.

-

The openssl command takes care to print the entity identified by each of the certificates, and at what depth they are in the certificate hierarchy relative to the root (depth 0 is the server’s certificate, so the depth numbers are counted bottom to top).

$ head digicert.out
CONNECTED(00000003)
---
Certificate chain
 0 s:jurisdictionC = US, jurisdictionST = Utah, businessCategory = Private Organization, serialNumber = 5299537-0142, C = US, ST = Utah, L = Lehi, O = "DigiCert, Inc.", CN = www.digicert.com
   i:C = US, O = DigiCert Inc, CN = DigiCert EV RSA CA G2
   a:PKEY: rsaEncryption, 2048 (bit); sigalg: RSA-SHA256
   v:NotBefore: Jun 26 00:00:00 2023 GMT; NotAfter: Jun 25 23:59:59 2024 GMT
 1 s:C = US, O = DigiCert Inc, CN = DigiCert EV RSA CA G2
   i:C = US, O = DigiCert Inc, OU = www.digicert.com, CN = DigiCert Global Root G2
   a:PKEY: rsaEncryption, 2048 (bit); sigalg: RSA-SHA256

-

It also checks the certificates against the stored CA certificates to see if they verify properly. In this case, they do, as indicated by the verify return code having the value 0 (ok):

$ grep 'return code' digicert.out
Verify return code: 0 (ok)

To get the certificate into a more usable form, we can extract the certificate data, convert it, and place the result into a PEM-encoded certificate file:

$ openssl x509 -in digicert.out -out digicert.pem

Given the certificate in PEM format, we can now use a variety of openssl functions to manipulate and inspect it. At the highest level, the certificate includes some data to be signed (called the To Be Signed (TBS) certificate) followed by a signature algorithm identifier and signature value.

$ openssl x509 -in digicert.pem -text

Certificate:

Data:

Version: 3 (0x2)

Serial Number:

09:fc:b7:40:3f:fd:79:b6:8f:e2:4f:74:80:5f:5d:00

Signature Algorithm: sha256WithRSAEncryption

Issuer: C = US, O = DigiCert Inc, CN = DigiCert EV RSA CA G2

Validity

Not Before: Jun 26 00:00:00 2023 GMT

Not After : Jun 25 23:59:59 2024 GMT

Subject: jurisdictionC = US, jurisdictionST = Utah, businessCategory = Private Organization, serialNumber = 5299537-0142, C = US, ST = Utah, L = Lehi, O = "DigiCert, Inc.", CN = www.digicert.com

Subject Public Key Info:

Public Key Algorithm: rsaEncryption

Public-Key: (2048 bit)

Modulus:

00:98:df:33:59:c1:3b:a7:38:8c:5d:9e:2f:e3:cf:

...

c0:ca:25:49:9d:45:d0:67:7e:d9:78:c9:0e:34:95:

88:39

Exponent: 65537 (0x10001)

X509v3 extensions:

X509v3 Authority Key Identifier:

6A:4E:50:BF:98:68:9D:5B:7B:20:75:D4:59:01:79:48:66:92:32:06

X509v3 Subject Key Identifier:

D4:38:B0:9D:E2:63:52:91:C7:82:03:F0:1F:00:CE:EE:A0:FA:B7:93

X509v3 Subject Alternative Name:

DNS:www.digicert.com, DNS:digicert.com, DNS:admin.digicert.com, DNS:api.digicert.com, DNS:content.digicert.com, DNS:order.digicert.com, DNS:login.digicert.com, DNS:ws.digicert.com

X509v3 Key Usage: critical

Digital Signature, Key Encipherment

X509v3 Extended Key Usage:

TLS Web Server Authentication, TLS Web Client Authentication

X509v3 CRL Distribution Points:

Full Name:

URI:http://crl3.digicert.com/DigiCertEVRSACAG2.crl

Full Name:

URI:http://crl4.digicert.com/DigiCertEVRSACAG2.crl

X509v3 Certificate Policies:

Policy: 2.16.840.1.114412.2.1

Policy: 2.23.140.1.1

CPS: http://www.digicert.com/CPS

Authority Information Access:

OCSP - URI:http://ocsp.digicert.com

CA Issuers - URI:http://cacerts.digicert.com/DigiCertEVRSACAG2.crt

X509v3 Basic Constraints:

CA:FALSE

CT Precertificate SCTs:

Signed Certificate Timestamp:

Version : v1 (0x0)

Log ID : 76:FF:88:3F:0A:B6:FB:95:51:C2:61:CC:F5:87:BA:34:

B4:A4:CD:BB:29:DC:68:42:0A:9F:E6:67:4C:5A:3A:74

Timestamp : Jun 26 17:26:00.704 2023 GMT

Extensions: none

Signature : ecdsa-with-SHA256

30:46:02:21:00:89:EB:FD:DB:D0:80:4F:31:30:73:D8:

...

27:74:33:78:C4:AC:AF:18

Signed Certificate Timestamp:

Version : v1 (0x0)

Log ID : 48:B0:E3:6B:DA:A6:47:34:0F:E5:6A:02:FA:9D:30:EB:

1C:52:01:CB:56:DD:2C:81:D9:BB:BF:AB:39:D8:84:73

Timestamp : Jun 26 17:26:00.754 2023 GMT

Extensions: none

Signature : ecdsa-with-SHA256

30:44:02:20:79:AB:36:3F:F9:22:B1:E1:2D:F4:57:16:

...

55:46:5E:B2:83:16

Signed Certificate Timestamp:

Version : v1 (0x0)

Log ID : 3B:53:77:75:3E:2D:B9:80:4E:8B:30:5B:06:FE:40:3B:

67:D8:4F:C3:F4:C7:BD:00:0D:2D:72:6F:E1:FA:D4:17

Timestamp : Jun 26 17:26:00.748 2023 GMT

Extensions: none

Signature : ecdsa-with-SHA256

30:44:02:20:3A:F4:92:55:82:0E:1D:06:A6:21:90:C3:

...

CB:3A:14:83:07:27

Signature Algorithm: sha256WithRSAEncryption

Signature Value:

5d:f7:f6:45:62:22:7e:93:dc:9e:5a:62:2b:3c:8a:f1:06:9b:

...

e6:4d:4e:9f

-----BEGIN CERTIFICATE-----

MIIHbDCCBlSgAwIBAgIQCfy3QD/9ebaP4k90gF9dADANBgkqhkiG9w0BAQsFADBE

...

qL35PG7dfEKrx6fD8xlYnWOYSnqNet6EZBCFe+ZNTp8=

-----END CERTIFICATE-----

The decoded version of the certificate followed by an ASCII (PEM) representation of the certificate (between the BEGIN CERTIFICATE and END CERTIFICATE indicators) shows a data portion and a signature portion.

Within the data portion is some metadata including:

-

a Version field, indicating the particular X.509 certificate version (3, the most recent, is encoded using the hex value 0x02),

-

a Serial Number of the particular certificate, a number assigned by the CA unique to each certificate,

-

and a Validity field that gives the time during which the certificate should be treated as legitimate, starting with the Not Before subfield and ending with the Not After subfield.

-

The certificate metadata also indicates which signature algorithm is used to sign the data portion.

In this case (sha256WithRSAEncryption), it is signed by computing a SHA-256 hash (a member of the SHA-2 family) and signing the result using RSA. The signature itself appears at the end of the certificate.

-

The Issuer field indicates the distinguished name (jargon from the ITU-T X.500 standard) of the entity that issued the certificate and may have these special subfields (based on X.501): C (country), L (locality, e.g., city), O (organization), OU (organizational unit), ST (state or province), CN (common name).

-

The Subject field identifies the entity this certificate is about, and the owner of the public key contained in the subsequent Subject Public Key Info field.

In this example, the Subject field is a somewhat complex structure like the Issuer field and contains multiple object IDs (OIDs) [ITUOID]. The version of openssl that printed this output decodes all of them with names: the familiar O, C, ST, L, and CN, plus attributes used in extended validation (EV) certificates such as jurisdictionC, jurisdictionST, and businessCategory. A version that does not recognize a particular OID prints it in numeric form instead.

Note that the CN subfield tends to be an important one when identifying subjects and issuers for certificates used on the Internet.

For this certificate, it gives the correct matching name for the server (along with any names included in the Subject Alternative Name (SAN) extension). Names that differ from the CN but refer to the same server (e.g., https://digicert.com instead of https://www.digicert.com) are also accepted when accessed, because they are listed in the SAN extension. Note that CN is not really the field for holding a DNS name; SANs are intended for this purpose. When a certificate needs to be validated, a recursive process works up the certificate hierarchy to a root CA certificate by matching the issuer distinguished name in one certificate with the subject name in another.

In this case, the certificate was issued by DigiCert EV RSA CA G2 (the issuer’s CN subfield). Assume all certificates are current in their validity periods and are being used in appropriate ways. For validation to succeed, some ancestor of the certificate we are evaluating must then be trusted: its immediate parent, grandparent, etc., but usually a root CA certificate.

-

The Subject Public Key Info field gives the algorithm and public key belonging to the entity specified in the Subject field.

In this case, the public key is an RSA public key with a 2048-bit modulus and public exponent of 65537. The subject is in possession of the matching RSA private key (modulus plus private exponent) that is paired to the public key. If the private key is compromised, or if the public key needs to be changed for other reasons, the public and private keys must be regenerated and a new certificate issued. The old certificate is then revoked.

-

Version 3 X.509 certificates may include zero or more extensions.

Extensions are either critical or noncritical, and some are required by the Internet profile in [RFC5280]. If critical, an extension must be processed and found acceptable by the relying party’s (CPS jargon) policy. Noncritical extensions are processed if supported but do not otherwise cause errors.

-

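The Basic Constraints extension indicates whether the certificate is a CA certificate. [RFC5280] requires this extension to be marked critical in CA certificates; in end-entity certificates such as this one it may be either critical or noncritical (here it is noncritical).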

In this case it is not, so it cannot be used for signing other certificates. A certificate indicating that it is a CA certificate may be used in a certificate validation chain at a location other than a leaf. This is common for root CA certificates or for other certificate-signing certificates (“intermediate” certificates, such as the DigiCert EV RSA CA G2 certificate referenced in this example).

-

The Subject Key Identifier extension identifies the public key in the certificate.

It allows different keys owned by the same subject to be differentiated.

-

The Key Usage extension, a critical extension, determines the valid usage for the key.

Possible usages include digital signature, nonrepudiation (content commitment), key encipherment, data encipherment, key agreement, certificate signing, CRL signing, encipher only, and decipher only.

Because server certificates of this kind are primarily used for identifying the two endpoints of a connection and encrypting a session key, the possible usages may be somewhat limited, as in this case.

-

The Extended Key Usage extension, which may be critical or noncritical, may provide further restrictions on the key use.

Possible values of this extension when used in the Internet profile include the following: TLS client and server authentication, signing of downloadable code, e-mail protection (nonrepudiation and key agreement or encipherment), various IPsec operating modes, and timestamping.

-

The SAN extension allows a single certificate to be used for multiple purposes (e.g., for multiple Web sites with distinct DNS names).

This alleviates the need to have a separate certificate for each Web site, which can significantly reduce cost and administrative burden.

In this case, the certificate can be used for any of the DNS names listed, such as www.digicert.com, digicert.com, content.digicert.com, and so on.

-

The CRL Distribution Points (CDP) extension gives a list of URLs for finding the CA’s certificate revocation list (CRL), a list of revoked certificates used to determine if a certificate in a validation chain has been revoked.

-

The Certificate Policies (CP) extension includes certificate policies applicable to the certificate [RFC5280].

In this example, the CP extension contains three items: two policy identifiers and a CPS qualifier. The Policy value 2.16.840.1.114412.2.1, a DigiCert object identifier (OID), and the Policy value 2.23.140.1.1, a CA/Browser Forum (CABF) OID, both indicate that the certificate complies with an extended validation (EV) policy. The CPS qualifier gives a pointer to the URI where the particular applicable CPS for the policy may be found.

-

The Authority Key Identifier identifies the public key corresponding to the private key used to sign the certificate. It is useful when an issuer has multiple private keys used for generating signatures.

-

The Authority Information Access (AIA) extension indicates where information may be retrieved from the CA.

In this case, it indicates a URI used to determine if the certificate has been revoked using an online query protocol. It also indicates the list of CA issuers, which includes a URL containing the CA certificate responsible for signing the example server certificate.

-

Following the extensions, the certificate contains the signature portion. It contains the identification of the signature algorithm (SHA-2 with RSA here), which must match the Signature Algorithm field we encountered earlier.

In this case, the signature itself is a 256-byte value, corresponding to the 2048-bit modulus used for this use of RSA.

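Several of the fields above work together during validation. The recursive issuer-to-subject matching described for the Issuer and Subject fields, along with the Validity, Basic Constraints, and SAN checks, can be sketched with simplified certificate records. This is a hypothetical model, not an X.509 parser. Distinguished names are plain strings, and the CA certificates' validity dates are placeholders (only the leaf dates come from the example above). The essential cryptographic step, verifying each signature with the parent's public key, is omitted, as are revocation and policy checks.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List


@dataclass(frozen=True)
class Cert:
    subject: str
    issuer: str
    not_before: datetime
    not_after: datetime
    is_ca: bool       # Basic Constraints CA:TRUE / CA:FALSE
    san: tuple = ()   # DNS names from the SAN extension


def build_path(leaf: Cert, pool: Dict[str, Cert], anchors: Dict[str, Cert],
               now: datetime, max_depth: int = 8) -> List[Cert]:
    """Hypothetical sketch: follow issuer -> subject links up to a trust anchor.
    Signature, revocation, and policy checks are deliberately omitted."""
    path, cert = [leaf], leaf
    for _ in range(max_depth):
        if not (cert.not_before <= now <= cert.not_after):
            raise ValueError(f"{cert.subject}: outside validity period")
        if cert.issuer in anchors:          # reached a trusted root (anchor itself not rechecked)
            path.append(anchors[cert.issuer])
            return path
        parent = pool.get(cert.issuer)      # match issuer DN to some certificate's subject DN
        if parent is None:
            raise ValueError(f"no certificate found for issuer {cert.issuer}")
        if not parent.is_ca:
            raise ValueError(f"{parent.subject} is not a CA certificate")
        path.append(parent)
        cert = parent
    raise ValueError("certification path too long")


def name_matches(leaf: Cert, hostname: str) -> bool:
    # Exact, case-insensitive match against SAN DNS names only (no wildcards, CN ignored)
    return hostname.lower() in (n.lower() for n in leaf.san)


utc = timezone.utc
leaf = Cert("CN=www.digicert.com", "CN=DigiCert EV RSA CA G2",
            datetime(2023, 6, 26, tzinfo=utc), datetime(2024, 6, 25, 23, 59, 59, tzinfo=utc),
            False, ("www.digicert.com", "digicert.com", "content.digicert.com"))
inter = Cert("CN=DigiCert EV RSA CA G2", "CN=DigiCert Global Root G2",  # placeholder dates
             datetime(2020, 1, 1, tzinfo=utc), datetime(2030, 1, 1, tzinfo=utc), True)
root = Cert("CN=DigiCert Global Root G2", "CN=DigiCert Global Root G2",  # placeholder dates
            datetime(2013, 1, 1, tzinfo=utc), datetime(2038, 1, 1, tzinfo=utc), True)

when = datetime(2023, 8, 1, tzinfo=utc)
chain = build_path(leaf, {inter.subject: inter}, {root.subject: root}, when)
print([c.subject for c in chain])
print(name_matches(leaf, "digicert.com"), name_matches(leaf, "example.com"))  # True False
```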

3.2. Validating and Revoking Certificates

Within the IETF, [RFC5280] defines the use of X.509 version 3 certificates with X.509 version 2 CRLs for the Internet. CRLs are needed because a certificate may have to be revoked before its validity period ends (e.g., if its private key is compromised) and possibly replaced with a freshly issued certificate.

To validate a certificate, a validation or certification path must be established that includes a set of validated certificates, usually up to some trust anchor (e.g., root certificate) that is already known to the relying party. One of the key steps involves determining if one or more of the certificates in a chain have been revoked. If so, the path validation fails.

In the Internet, there are two primary ways to ensure that entities that wish to use a certificate become aware if it has been revoked: CRLs and the Online Certificate Status Protocol (OCSP) [RFC2560].

When the CRL Distribution Point extension includes an HTTP or FTP URI scheme, as it does in the preceding example, the complete URL gives the name of a file encoded in DER format containing an X.509 CRL. In our example, we can retrieve the CRL corresponding to the certificate using the following command:

$ wget -q http://crl3.digicert.com/DigiCertEVRSACAG2.crl

and print it out as follows:

$ openssl crl -inform DER -in DigiCertEVRSACAG2.crl -text

Certificate Revocation List (CRL):

Version 2 (0x1)

Signature Algorithm: sha256WithRSAEncryption

Issuer: C = US, O = DigiCert Inc, CN = DigiCert EV RSA CA G2

Last Update: Jul 31 19:48:27 2023 GMT

Next Update: Aug 7 19:48:27 2023 GMT

CRL extensions:

X509v3 Authority Key Identifier:

6A:4E:50:BF:98:68:9D:5B:7B:20:75:D4:59:01:79:48:66:92:32:06

X509v3 CRL Number:

1121

Revoked Certificates:

Serial Number: 06AA5017961021B47CA95CE01C312405

Revocation Date: Jul 8 17:31:01 2022 GMT

Serial Number: 02FDC9206F81D00E3311F7B6D920B1A2

Revocation Date: Jul 13 15:19:23 2022 GMT

...

Serial Number: 0C2C2310AFDFF58F2E4A6454FA7B7801

Revocation Date: Jul 31 17:32:07 2023 GMT

Signature Algorithm: sha256WithRSAEncryption

Signature Value:

1f:ee:29:c7:fa:46:03:85:4a:cc:e0:c4:0b:9d:cd:cf:ea:4c:

...

27:ca:42:1b

-----BEGIN X509 CRL-----

MIMCHE8wgwIbNgIBATANBgkqhkiG9w0BAQsFADBEMQswCQYDVQQGEwJVUzEVMBMG

...

3gwZtF3ABgkVW2jJCbM5+tDZzf/jSapQ3fOoPMNqCEknykIb

-----END X509 CRL-----

Here we can see the format of an X.509 v2 CRL.

-

The format is very similar to that of a certificate, and the entire message is signed by a CA as certificates are.

This is useful because CRLs can be distributed like certificates: using otherwise untrusted communication channels and servers.

-

In comparison with a certificate, the validity period is replaced by the times of the last and next CRL updates (the Last Update and Next Update fields).

-

There is no subject and no public key. Instead there is a list of serial numbers for revoked certificates, each with its revocation time and, optionally, a reason for revocation (the entries shown here carry only a revocation date).

-

There may also be CRL extensions that are unique to CRLs.

In this example, the Authority Key Identifier extension gives a number identifying the key used by the CA in signing the CRL. The CRL Number extension gives the sequence number of the CRL. Other values are given in [RFC5280].
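Once a CRL has been obtained, a revocation check comes down to looking up the certificate's serial number in the list. The sketch below parses text in the layout printed by the `openssl crl -text` command above; that layout is an assumption, and a real relying party would decode the DER structure instead. It compares serials as integers, so the colon-separated form used in certificates and the plain hex form used in CRLs match. Before trusting the list, a relying party must also verify the CRL's signature and check its Last Update and Next Update times, which is not shown. The helper names are hypothetical.

```python
import re


def serial_to_int(serial: str) -> int:
    """Certificates print serials as 09:fc:b7:...; CRLs as 09FCB7...; compare as integers."""
    return int(serial.replace(":", "").strip(), 16)


def revoked_serials(crl_text: str) -> set:
    """Collect serial numbers from `openssl crl -text` style output (layout assumed)."""
    return {int(h, 16) for h in re.findall(r"Serial Number:\s*([0-9A-Fa-f]+)", crl_text)}


def is_revoked(cert_serial: str, crl_text: str) -> bool:
    return serial_to_int(cert_serial) in revoked_serials(crl_text)


# Excerpt of the CRL printed above (the full list is elided there as well).
crl_excerpt = """\
Revoked Certificates:
Serial Number: 06AA5017961021B47CA95CE01C312405
Revocation Date: Jul 8 17:31:01 2022 GMT
Serial Number: 02FDC9206F81D00E3311F7B6D920B1A2
Revocation Date: Jul 13 15:19:23 2022 GMT
Serial Number: 0C2C2310AFDFF58F2E4A6454FA7B7801
Revocation Date: Jul 31 17:32:07 2023 GMT
"""
print(is_revoked("09:fc:b7:40:3f:fd:79:b6:8f:e2:4f:74:80:5f:5d:00", crl_excerpt))  # False
```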

OCSP (Online Certificate Status Protocol), the other primary method for determining if a certificate has been revoked, is an application-level request/response protocol usually operated over HTTP (i.e., using the HTTP protocol with TCP/IP on TCP port 80).

-

An OCSP request includes information identifying a particular certificate, plus some optional extensions. A response indicates whether the certificate is not revoked, unknown, or revoked. An error may be returned if the request cannot be parsed or otherwise acted upon.

-

The key used for signing the OCSP response need not match the key used to sign the original certificate. This is possible if the issuing CA has delegated OCSP signing to another key. It does so by issuing a certificate for that key whose Extended Key Usage extension includes the OCSP-signing purpose (id-kp-OCSPSigning).

-

To see an OCSP request/response exchange, we can execute the following commands:

$ # CONNECTED COMMANDS: Q End the current SSL connection and exit.
$ echo "Q" | \
> openssl s_client -connect www.digicert.com:443 2>&1 | openssl x509 -out DigiCert.pem
$ echo "Q" | \
> openssl s_client -connect www.digicert.com:443 2>&1 | openssl x509 -noout -subject -issuer -ext authorityInfoAccess
subject=jurisdictionC = US, jurisdictionST = Utah, businessCategory = Private Organization, serialNumber = 5299537-0142, C = US, ST = Utah, L = Lehi, O = "DigiCert, Inc.", CN = www.digicert.com
issuer=C = US, O = DigiCert Inc, CN = DigiCert EV RSA CA G2
Authority Information Access:
OCSP - URI:http://ocsp.digicert.com
CA Issuers - URI:http://cacerts.digicert.com/DigiCertEVRSACAG2.crt
$ wget -q http://cacerts.digicert.com/DigiCertEVRSACAG2.crt
$ CA=DigiCertEVRSACAG2.crt
$ CERT=DigiCert.pem
$ OCSPURL=http://ocsp.digicert.com
$ openssl ocsp -issuer $CA -cert $CERT -url $OCSPURL -VAfile $CA -no_nonce -text
OCSP Request Data:
Version: 1 (0x0)
Requestor List:
Certificate ID:
Hash Algorithm: sha1
Issuer Name Hash: D613075FB6DEA11BDF0182D397E1D37C6E925509
Issuer Key Hash: 6A4E50BF98689D5B7B2075D45901794866923206
Serial Number: 09FCB7403FFD79B68FE24F74805F5D00
OCSP Response Data:
OCSP Response Status: successful (0x0)
Response Type: Basic OCSP Response
Version: 1 (0x0)
Responder Id: 6A4E50BF98689D5B7B2075D45901794866923206
Produced At: Aug 1 20:19:18 2023 GMT
Responses:
Certificate ID:
Hash Algorithm: sha1
Issuer Name Hash: D613075FB6DEA11BDF0182D397E1D37C6E925509
Issuer Key Hash: 6A4E50BF98689D5B7B2075D45901794866923206
Serial Number: 09FCB7403FFD79B68FE24F74805F5D00
Cert Status: good
This Update: Aug 1 20:03:02 2023 GMT
Next Update: Aug 8 19:03:02 2023 GMT
Signature Algorithm: sha256WithRSAEncryption
Signature Value:
49:59:d8:0f:6c:e4:12:41:ab:0e:7a:4a:ad:94:7c:20:04:5e:
...
bf:cf:a4:ad:95:2b:4b:16:f8:8c:61:79:63:48:42:57:d3:d2:
21:6a:d3:fe
Response verify OK
DigiCert.pem: good
This Update: Aug 1 20:03:02 2023 GMT
Next Update: Aug 8 19:03:02 2023 GMT

-

The request included the identification of a hash algorithm (SHA-1), a hash of the issuer name, a number identifying the issuer’s key (the same as the Authority Key Identifier extension in the certificate), plus the certificate’s serial number.

-

The responder, identified by the responder ID, identifies itself and signs the response. The response includes the hashes and numbers from the request, as well as the certificate status of “good” (i.e., not revoked).

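OCSP-based revocation is not an effective technique to mitigate the compromise of an HTTPS server’s private key [OCSPWIKIPEDIA]. An attacker who wants to abuse a stolen key typically needs a man-in-the-middle position, and from there can also interfere with the client’s OCSP queries. Most clients silently proceed when an OCSP query fails or times out.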

3.3. Attribute Certificates

In addition to public key certificates (PKCs) used to bind names to public keys, X.509 defines another type of certificate called an attribute certificate (AC).

-

ACs are similar in structure to PKCs but lack a public key.

-

They are used to indicate other information, including authorization information that may have a lifetime different from (e.g., shorter than) a corresponding PKC [RFC5755].

-

ACs contain other structures similar to PKCs, including extensions and AC policies.

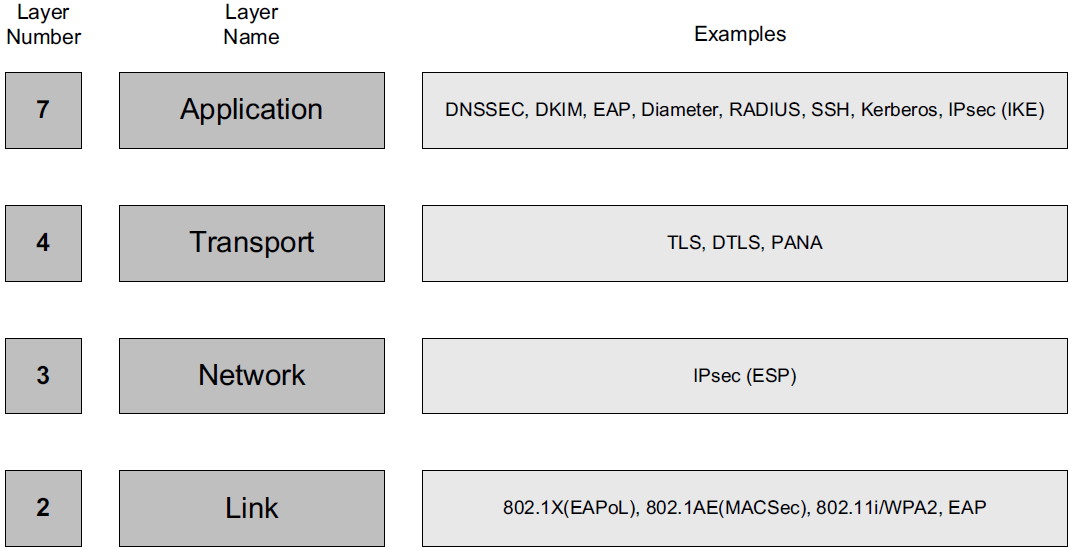

4. TCP/IP Security Protocols and Layering

Protocols involving cryptography can (and do) exist at a number of different layers in the protocol stack.

-

Security services at the link layer protect information only as it flows across a single communication hop,

-

security at the network layer protects information flowing between hosts,

-

security at the transport layer protects process-to-process communication, and

-

security at the application layer protects information manipulated by applications.

It is also possible to protect the data manipulated by applications independently of the communication layers (e.g., files can be encrypted and sent as e-mail attachments).

Of these, TLS and IPsec are the most prevalent security protocols: TLS is used for all secure Web communications (HTTPS), and IPsec provides most network-layer security, including many VPNs.

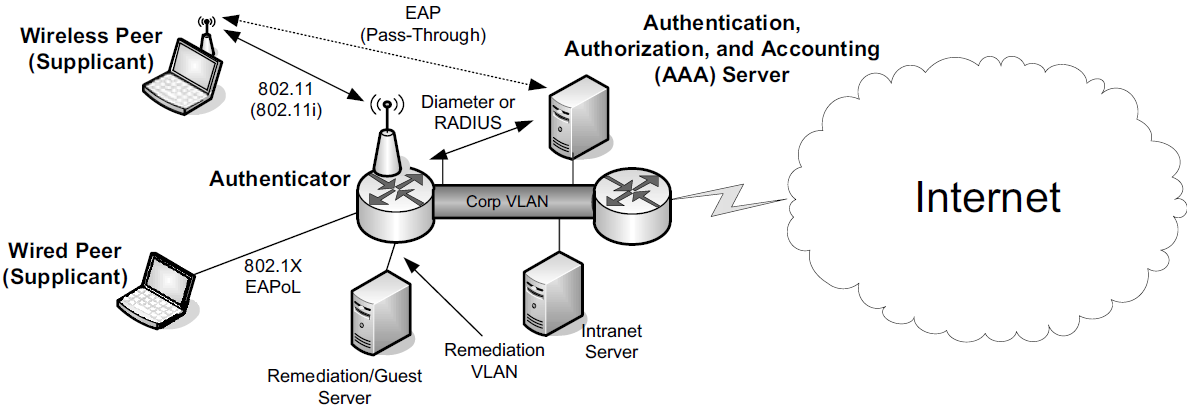

4.1. Network Access Control: 802.1X, 802.1AE, EAP, and PANA

Network Access Control (NAC) refers to methods used to authorize or deny network communications to particular systems or users.

Defined by the IEEE, the 802.1X Port-Based Network Access Control (PNAC) standard is commonly used with TCP/IP networks to support LAN security in enterprises, for both wired and wireless networks.

Used in conjunction with the IETF standard Extensible Authentication Protocol (EAP) [RFC3748], 802.1X is sometimes called EAP over LAN (EAPoL).

In 802.1X, the protocol between the supplicant and the authenticator is divided into a lower and an upper sublayer. The lower layer is called the port access control protocol (PACP). The higher layer is ordinarily some variant of EAP. For use with 802.1AR (X.509 certificates for secure device identities), the variant is called EAP-TLS [RFC5216]. PACP uses EAPoL frames for communication, even if EAP authentication is not used (e.g., when MKA, the MACsec Key Agreement protocol, is used). EAPoL frames use an Ethertype field value of 0x888E.

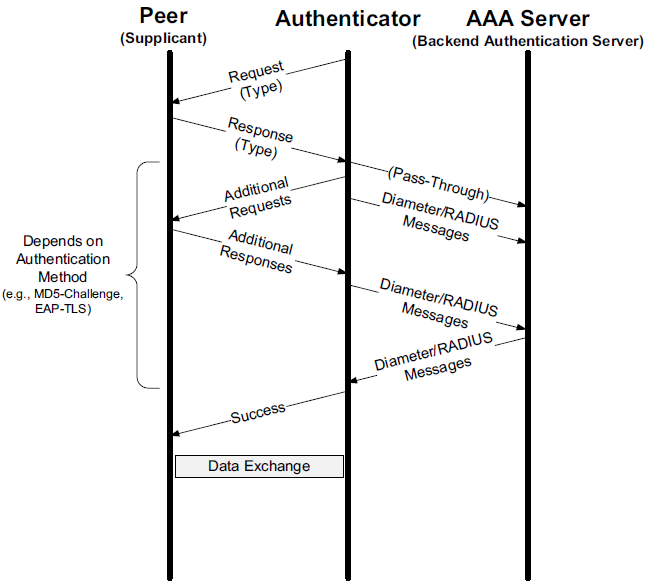

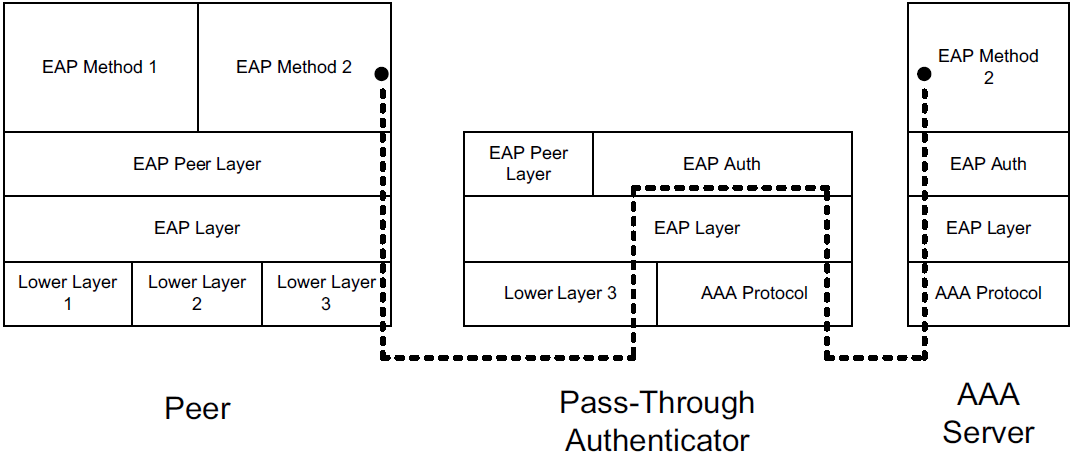

Turning to the IETF standards, we note that EAP is not a single protocol but rather a framework for achieving authentication using a combination of other protocols, such as TLS and IKEv2.

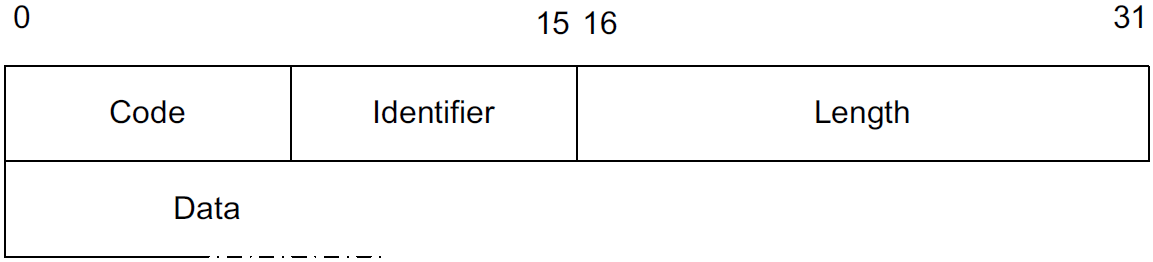

An EAP packet begins with a Code field used for demultiplexing packet types (Request, Response, Success, Failure, Initiate, Finish). The Identifier field helps match requests to responses. In Request and Response messages, the first data byte is a Type field. The Length field gives the number of bytes in the EAP message, including the Code, Identifier, and Length fields.
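The header just described is small enough to decode by hand. The following Python sketch assumes the code values 1 through 6 map to Request, Response, Success, Failure, Initiate, and Finish in that order, and it reads a Type byte only for Request and Response, as the text describes. The function name `parse_eap` is hypothetical; Type value 1 (Identity) comes from the EAP specification.

```python
import struct

EAP_CODES = {1: "Request", 2: "Response", 3: "Success",
             4: "Failure", 5: "Initiate", 6: "Finish"}

def parse_eap(packet: bytes) -> dict:
    """Decode the EAP header (Code, Identifier, Length) and, if present, Type."""
    if len(packet) < 4:
        raise ValueError("EAP header is 4 bytes")
    code, identifier, length = struct.unpack_from("!BBH", packet, 0)
    # Length covers the whole message, including the 4-byte header.
    if length < 4 or length > len(packet):
        raise ValueError("bad EAP Length field")
    msg = {"code": EAP_CODES.get(code, f"unknown({code})"),
           "identifier": identifier, "length": length}
    # Only Request and Response messages carry a Type byte.
    if code in (1, 2):
        if length < 5:
            raise ValueError("Request/Response lacks a Type field")
        msg["type"] = packet[4]
        msg["type_data"] = packet[5:length]
    return msg

# EAP-Response/Identity (Type 1) carrying the identity "alice".
identity = bytes.fromhex("0201000a01") + b"alice"
print(parse_eap(identity))
```

A peer would use the returned `identifier` to pair this Response with the Request that prompted it.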

EAP is a layered architecture that supports its own multiplexing and demultiplexing. Conceptually, it consists of four layers: the lower layer (for which there are multiple protocols), EAP layer, EAP peer/authenticator layer, and EAP methods layer (for which there are many methods).

4.2. Layer 3 IP Security (IPsec)

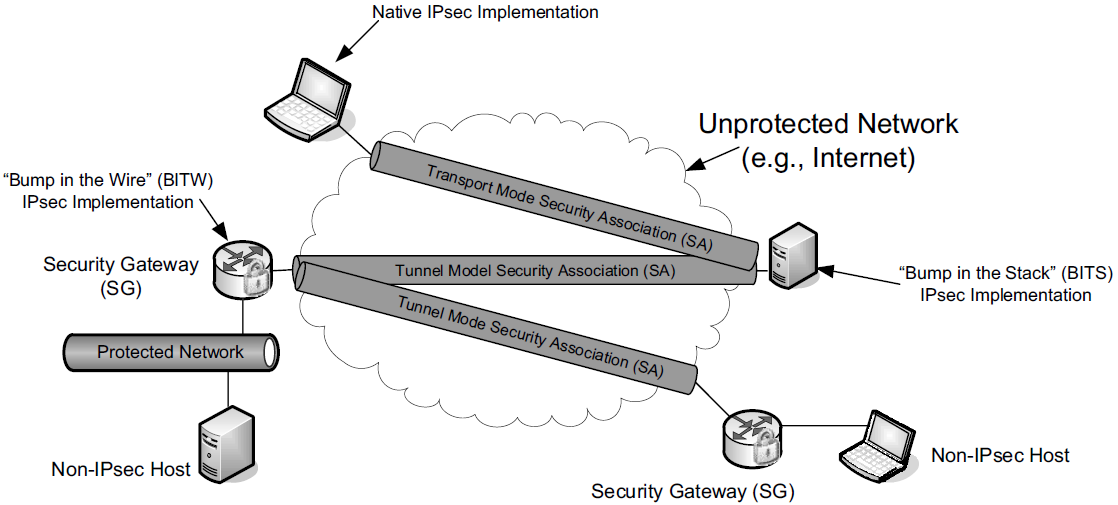

IPsec is an architecture and collection of standards that provide data source authentication, integrity, confidentiality, and access control at the network layer for IPv4 and IPv6 [RFC4301], including Mobile IPv6 [RFC4877]. It also provides a way to exchange cryptographic keys between two communicating parties, a recommended set of cryptographic suites, and a method for signaling the use of compression.

Each communicating party may be an individual host or a security gateway (SG) that provides a boundary between a protected and an unprotected portion of a network.

Thus, IPsec can be used for remote access to a corporate LAN (forming a VPN), to interconnect different portions of an enterprise securely across the open Internet, or to secure the communications of hosts, or of routers acting as hosts, when they exchange routing information.

A host implementation of IPsec may be integrated within the IP stack itself or may act as a driver sitting “below” the rest of the network stack (called the “Bump in the Stack” or BITS implementation).

Alternatively, it may reside inside an inline SG, which is sometimes called the “Bump in the Wire” or BITW implementation approach. For BITW implementations, both host and SG functionality are generally required, because the device typically needs to be managed remotely.

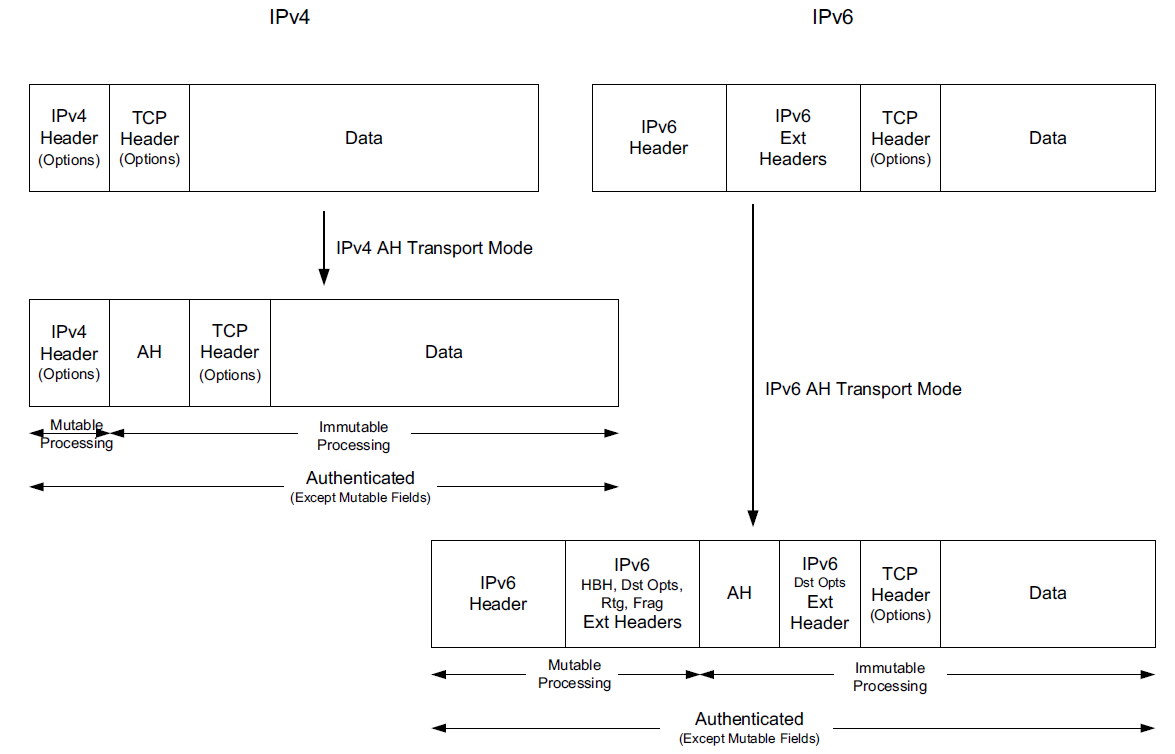

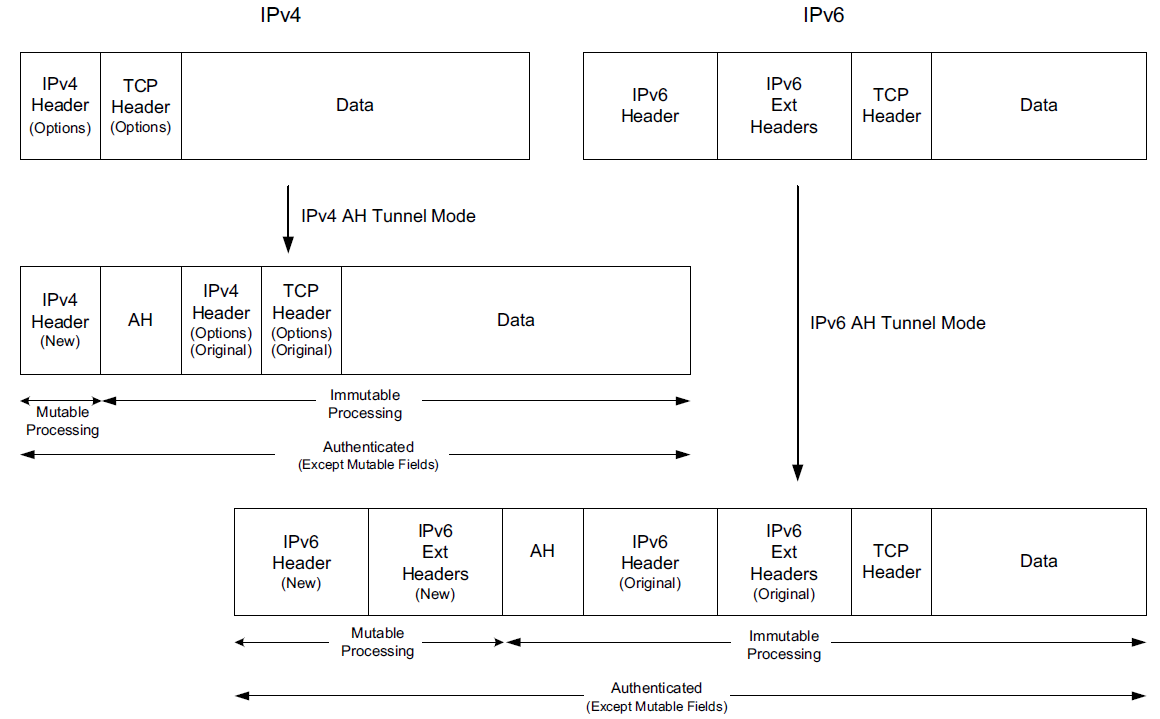

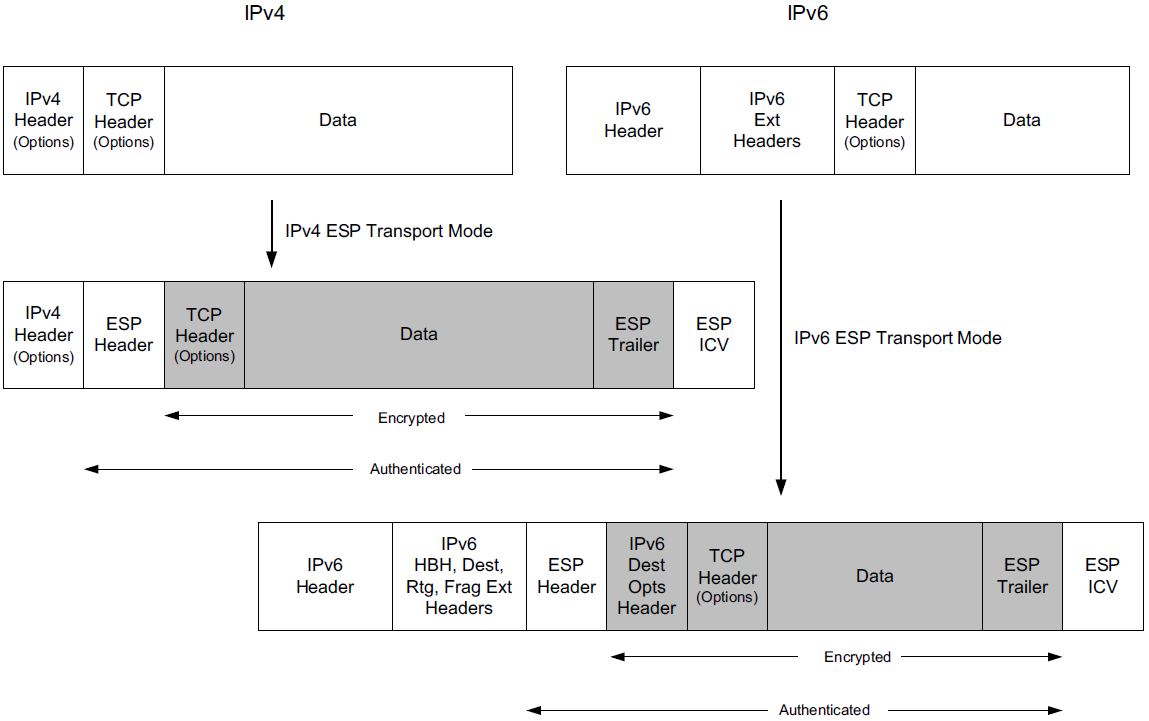

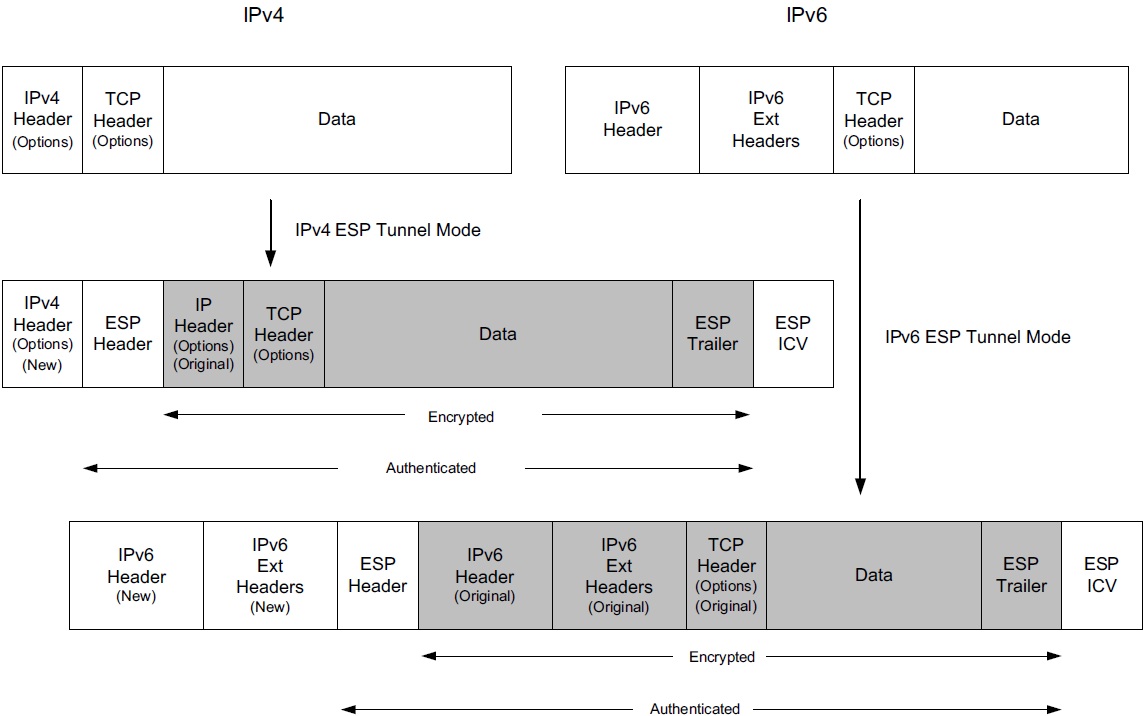

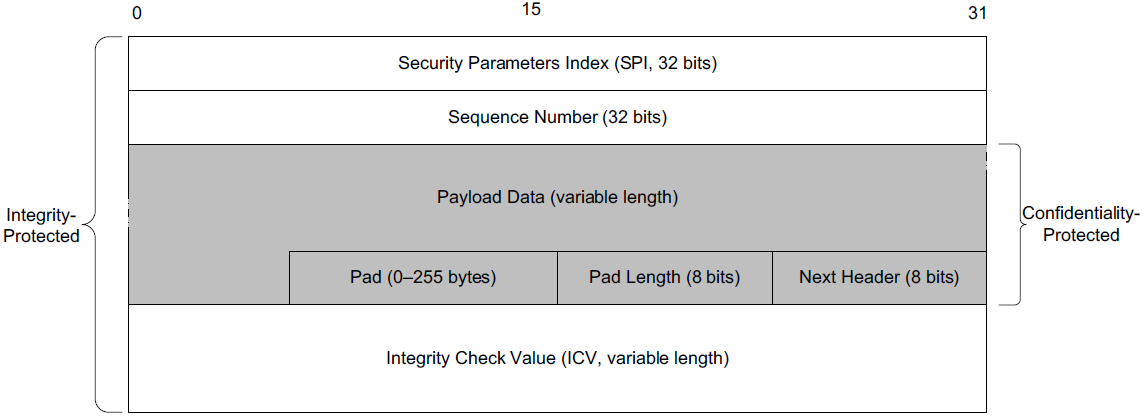

The operation of IPsec can be divided into the establishment phase, where key material is exchanged and a security association (SA) is built, followed by the data exchange phase, where different types of encapsulation schemes, called the Authentication Header (AH) and Encapsulating Security Payload (ESP), may be used in different modes such as tunnel mode or transport mode to protect the flow of IP datagrams.

Each of these IPsec components uses a cryptographic suite, and IPsec is designed to support a wide range of suites.

A complete IPsec implementation includes the SA establishment protocol, AH (optionally), ESP, and a collection of appropriate cryptographic suites, configuration information, and setup tools [RFC6071].

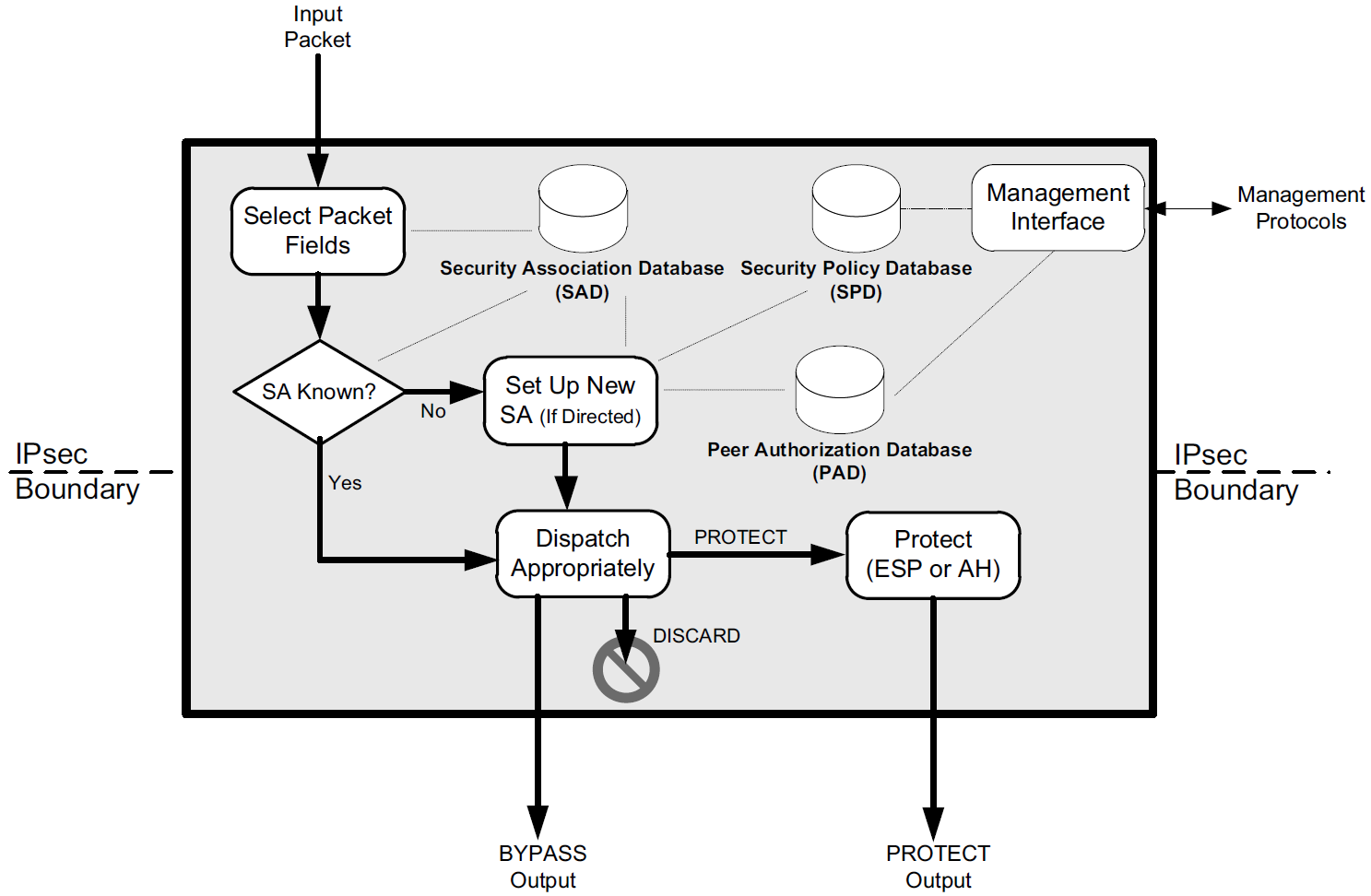

IPsec operates only selectively on certain packets based on policies set by administrators, contained in a security policy database (SPD), logically resident with each IPsec implementation.

IPsec also requires two additional databases called the security association database (SAD) and peer authorization database (PAD), which are consulted when determining how packets are to be handled.
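As an illustration of how policy selects which packets IPsec touches, here is a minimal Python sketch of an ordered SPD lookup. The three actions PROTECT, BYPASS, and DISCARD follow the IPsec architecture, but the selectors are deliberately simplified (remote address range, next-layer protocol, remote port), and the class and function names and the default of DISCARD for unmatched traffic are assumptions made for this example.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import List, Optional

@dataclass
class SpdEntry:
    remote: str                 # remote address range, e.g. "10.1.0.0/16"
    protocol: Optional[int]     # next-layer protocol (6 = TCP, 17 = UDP); None = any
    remote_port: Optional[int]  # None = any port
    action: str                 # "PROTECT", "BYPASS", or "DISCARD"

def spd_lookup(spd: List[SpdEntry], dst: str, protocol: int, dst_port: int) -> str:
    """Return the action of the first entry matching an outbound packet."""
    for entry in spd:
        if ip_address(dst) not in ip_network(entry.remote):
            continue
        if entry.protocol is not None and entry.protocol != protocol:
            continue
        if entry.remote_port is not None and entry.remote_port != dst_port:
            continue
        return entry.action
    return "DISCARD"  # assumed default: drop traffic no policy covers

spd = [
    SpdEntry("10.1.0.0/16", None, None, "PROTECT"),  # hypothetical site-to-site VPN
    SpdEntry("0.0.0.0/0", 17, 53, "BYPASS"),          # DNS sent in the clear
]
print(spd_lookup(spd, "10.1.2.3", 6, 443))  # PROTECT
print(spd_lookup(spd, "8.8.8.8", 17, 53))   # BYPASS
```

Entry order matters because the first match wins. In a fuller implementation, a PROTECT result would lead on to the SAD to find (or trigger IKE to create) the SA that actually processes the packet.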

4.2.1. Internet Key Exchange (IKEv2) Protocol

The first step in using IPsec is to establish an SA. An SA is a simplex (one-direction) authenticated association established between two communicating parties, or between a sender and multiple receivers if IPsec is supporting multicast. Most frequently, communication is bidirectional between two parties, so a pair of SAs is required to use IPsec effectively.

A special protocol called the Internet Key Exchange (IKE) is used to accomplish this task automatically. The current version of the protocol is called IKEv2 [RFC5996]. We will refer to it simply as IKE.

To establish an SA, IKE begins with a simple request/response message pair that includes a request to establish the following parameters: an encryption algorithm, an integrity protection algorithm, a Diffie-Hellman group, and a PRF (pseudorandom function family) that produces random-appearing output for any input bit string. In IKE, a PRF is used for generation of session keys. IKE first establishes an SA for itself (called an IKE_SA) and can subsequently establish SAs for either AH or ESP (called CHILD_SAs). IKE is also capable of negotiating the use of IP Payload Compression (IPComp) [RFC3173] with each CHILD_SA, because applying compression at other layers after performing encryption is ineffective.
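A single PRF output is usually shorter than the total keying material IKE needs. IKEv2 therefore stretches the PRF with a construction called prf+, where T1 = prf(K, S | 0x01) and Tn = prf(K, Tn-1 | S | n), and the blocks are concatenated. The Python sketch below assumes HMAC-SHA-256 as the negotiated PRF. The function names are illustrative.

```python
import hashlib
import hmac

def prf(key: bytes, data: bytes) -> bytes:
    # HMAC-SHA-256 is assumed here as the negotiated PRF.
    return hmac.new(key, data, hashlib.sha256).digest()

def prf_plus(key: bytes, seed: bytes, length: int) -> bytes:
    """IKEv2 prf+: T1 = prf(K, S|0x01), Tn = prf(K, Tn-1|S|n), concatenated."""
    # The counter is a single octet, so at most 255 blocks can be produced.
    if length > 255 * hashlib.sha256().digest_size:
        raise ValueError("prf+ output is limited to 255 PRF blocks")
    out, t, counter = b"", b"", 1
    while len(out) < length:
        t = prf(key, t + seed + bytes([counter]))
        out += t
        counter += 1
    return out[:length]

# Carve two hypothetical 32-byte keys out of one keying-material stream.
keymat = prf_plus(b"example-key", b"example-seed", 64)
key_a, key_b = keymat[:32], keymat[32:]
```

Because each block feeds into the next, asking for more output never changes the earlier bytes. That is why one prf+ stream can be sliced into several independent keys.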

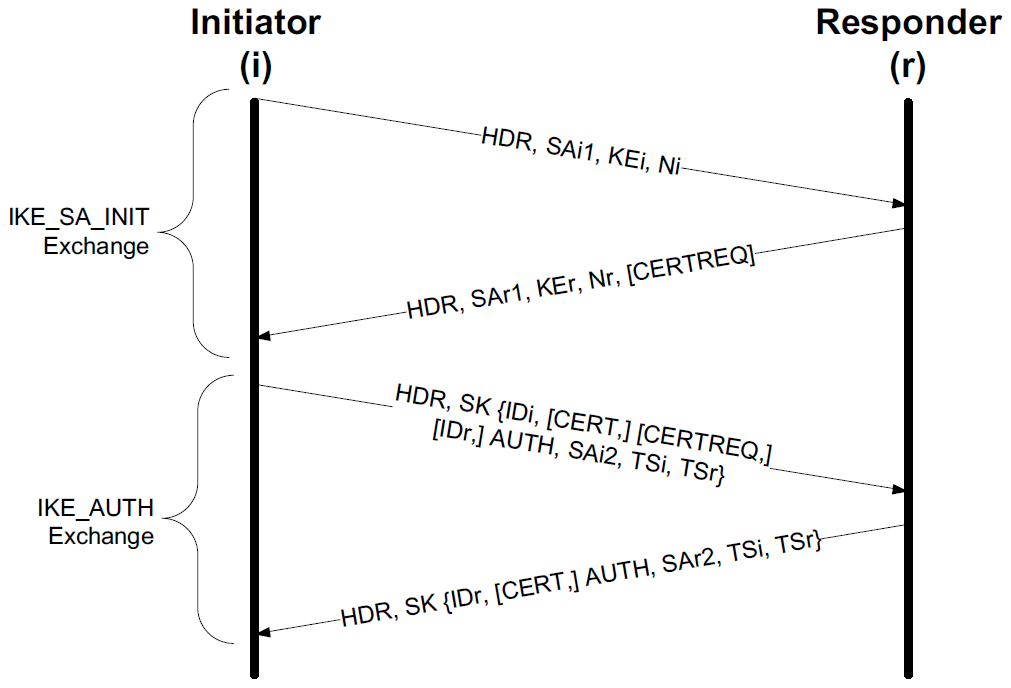

IKE operates using pairs of messages called exchanges that are sent between an initiator and a responder.

-

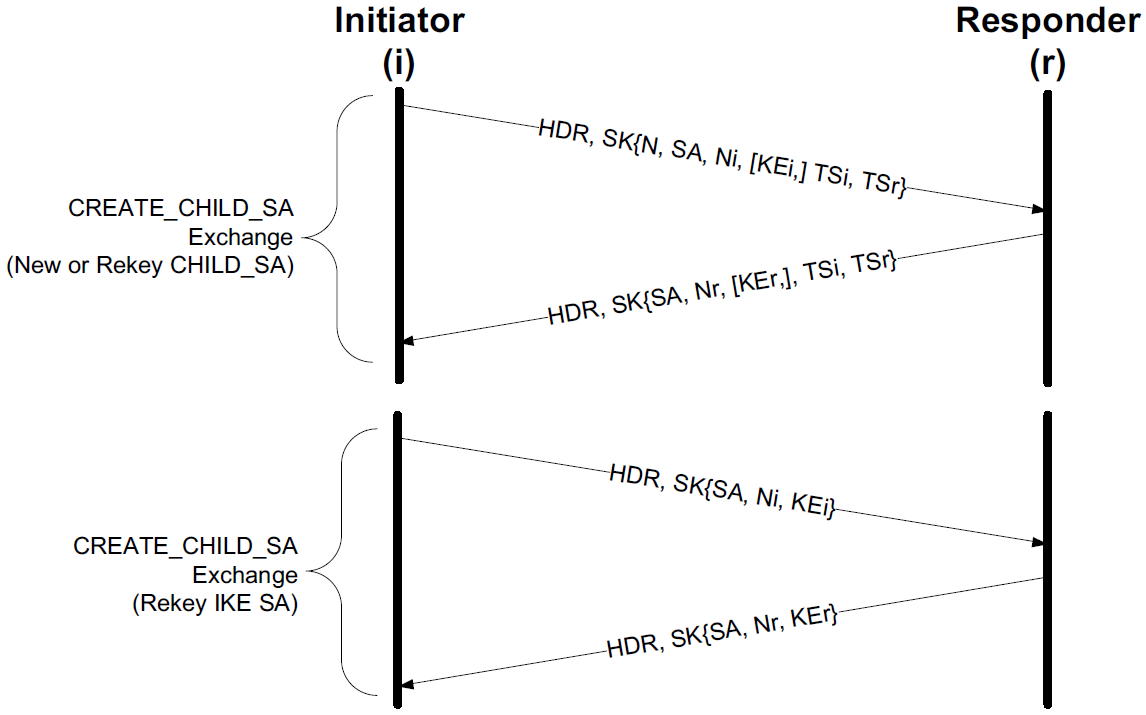

The first two exchanges, called IKE_SA_INIT and IKE_AUTH, establish an IKE_SA and a single CHILD_SA.

-

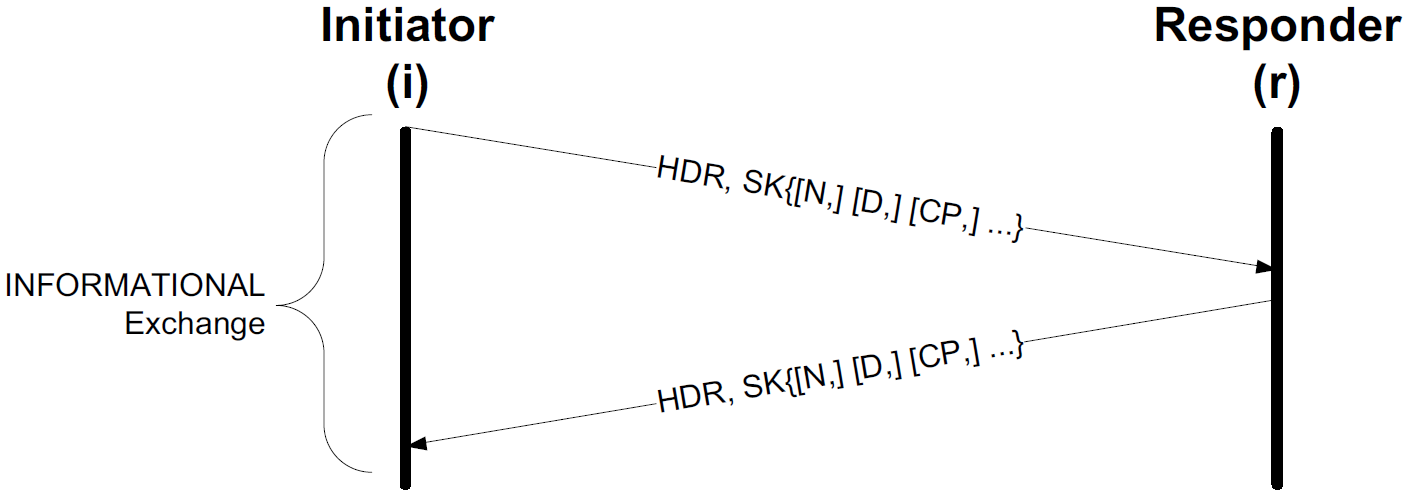

Subsequently, CREATE_CHILD_SA exchanges, used to establish additional CHILD_SAs, and INFORMATIONAL exchanges, used to initiate changes in or gather status information about an SA, may occur.

In most cases, a single IKE_SA_INIT exchange followed by a single IKE_AUTH exchange (four messages in total) is sufficient. Each message carries payloads, and each payload is identified by a type number indicating the kind of information it carries. Multiple payloads per message are common, and some long messages may require IP fragmentation.

IKE messages are sent encapsulated in UDP using port number 500 or 4500. However, because IKE traffic may pass through a NAT where the port number is rewritten, an IKE receiver should be prepared to receive traffic originating from any port. Port 4500 is reserved for UDP-encapsulated ESP and IKE [RFC3948]. IKE messages appearing on port 4500 are required to have their initial 4 data bytes set to 0 (the “non-ESP marker”) to differentiate them from other (i.e., ESP or WESP) messages.
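The non-ESP marker works because an ESP packet begins with its 32-bit SPI, and SPI value 0 is reserved and never sent on the wire. The Python sketch below shows how a receiver on port 4500 might classify an incoming UDP payload. It also recognizes the single-octet 0xFF NAT-keepalive defined for UDP encapsulation. The function name is hypothetical, and WESP handling is omitted.

```python
NON_ESP_MARKER = b"\x00\x00\x00\x00"

def classify_udp4500(payload: bytes) -> str:
    """Classify a UDP payload received on port 4500."""
    if payload == b"\xff":
        return "NAT-keepalive"      # single 0xFF octet keeps NAT bindings alive
    if payload[:4] == NON_ESP_MARKER:
        return "IKE"                # strip the 4-byte marker; the IKE header follows
    if len(payload) >= 8:
        return "ESP"                # nonzero SPI followed by a sequence number
    return "invalid"

print(classify_udp4500(b"\x00\x00\x00\x00" + bytes(28)))   # IKE
print(classify_udp4500(bytes.fromhex("0000100100000001")))  # ESP
```

Checking for the marker before anything else keeps the test cheap. A single 4-byte comparison decides whether to hand the datagram to the IKE daemon or to the ESP processing path.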

IKE initiators perform timer-based retransmissions when IKE messages appear to have been lost; responders retransmit only when triggered by an incoming request. Retransmissions use an exponentially increasing timer, but the total number of retransmissions is left unspecified. Both initiators and responders keep track of their last transmitted messages and the corresponding sequence numbers, which are used to match requests with responses and to identify message retransmissions. IKE is therefore a window-based protocol: the responder specifies a maximum window size, which is initialized when an SA is first set up but can be increased later, and this maximum limits the total number of outstanding requests.
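The division of retransmission duties can be sketched in a few lines of Python. This is a minimal illustration, assuming a window size of 1. The class name, the handler callback, and the timer values (0.5 s initial timeout, doubling, five attempts) are all hypothetical, since the text notes that the number of retransmissions is left unspecified.

```python
def retransmit_schedule(initial: float = 0.5, factor: float = 2.0, tries: int = 5):
    """Initiator side: timeout before each (re)transmission, growing exponentially."""
    return [initial * factor ** i for i in range(tries)]

class IkeResponder:
    """Responder side: retransmit only in reaction to a repeated request."""

    def __init__(self):
        self.expected_id = 0       # next request message ID not yet processed
        self.last_response = None  # (message_id, response bytes)

    def on_request(self, message_id: int, handler):
        if message_id == self.expected_id:
            response = handler()   # new request: process it exactly once
            self.last_response = (message_id, response)
            self.expected_id += 1
            return response
        if self.last_response and message_id == self.last_response[0]:
            return self.last_response[1]  # duplicate: resend cached response
        return None                       # old or unexpected ID: drop it

print(retransmit_schedule())  # [0.5, 1.0, 2.0, 4.0, 8.0]
```

Caching the last response, rather than reprocessing a duplicate request, keeps retransmitted requests from triggering a second key computation or state change.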

4.2.1.1. IKEv2 Message Formats

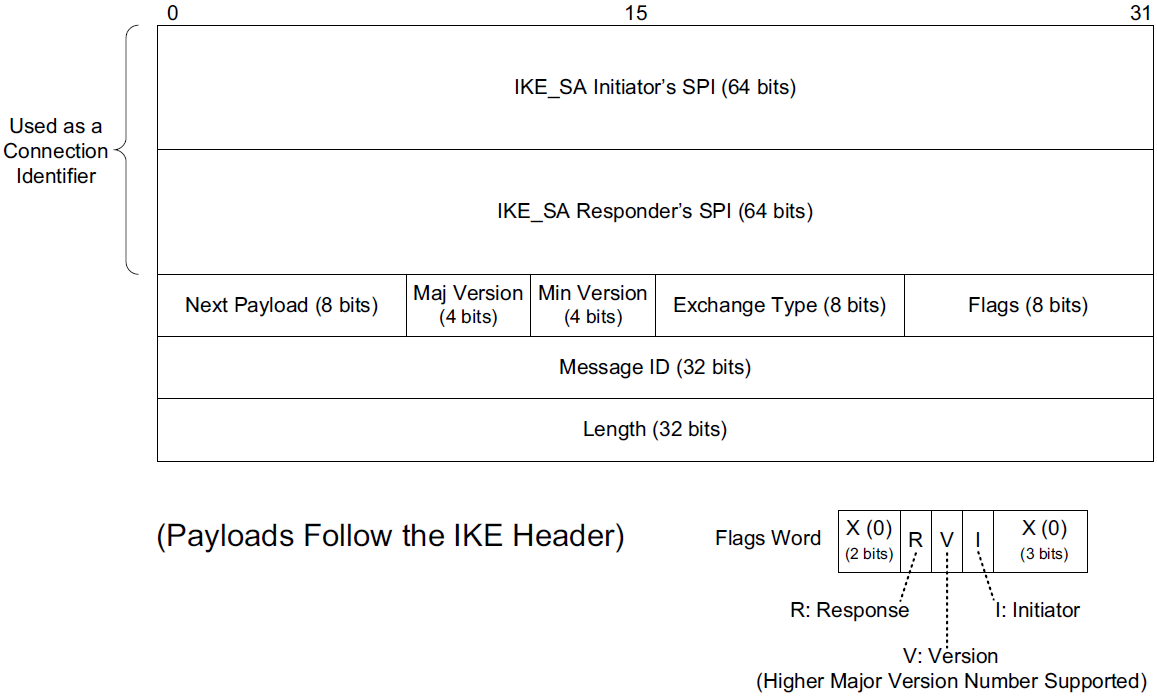

IKE messages contain a header followed by zero or more IKE payloads.

-

In the headers of IKE messages, the Security Parameter Index (SPI) is a 64-bit number that identifies a particular IKE_SA (other IPsec protocols use a 32-bit SPI value).

Both the initiator and the responder have an SA for their peer, so each provides the SPI it is using, and this pair of values, combined with the IP addresses of the endpoints, can be used to form an effective connection identifier.

-

The Major Version and Minor Version fields are set to 2 and 0, respectively, for this version of IKE.

The major version number is changed when interoperability cannot be maintained between versions.

-

The Exchange Type field gives the type of exchange of which the message is part: IKE_SA_INIT (34), IKE_AUTH (35), CREATE_CHILD_SA (36), INFORMATIONAL (37), and IKE_SESSION_RESUME (38; see [RFC5723]).

Other values are reserved; the range 240–255 is reserved for private use.

-

Three bit fields are defined for the Flags field (bits are labeled right to left, starting from 0): I (Initiator, bit 3), V (Version, bit 4), and R (Response, bit 5).

The I bit field is set in messages sent by the original initiator and cleared in messages sent by the original responder.

The V bit field indicates that the sender supports a higher major version number of the protocol than is currently being used.

The R bit field indicates that the message is a response to a previous message using the same message ID.

-

The Message ID field in IKE acts somewhat like the Sequence Number field in TCP, except the message ID starts with 0 for the initiator and 1 for the responder.

The field is incremented by 1 for each subsequent request (retransmissions reuse the original message ID), and responses use the same message ID as the requests they answer. The I and R bit fields differentiate requests from responses.

Each end remembers the message IDs it has sent or received, which allows it to perform replay detection: messages carrying old message IDs are not processed. Wrapping of the Message ID field (possible, but unlikely, since it requires about 4 billion IKE messages) is handled by reinitiating the IKE_SA_INIT exchange.

-

The other fields (Next Payload and Length) help describe what the IKE message contains.

Each message contains zero or more payloads, and each payload has its own particular structure. The Length field gives the size (in bytes) of the header plus all payloads in the message. The Next Payload field gives the type of the following payload. At present, 16 nontrivial types are defined (value 0 indicates no next payload).
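The fields above occupy a fixed 28-byte header: two 64-bit SPIs, then one byte each for Next Payload, the version nibbles, Exchange Type, and Flags, followed by the 32-bit Message ID and Length. The Python sketch below decodes that layout using the exchange-type values and the flag bits (I = 0x08, V = 0x10, R = 0x20) listed above. The dataclass and function names are hypothetical.

```python
import struct
from dataclasses import dataclass

IKE_HEADER = struct.Struct("!QQBBBBII")  # 28 bytes, network byte order
EXCHANGE_TYPES = {34: "IKE_SA_INIT", 35: "IKE_AUTH", 36: "CREATE_CHILD_SA",
                  37: "INFORMATIONAL", 38: "IKE_SESSION_RESUME"}
FLAG_I, FLAG_V, FLAG_R = 0x08, 0x10, 0x20  # bits 3, 4, 5 counted from the right

@dataclass
class IkeHeader:
    spi_i: int
    spi_r: int
    next_payload: int
    major: int
    minor: int
    exchange: str
    initiator: bool
    higher_version: bool
    response: bool
    message_id: int
    length: int

def parse_ike_header(msg: bytes) -> IkeHeader:
    if len(msg) < IKE_HEADER.size:
        raise ValueError("IKE header is 28 bytes")
    spi_i, spi_r, nxt, ver, xchg, flags, mid, length = IKE_HEADER.unpack_from(msg)
    if length != len(msg):
        raise ValueError("Length field does not match the message size")
    return IkeHeader(spi_i, spi_r, nxt, ver >> 4, ver & 0x0F,
                     EXCHANGE_TYPES.get(xchg, f"unknown({xchg})"),
                     bool(flags & FLAG_I), bool(flags & FLAG_V),
                     bool(flags & FLAG_R), mid, length)

# A bare IKE_SA_INIT request header: responder SPI still 0, first payload SA (33).
raw = IKE_HEADER.pack(0x1122334455667788, 0, 33, 0x20, 34, FLAG_I, 0, 28)
hdr = parse_ike_header(raw)
print(hdr.exchange, hdr.major, hdr.minor, hdr.initiator, hdr.response)
```

The pair (`spi_i`, `spi_r`), together with the endpoint addresses, is what the text describes as an effective connection identifier.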

Table 4. IKEv2 payload types. A value of 0 indicates no next payload. The ranges 1–32 and 49–255 are reserved; the range 128–255 is reserved for private use. Each IKE payload begins with an IKE generic payload header.

| Value | Notation | Purpose |
|---|---|---|
| 33 | SA | Security association |
| 34 | KE | Key exchange |
| 35 | IDi | Identification (initiator) |
| 36 | IDr | Identification (responder) |
| 37 | CERT | Certificate |
| 38 | CERTREQ | Certificate request (indicates trust anchors) |
| 39 | AUTH | Authentication |
| 40 | Ni, Nr | Nonces (initiator, responder) |
| 41 | N | Notify |
| 42 | D | Delete |
| 43 | V | Vendor ID |
| 44 | TSi | Traffic selector (initiator) |
| 45 | TSr | Traffic selector (responder) |
| 46 | SK { } | Encrypted and authenticated (contains other payloads) |
| 47 | CP | Configuration |
| 48 | EAP | Extensible authentication (EAP) |

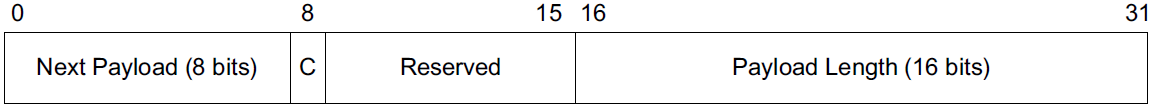

Figure 19. A “generic” IKEv2 payload header. Each payload begins with a header of this form.

-

The generic payload header is fixed at 32 bits, and the Next Payload and Payload Length fields provide for a “chain” of variable-size payloads (up to 65,535 bytes each, including the 4-byte payload header) to be present in a single IKE message. Each payload type has its own set of special headers.

-

The C (critical) bit field indicates that the current payload (not the one identified by the Next Payload field) is deemed “critical” for a successful IKE exchange.

Receivers of critical payloads that do not understand the type code (provided in the previous payload’s Next Payload field or in the IKE header’s Next Payload field) must abort the IKE exchange.
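Following the chain is a simple loop: start with the type named in the IKE header, read each 4-byte generic header (Next Payload, a byte whose high bit is C, and a 16-bit Payload Length), and move on by Payload Length bytes until the next type is 0. The Python sketch below does this and aborts on an unrecognized critical payload, as described above. It also stops at the SK payload, assuming (per the IKEv2 specification) that SK is always last and that its Next Payload field names the first payload hidden inside the encrypted data. The function name is hypothetical.

```python
import struct

GENERIC_HDR = struct.Struct("!BBH")  # Next Payload, C bit + reserved, Payload Length
KNOWN_TYPES = set(range(33, 49))     # the 16 payload types of Table 4
SK = 46

def walk_payloads(first_type: int, data: bytes):
    """Yield (type, critical, body) for each payload following the IKE header.

    first_type is the IKE header's Next Payload value; data is everything
    after the 28-byte IKE header.
    """
    ptype, offset = first_type, 0
    while ptype != 0:
        if offset + GENERIC_HDR.size > len(data):
            raise ValueError("truncated generic payload header")
        nxt, flags, plen = GENERIC_HDR.unpack_from(data, offset)
        if plen < GENERIC_HDR.size or offset + plen > len(data):
            raise ValueError("bad Payload Length")
        critical = bool(flags & 0x80)
        if ptype not in KNOWN_TYPES and critical:
            # Unknown critical payload: the whole IKE exchange must be aborted.
            raise ValueError(f"unsupported critical payload {ptype}")
        # Unknown non-critical payloads are still yielded; callers may skip them.
        yield ptype, critical, data[offset + GENERIC_HDR.size:offset + plen]
        if ptype == SK:
            break  # SK is last; its Next Payload describes the encrypted contents
        ptype, offset = nxt, offset + plen

# SA -> KE -> Nonce, with placeholder bodies.
chain = (GENERIC_HDR.pack(34, 0, 6) + b"sa" +
         GENERIC_HDR.pack(40, 0, 6) + b"ke" +
         GENERIC_HDR.pack(0, 0, 9) + b"nonce")
for ptype, critical, body in walk_payloads(33, chain):
    print(ptype, critical, body)
```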


4.2.1.2. The IKE_SA_INIT Exchange

IKE_SA_INIT is the first of the two exchanges, IKE_SA_INIT and IKE_AUTH, that constitute the “initial exchanges” of IKE, known as Phase 1 in earlier versions of IKE. Other exchanges (CREATE_CHILD_SA and INFORMATIONAL) may be initiated by either party only after the initial exchanges have completed, and they are always secured (encrypted and integrity-protected) based on the parameters established using the first two exchanges.

As shown, IKE_SA_INIT negotiates the choice of cryptographic suite, exchanges nonces, and performs a DH key agreement. It may also include additional information, depending on the particular implementation and deployment scenario.
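Once IKE_SA_INIT completes, each side holds the peer's DH public value (from the KE payload) and both nonces. IKEv2 then derives a root secret as SKEYSEED = prf(Ni | Nr, g^ir), with g^ir encoded big-endian and padded to the length of the modulus. The Python sketch below walks through that computation. It uses a toy group (the Mersenne prime 2^127 - 1 with generator 3) that is far too small for real use and stands in for the standardized groups IKE actually negotiates. HMAC-SHA-256 is assumed as the PRF, and the helper names are hypothetical.

```python
import hashlib
import hmac
import secrets

# Toy DH parameters for illustration only; real IKE uses standardized groups.
P = 2**127 - 1
G = 3

def dh_keypair():
    x = secrets.randbelow(P - 2) + 1  # private exponent in [1, P-2]
    return x, pow(G, x, P)            # (private value, public KE payload value)

def skeyseed(ni: bytes, nr: bytes, g_ir: int) -> bytes:
    """SKEYSEED = prf(Ni | Nr, g^ir), with HMAC-SHA-256 assumed as the PRF."""
    shared = g_ir.to_bytes((P.bit_length() + 7) // 8, "big")  # pad to modulus size
    return hmac.new(ni + nr, shared, hashlib.sha256).digest()

# Each side generates a DH key pair and a nonce (carried in KE and Ni/Nr).
xi, gi = dh_keypair()
xr, gr = dh_keypair()
ni, nr = secrets.token_bytes(32), secrets.token_bytes(32)

# Each side combines its own private value with the peer's public value.
seed_i = skeyseed(ni, nr, pow(gr, xi, P))
seed_r = skeyseed(ni, nr, pow(gi, xr, P))
assert seed_i == seed_r  # both ends now share the same SKEYSEED
```

Mixing the nonces into the key means a fresh SKEYSEED results even if a DH value were reused. In IKEv2 the individual session keys are then expanded from SKEYSEED with prf+.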

-

It begins when the initiator sends an IKE message containing its set of supported cryptographic suites, DH information, and nonce using three payloads (SA, KE, and Ni).

-

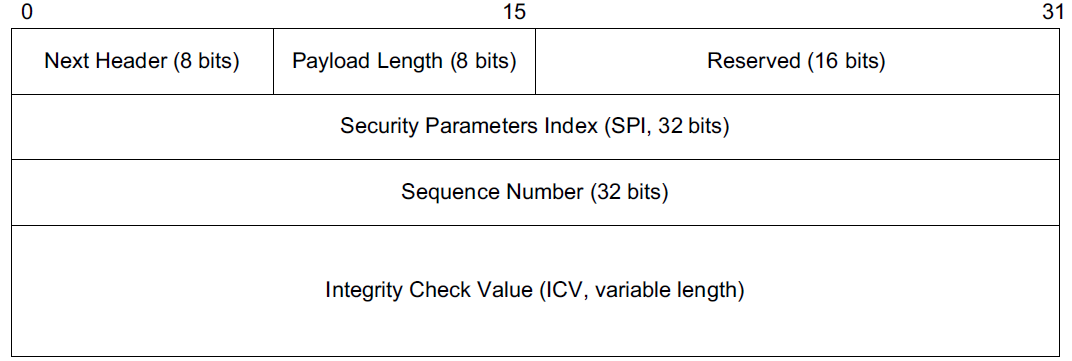

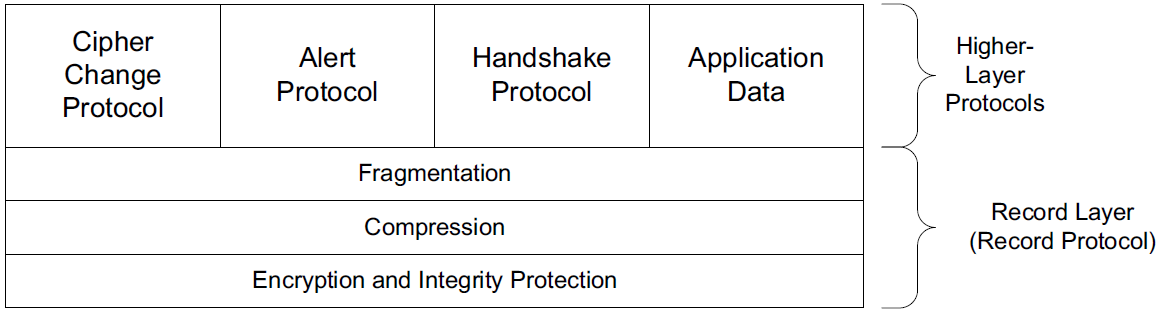

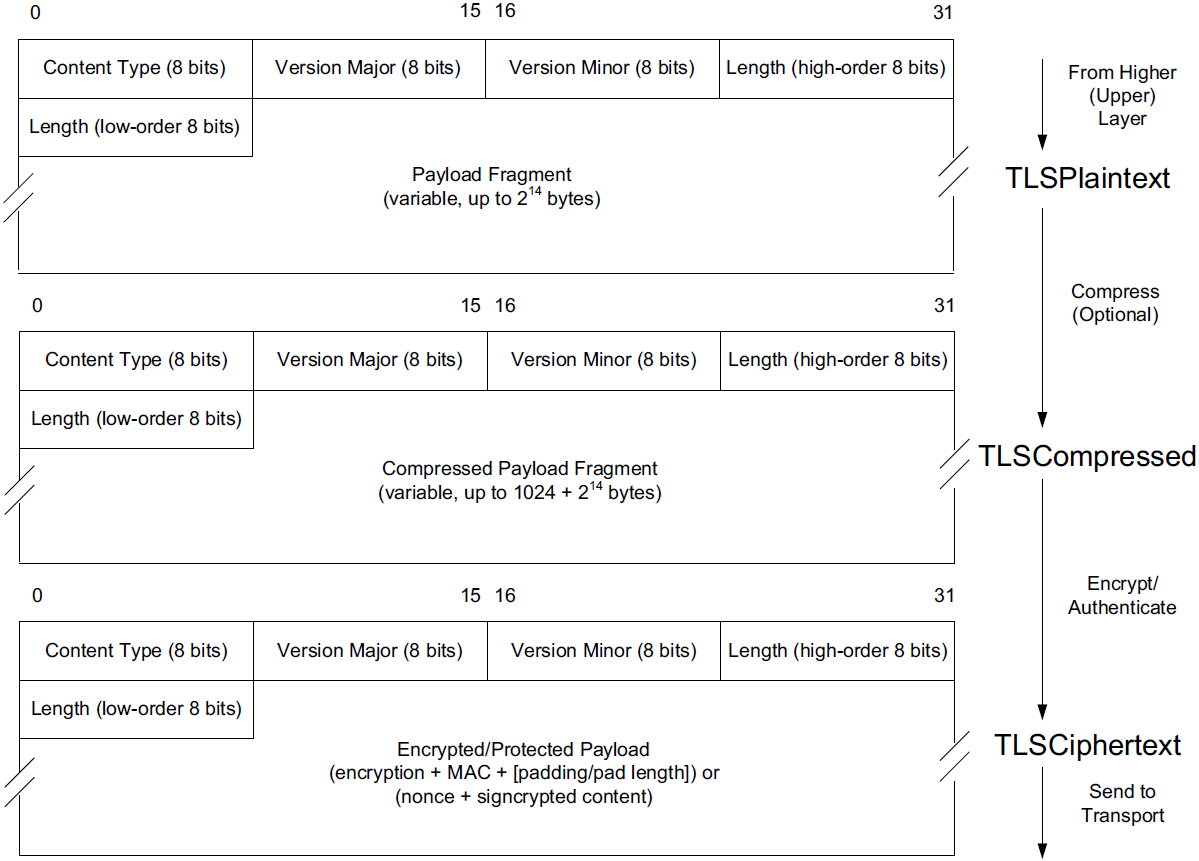

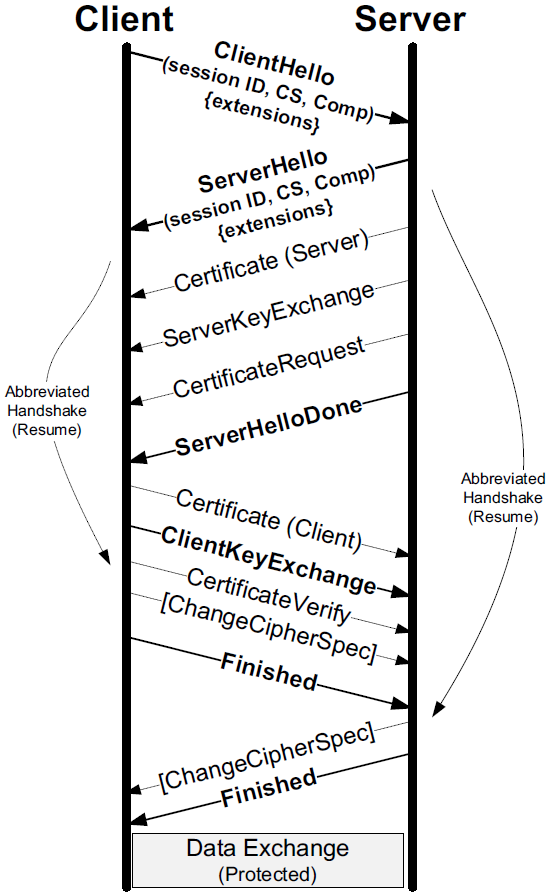

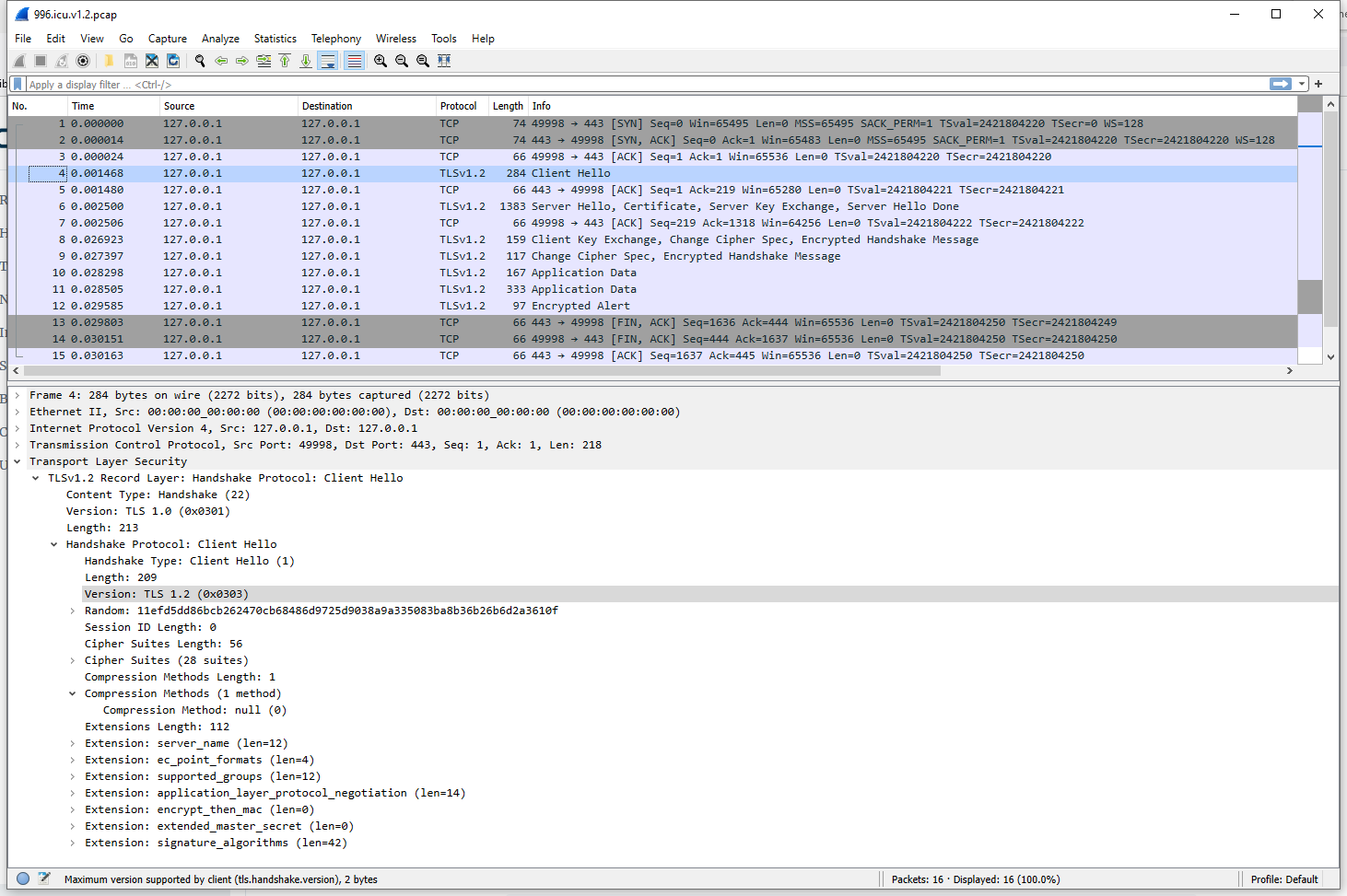

Upon receiving the first message, the responder learns that the initiator is requesting an IKE transaction, which cryptographic suites the initiator supports, and the initiator's configuration parameters.