Parallel programming in .NET

"Concurrency is about dealing with lots of things at once. Parallelism is about doing lots of things at once." — Rob Pike

- 1. Threads and threading

- 1.1. Processes and threads

- 1.2. How to use multithreading in .NET

- 1.3. Using threads and threading

- 1.4. Cancellation in Managed Threads

- 1.5. Foreground and background threads

- 1.6. The managed thread pool

- 1.7. Overview of synchronization primitives

- 1.8. Thread-safe collections

- 1.9. Windows Presentation Foundation (WPF): Threading model

- 1.10. The volatile keyword

- 2. Asynchronous programming patterns

- 3. Lazy Initialization

- 4. Parallel programming in .NET

- 5. Asynchronous programming with async and await

- Appendix A: FAQ

- A.1. What happens on Thread.Sleep(0) in .NET?

- A.2. What are the worker and completion port threads?

- A.3. How does .NET identify I/O-bound or compute-bound operations?

- A.4. How does CLR manage the number of threads (worker and I/O threads) in the ThreadPool?

- A.5. What’s the algorithm of the thread pool in .NET?

- A.6. What if Interlocked.Increment a 64-bit integer on a 32-bit hardware?

- A.7. How does .NET make the multiple CPU instructions as an atomic?

- A.8. I heard there are some risk on atomic operations in Go or sth else?

- A.9. What’s ABA problems?

- A.10. How to understand 'hardware, compilers, and the language memory model'?

- A.11. Anyway, for a single operation like Interlocked.Increment, it will always ensure it as an atomic?

- A.12. How to understand the volatile keyword in .NET?

- A.13. What’s the diff of volatile keyword and Volatile class?

- A.14. It seems we should avoid to use the volatile keyword?

- A.15. What’s the diff of asynchronous and parallel programming in .NET?

- A.16. What’s the control meaning in async and await programming?

- A.17. How to understand "Async methods don’t require multithreading because an async method doesn’t run on its own thread."?

- A.18. Can the async/await improve the responsiveness on ASP.NET Core?

- A.19. Is there a SynchronizationContext on ASP.NET Core?

- A.20. What’s the diff of AsOrdered and AsUnordered in PLINQ?

- References

1. Threads and threading

Multithreading allows you to increase the responsiveness of your application and, if your application runs on a multiprocessor or multi-core system, increase its throughput. [1]

1.1. Processes and threads

A process is an executing program. An operating system uses processes to separate the applications that are being executed.

A thread is the basic unit to which an operating system allocates processor time. Each thread has a scheduling priority and maintains a set of structures the system uses to save the thread context when the thread’s execution is paused.

The thread context includes all the information the thread needs to seamlessly resume execution, including the thread’s set of CPU registers and stack. Multiple threads can run in the context of a process. All threads of a process share its virtual address space. A thread can execute any part of the program code, including parts currently being executed by another thread.

Note: .NET Framework provides a way to isolate applications within a process with the use of application domains. (Application domains are not available on .NET Core.)

By default, a .NET program is started with a single thread, often called the primary thread. However, it can create additional threads to execute code in parallel or concurrently with the primary thread. These threads are often called worker threads.
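A minimal sketch of the primary/worker split, assuming a .NET 6+ console app with top-level statements (the variable names are illustrative). The primary thread starts one worker thread. Both threads read and write the same variables because they share the process's address space, and `Join` makes the primary thread wait for the worker to finish.

```csharp
using System;
using System.Threading;

// The thread running the top-level statements is the primary thread.
int primaryId = Environment.CurrentManagedThreadId;
int workerId = 0;
string sharedMessage = "not set";

// The worker writes to variables that the primary thread also sees,
// because all threads of a process share its virtual address space.
var worker = new Thread(() =>
{
    workerId = Environment.CurrentManagedThreadId;
    sharedMessage = "written by the worker thread";
});
worker.Start();

// Join blocks the primary thread until the worker terminates,
// so the worker's writes are visible afterwards.
worker.Join();

Console.WriteLine($"primary={primaryId}, worker={workerId}, message='{sharedMessage}'");
```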

1.2. How to use multithreading in .NET

Starting with .NET Framework 4, the recommended way to use multithreading is the Task Parallel Library (TPL) and Parallel LINQ (PLINQ).

Both TPL and PLINQ rely on thread pool threads. The System.Threading.ThreadPool class provides a .NET application with a pool of worker threads, and you can also queue work to those thread pool threads directly.

Finally, you can use the System.Threading.Thread class, which represents a managed thread.
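The three options can be sketched side by side as follows, assuming a .NET 6+ console app with top-level statements. The summing workload is only a placeholder: TPL and PLINQ schedule the work on thread pool threads for you, `QueueUserWorkItem` hands a single work item to the pool, and `new Thread` gives you a dedicated thread.

```csharp
using System;
using System.Linq;
using System.Threading;
using System.Threading.Tasks;

// 1a. TPL: Parallel.For partitions the range across thread pool threads.
long tplSum = 0;
Parallel.For(1, 101, i => Interlocked.Add(ref tplSum, i));

// 1b. PLINQ: AsParallel() runs the query on thread pool threads.
int plinqSum = Enumerable.Range(1, 100).AsParallel().Sum();

// 2. Thread pool: queue one work item directly.
using var done = new ManualResetEventSlim(false);
bool ranOnPool = false;
ThreadPool.QueueUserWorkItem(_ =>
{
    ranOnPool = Thread.CurrentThread.IsThreadPoolThread;
    done.Set();
});
done.Wait();

// 3. Dedicated thread: you control its whole lifetime.
var thread = new Thread(() => Console.WriteLine("Hello from a dedicated thread"));
thread.Start();
thread.Join();

Console.WriteLine($"TPL sum={tplSum}, PLINQ sum={plinqSum}, ran on pool thread={ranOnPool}");
```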

1.3. Using threads and threading

With .NET, you can write applications that perform multiple operations at the same time. Operations with the potential of holding up other operations can execute on separate threads, a process known as multithreading or free threading. [2]

Applications that use multithreading are more responsive to user input because the user interface stays active as processor-intensive tasks execute on separate threads. Multithreading is also useful when you create scalable applications because you can add threads as the workload increases.

1.3.1. Create and start a new thread

You create a new thread by creating a new instance of the System.Threading.Thread class. You provide the name of the method that you want to execute on the new thread to the constructor. To start a created thread, call the Thread.Start method.

```csharp
new Thread(() => Console.WriteLine("Hello Thread")).Start();
```

1.3.2. Stop a thread

To terminate the execution of a thread, use the System.Threading.CancellationToken. It provides a unified way to stop threads cooperatively.

Sometimes it’s not possible to stop a thread cooperatively because it runs third-party code not designed for cooperative cancellation. In this case, you might want to terminate its execution forcibly. To terminate the execution of a thread forcibly, in .NET Framework you can use the Thread.Abort method. That method raises a ThreadAbortException on the thread on which it’s invoked.

The Thread.Abort method isn't supported in .NET Core or .NET 5 and later. If you need to terminate the execution of third-party code forcibly there, run it in a separate process and use the Process.Kill method.

The System.Threading.CancellationToken isn't available before .NET Framework 4. To stop a thread in older .NET Framework versions, use thread synchronization techniques to implement cooperative cancellation manually. For example, you can create a volatile boolean field shouldStop and use it to request that the code executed by the thread stop.

Use the Thread.Join method to make the calling thread wait for the termination of the thread being stopped.
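Stopping a thread cooperatively and then waiting for it with `Join` can be sketched like this, assuming a .NET 6+ console app; the 10 ms and 100 ms delays are arbitrary stand-ins for real work. The worker decides for itself when to exit, and `Join` guarantees the thread has really terminated before the caller continues.

```csharp
using System;
using System.Threading;

using var cts = new CancellationTokenSource();
CancellationToken token = cts.Token;
int iterations = 0;

var worker = new Thread(() =>
{
    // Cooperative loop: the worker polls the token and exits on its own.
    while (!token.IsCancellationRequested)
    {
        iterations++;
        Thread.Sleep(10); // simulate one unit of work
    }
    Console.WriteLine("Worker observed the cancellation request and is exiting.");
});

worker.Start();
Thread.Sleep(100);

cts.Cancel();   // request the stop
worker.Join();  // wait until the worker has actually terminated

Console.WriteLine($"Worker alive after Join: {worker.IsAlive}, iterations: {iterations}");
```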

1.3.3. Pause or interrupt a thread

You use the Thread.Sleep method to pause the current thread for a specified amount of time. You can interrupt a blocked thread by calling the Thread.Interrupt method.

Calling the Thread.Sleep method causes the current thread to immediately block for the number of milliseconds or the time interval you pass to the method, and yields the remainder of its time slice to another thread. Once that interval elapses, the sleeping thread resumes execution. [4]

One thread cannot call Thread.Sleep on another thread. Thread.Sleep is a static method that always causes the current thread to sleep.

Calling Thread.Sleep with a value of Timeout.Infinite causes a thread to sleep until it is interrupted by another thread that calls the Thread.Interrupt method on the sleeping thread, or, on .NET Framework only, until it is terminated by a call to its Thread.Abort method.

You can interrupt a waiting thread by calling the Thread.Interrupt method on the blocked thread to throw a ThreadInterruptedException, which breaks the thread out of the blocking call. The thread should catch the ThreadInterruptedException and do whatever is appropriate to continue working. If the thread ignores the exception, the runtime catches the exception and stops the thread.


If the target thread is not blocked when Thread.Interrupt is called, the thread is not interrupted until it blocks. If the thread never blocks, it could complete without ever being interrupted.
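The "pending until it blocks" behavior can be observed with a sketch like the following, assuming a .NET 6+ console app; the flag names are illustrative. The worker is spinning (not blocked) when `Interrupt` is called, so nothing happens until its first blocking call, `Thread.Sleep`, which then throws `ThreadInterruptedException` immediately.

```csharp
using System;
using System.Threading;

bool workerRunning = false;
bool interruptSent = false;
bool caught = false;

var worker = new Thread(() =>
{
    Volatile.Write(ref workerRunning, true);

    // Busy, non-blocking phase: spin until Interrupt has been called.
    // The interrupt stays pending because this thread is not blocked.
    while (!Volatile.Read(ref interruptSent))
    {
        Thread.SpinWait(100);
    }

    try
    {
        // The first blocking call delivers the pending interrupt.
        Thread.Sleep(Timeout.Infinite);
    }
    catch (ThreadInterruptedException)
    {
        caught = true;
    }
});

worker.Start();
while (!Volatile.Read(ref workerRunning)) Thread.SpinWait(100);

worker.Interrupt();                      // the worker is spinning, not blocked
Volatile.Write(ref interruptSent, true); // let it proceed to Thread.Sleep

worker.Join();
Console.WriteLine($"Pending interrupt delivered on first block: {caught}");
```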


If a wait is a managed wait, then Thread.Interrupt and Thread.Abort both wake the thread immediately. If a wait is an unmanaged wait (for example, a platform invoke call to the Win32 WaitForSingleObject function), neither Thread.Interrupt nor Thread.Abort can take control of the thread until it returns to or calls into managed code. In managed code, the behavior is as follows:

- Thread.Interrupt wakes a thread out of any wait it might be in and causes a ThreadInterruptedException to be thrown in the destination thread.
- .NET Framework only: Thread.Abort wakes a thread out of any wait it might be in and causes a ThreadAbortException to be thrown on the thread.

```csharp
using System;
using System.Threading;

Thread sleepingThread = new Thread(() =>
{
    Console.WriteLine("Thread '{0}' about to sleep indefinitely.", Thread.CurrentThread.Name);
    try
    {
        Thread.Sleep(Timeout.Infinite);
    }
    catch (ThreadInterruptedException)
    {
        Console.WriteLine("Thread '{0}' awoken.", Thread.CurrentThread.Name);
    }
    finally
    {
        Console.WriteLine("Thread '{0}' executing finally block.", Thread.CurrentThread.Name);
    }
    Console.WriteLine("Thread '{0}' finishing normal execution.", Thread.CurrentThread.Name);
});

sleepingThread.Name = "Sleeping";
sleepingThread.Start();

// Give the thread time to block in Thread.Sleep, then wake it.
Thread.Sleep(2000);
sleepingThread.Interrupt();

// Thread 'Sleeping' about to sleep indefinitely.
// Thread 'Sleeping' awoken.
// Thread 'Sleeping' executing finally block.
// Thread 'Sleeping' finishing normal execution.
```

1.4. Cancellation in Managed Threads

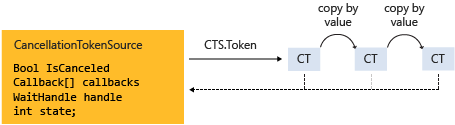

Starting with .NET Framework 4, .NET uses a unified model for cooperative cancellation of asynchronous or long-running synchronous operations. This model is based on a lightweight object called a cancellation token. The object that invokes one or more cancelable operations, for example by creating new threads or tasks, passes the token to each operation. Individual operations can in turn pass copies of the token to other operations. At some later time, the object that created the token can use it to request that the operations stop what they are doing. Only the requesting object can issue the cancellation request, and each listener is responsible for noticing the request and responding to it in an appropriate and timely manner. [3]

The general pattern for implementing the cooperative cancellation model is:

- Instantiate a CancellationTokenSource object, which manages and sends cancellation notification to the individual cancellation tokens.
- Pass the token returned by the CancellationTokenSource.Token property to each task or thread that listens for cancellation.
- Provide a mechanism for each task or thread to respond to cancellation.
- Call the CancellationTokenSource.Cancel method to provide notification of cancellation.

```csharp
using System;
using System.Threading;

// Create the token source.
CancellationTokenSource cts = new CancellationTokenSource();

// Pass the token to the cancelable operation.
ThreadPool.QueueUserWorkItem(obj =>
{
    if (obj is CancellationToken token)
    {
        for (int i = 0; i < 100000; i++)
        {
            if (token.IsCancellationRequested)
            {
                Console.WriteLine("In iteration {0}, cancellation has been requested...", i + 1);
                // Perform cleanup if necessary.
                //...
                // Terminate the operation.
                break;
            }
            // Simulate some work.
            Thread.SpinWait(500000);
        }
    }
}, cts.Token);

Thread.Sleep(2500);

// Request cancellation.
cts.Cancel();
Console.WriteLine("Cancellation set in token source...");
Thread.Sleep(2500);

// Cancellation should have happened, so call Dispose.
cts.Dispose();

// The example displays output like the following:
//    Cancellation set in token source...
//    In iteration 1430, cancellation has been requested...
```

The CancellationTokenSource class implements the IDisposable interface. You should be sure to call the CancellationTokenSource.Dispose method when you have finished using the cancellation token source to free any unmanaged resources it holds.


The illustration at the beginning of this section shows the relationship between a token source and all the copies of its token.

The cooperative cancellation model makes it easier to create cancellation-aware applications and libraries, and it supports the following features:

- Cancellation is cooperative and is not forced on the listener. The listener determines how to gracefully terminate in response to a cancellation request.
- Requesting is distinct from listening. An object that invokes a cancelable operation can control when (if ever) cancellation is requested.
- The requesting object issues the cancellation request to all copies of the token by using just one method call.
- A listener can listen to multiple tokens simultaneously by joining them into one linked token.
- User code can notice and respond to cancellation requests from library code, and library code can notice and respond to cancellation requests from user code.
- Listeners can be notified of cancellation requests by polling, callback registration, or waiting on wait handles.
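Linked tokens and the three notification styles listed above can be sketched together, assuming a .NET 6+ console app. The two source names (`userCts`, `timeoutCts`) are hypothetical: canceling either one cancels the linked token, which is then observed by polling, by a registered callback, and through its wait handle.

```csharp
using System;
using System.Threading;

using var userCts = new CancellationTokenSource();
using var timeoutCts = new CancellationTokenSource();

// The linked source is canceled as soon as ANY of its source tokens is canceled.
using var linkedCts = CancellationTokenSource.CreateLinkedTokenSource(userCts.Token, timeoutCts.Token);

// Callback registration: Cancel() runs registered callbacks synchronously.
bool callbackRan = false;
using CancellationTokenRegistration registration =
    linkedCts.Token.Register(() => callbackRan = true);

// Polling before cancellation.
Console.WriteLine($"Before: linked canceled = {linkedCts.Token.IsCancellationRequested}");

userCts.Cancel();

// Wait handle: it becomes signaled once cancellation is requested.
bool signaled = linkedCts.Token.WaitHandle.WaitOne(TimeSpan.Zero);

Console.WriteLine($"After: linked={linkedCts.IsCancellationRequested}, callback={callbackRan}, waitHandle={signaled}");
```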

In more complex cases, it might be necessary for the user delegate to notify library code that cancellation has occurred. In such cases, the correct way to terminate the operation is for the delegate to call the ThrowIfCancellationRequested method, which will cause an OperationCanceledException to be thrown. Library code can catch this exception on the user delegate thread and examine the exception's token to determine whether the exception indicates cooperative cancellation or some other exceptional situation.

The System.Threading.Tasks.Task and System.Threading.Tasks.Task<TResult> classes support cancellation by using cancellation tokens. You can terminate the operation by using one of these options:

- By returning from the delegate. In many scenarios, this option is sufficient. However, a task instance that's canceled in this way transitions to the TaskStatus.RanToCompletion state, not to the TaskStatus.Canceled state.
- By throwing an OperationCanceledException and passing it the token on which cancellation was requested. The preferred way to do this is to use the ThrowIfCancellationRequested method. A task that's canceled in this way transitions to the Canceled state, which the calling code can use to verify that the task responded to its cancellation request.

When a task instance observes an OperationCanceledException thrown by the user code, it compares the exception's token to its associated token (the one that was passed to the API that created the Task). If the tokens are the same and the token's IsCancellationRequested property returns true, the task interprets this as acknowledging cancellation and transitions to the Canceled state. If you don't use a Wait or WaitAll method to wait for the task, then the task just sets its status to Canceled.

If you’re waiting on a Task that transitions to the Canceled state, a System.Threading.Tasks.TaskCanceledException exception (wrapped in an AggregateException exception) is thrown. This exception indicates successful cancellation instead of a faulty situation. Therefore, the task’s Exception property returns null.

```csharp
public class TaskCanceledException : OperationCanceledException
```

If the token's IsCancellationRequested property returns false or if the exception's token doesn't match the Task's token, the OperationCanceledException is treated like a normal exception, causing the Task to transition to the Faulted state. The presence of other exceptions will also cause the Task to transition to the Faulted state. You can get the status of the completed task in the Status property.
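The second option (throwing via ThrowIfCancellationRequested with the same token that was passed to the task) can be sketched as follows, assuming a .NET 6+ console app; the sleep durations are arbitrary. Waiting on the canceled task throws an AggregateException wrapping a TaskCanceledException, while the task itself ends in the Canceled state with a null Exception property.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

using var cts = new CancellationTokenSource();
CancellationToken token = cts.Token;

// The same token is passed to Task.Run and observed inside the delegate.
Task task = Task.Run(() =>
{
    for (int i = 0; i < 1000; i++)
    {
        // Throws an OperationCanceledException carrying `token` once cancellation is requested.
        token.ThrowIfCancellationRequested();
        Thread.Sleep(10); // simulate work
    }
}, token);

Thread.Sleep(50);
cts.Cancel();

try
{
    task.Wait();
}
catch (AggregateException ae) when (ae.InnerException is TaskCanceledException)
{
    Console.WriteLine("Wait threw an AggregateException wrapping TaskCanceledException.");
}

Console.WriteLine($"Status: {task.Status}, Exception is null: {task.Exception is null}");
```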

It’s possible that a task might continue to process some items after cancellation is requested.


Please note that if you use |

1.5. Foreground and background threads

A managed thread is either a background thread or a foreground thread. Background threads are identical to foreground threads with one exception: a background thread does not keep the managed execution environment running. Once all foreground threads have been stopped in a managed process (where the .exe file is a managed assembly), the system stops all background threads and shuts down.

Use the Thread.IsBackground property to determine whether a thread is a background or a foreground thread, or to change its status. A thread can be changed to a background thread at any time by setting its IsBackground property to true.

Threads that belong to the managed thread pool (that is, threads whose IsThreadPoolThread property is true) are background threads. All threads that enter the managed execution environment from unmanaged code are marked as background threads. All threads generated by creating and starting a new Thread object are by default foreground threads.

If you use a thread to monitor an activity, such as a socket connection, set its IsBackground property to true so that the thread does not prevent your process from terminating.
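The default foreground/background status can be checked with a short sketch, assuming a .NET 6+ console app; `monitor` is a hypothetical long-lived watcher thread. A thread created with `new Thread` starts out as a foreground thread, can be switched to background before it starts, and thread pool threads always report `IsBackground == true`.

```csharp
using System;
using System.Threading;

// A thread created with `new Thread` is a foreground thread by default.
var monitor = new Thread(() => Thread.Sleep(Timeout.Infinite));
bool defaultIsBackground = monitor.IsBackground;

// Mark it as background so it cannot keep the process alive.
monitor.IsBackground = true;
monitor.Start();

// Thread pool threads are background threads.
bool poolIsBackground = false;
using var done = new ManualResetEventSlim(false);
ThreadPool.QueueUserWorkItem(_ =>
{
    poolIsBackground = Thread.CurrentThread.IsBackground;
    done.Set();
});
done.Wait();

Console.WriteLine($"new Thread default background: {defaultIsBackground}, pool thread background: {poolIsBackground}");
// The sleeping background thread does not prevent the process from exiting here.
```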

* In .NET, even though you can technically change the The In the code you provided, you’re attempting to change the Always remember that |

1.6. The managed thread pool

The System.Threading.ThreadPool class provides your application with a pool of worker threads that are managed by the system, allowing you to concentrate on application tasks rather than thread management. If you have short tasks that require background processing, the managed thread pool is an easy way to take advantage of multiple threads. Use of the thread pool is significantly easier in .NET Framework 4 and later, since you can create Task and Task<TResult> objects that perform asynchronous tasks on thread pool threads. [5]

1.6.1. Thread pool characteristics

Thread pool threads are background threads. Each thread uses the default stack size, runs at the default priority, and is in the multithreaded apartment. Once a thread in the thread pool completes its task, it’s returned to a queue of waiting threads. From this moment it can be reused. This reuse enables applications to avoid the cost of creating a new thread for each task.
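Queuing many short work items is the typical use of the pool. The sketch below assumes a .NET 6+ console app (`ThreadPool.ThreadCount` requires .NET Core 3.0 or later) and uses a `CountdownEvent` so the queuing thread can wait for all items. Because threads are reused, the thread count printed at the end is usually much smaller than the number of work items, though the exact value depends on the machine.

```csharp
using System;
using System.Threading;

const int workItems = 100;
int completed = 0;

// CountdownEvent lets the queuing thread wait until every work item has signaled.
using var countdown = new CountdownEvent(workItems);

for (int i = 0; i < workItems; i++)
{
    ThreadPool.QueueUserWorkItem(_ =>
    {
        Interlocked.Increment(ref completed); // a short piece of background work
        countdown.Signal();
    });
}

countdown.Wait();
Console.WriteLine($"Completed {completed} work items; pool threads now: {ThreadPool.ThreadCount}");
```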

Note: There is only one thread pool per process.

1.6.2. Exceptions in thread pool threads

Unhandled exceptions in thread pool threads terminate the process. There are three exceptions to this rule:

- A System.Threading.ThreadAbortException is thrown in a thread pool thread because Thread.Abort was called (.NET Framework only).
- A System.AppDomainUnloadedException is thrown in a thread pool thread because the application domain is being unloaded (.NET Framework only).
- The common language runtime or a host process terminates the thread.

1.6.3. Maximum number of thread pool threads

The number of operations that can be queued to the thread pool is limited only by available memory. However, the thread pool limits the number of threads that can be active in the process simultaneously. If all thread pool threads are busy, additional work items are queued until threads to execute them become available. The default size of the thread pool for a process depends on several factors, such as the size of the virtual address space. A process can call the ThreadPool.GetMaxThreads method to determine the number of threads.

You can control the maximum number of threads by using the ThreadPool.GetMaxThreads and ThreadPool.SetMaxThreads methods.
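Querying the limits can be sketched as below, assuming a .NET 6+ console app. The values printed depend on the runtime and machine. The final call deliberately asks for zero threads, which is below the processor count, so SetMaxThreads is expected to return false and leave the limits unchanged.

```csharp
using System;
using System.Threading;

// Upper limits for worker threads and I/O completion threads.
ThreadPool.GetMaxThreads(out int maxWorker, out int maxIo);

// How many additional threads can still be started before the limits are reached.
ThreadPool.GetAvailableThreads(out int availableWorker, out int availableIo);

Console.WriteLine($"Max:       worker={maxWorker}, I/O={maxIo}");
Console.WriteLine($"Available: worker={availableWorker}, I/O={availableIo}");

// A request below the processor count is rejected: the call returns false
// and the current limits stay as they were.
bool accepted = ThreadPool.SetMaxThreads(0, 0);
ThreadPool.GetMaxThreads(out int maxWorkerAfter, out _);
Console.WriteLine($"SetMaxThreads(0, 0) accepted: {accepted}");
```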

1.6.4. Thread pool minimums

The thread pool provides new worker threads or I/O completion threads on demand until it reaches a specified minimum for each category. You can use the ThreadPool.GetMinThreads method to obtain these minimum values.

Note: When demand is low, the actual number of thread pool threads can fall below the minimum values.

When a minimum is reached, the thread pool can create additional threads or wait until some tasks complete. The thread pool creates and destroys worker threads in order to optimize throughput, which is defined as the number of tasks that complete per unit of time. Too few threads might not make optimal use of available resources, whereas too many threads could increase resource contention.
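Reading and adjusting the minimums can be sketched like this, assuming a .NET 6+ console app. Doubling the worker minimum is an arbitrary choice for illustration, and the sketch restores the original values afterwards because raising minimums without measuring can increase contention.

```csharp
using System;
using System.Threading;

ThreadPool.GetMinThreads(out int minWorker, out int minIo);
Console.WriteLine($"Min: worker={minWorker}, I/O={minIo}, processors={Environment.ProcessorCount}");

// Raise the worker minimum: the pool can now create up to this many workers
// on demand before it starts deciding whether to add threads or wait.
bool raised = ThreadPool.SetMinThreads(minWorker * 2, minIo);
ThreadPool.GetMinThreads(out int newMinWorker, out _);
Console.WriteLine($"Raised: {raised}, new worker minimum: {newMinWorker}");

// Restore the original values.
bool restored = ThreadPool.SetMinThreads(minWorker, minIo);
```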


You can use the |

1.6.5. When not to use thread pool threads

There are several scenarios in which it’s appropriate to create and manage your own threads instead of using thread pool threads:

- You require a foreground thread.
- You require a thread to have a particular priority.
- You have tasks that cause the thread to block for long periods of time. The thread pool has a maximum number of threads, so a large number of blocked thread pool threads might prevent tasks from starting.
- You need to place threads into a single-threaded apartment. All ThreadPool threads are in the multithreaded apartment.
- You need to have a stable identity associated with the thread, or to dedicate a thread to a task.
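A dedicated thread that covers several of the cases above can be sketched as follows, assuming a .NET 6+ console app; the name "LongRunningWorker" is hypothetical. It has a stable name, is a foreground thread, is not a pool thread, and is placed in a single-threaded apartment only on Windows, since SetApartmentState is not supported on other platforms. A Priority could be set the same way before Start.

```csharp
using System;
using System.Threading;

string? observedName = null;
bool observedPoolThread = true;

// A dedicated, named foreground thread for a long-running, blocking job.
var dedicated = new Thread(() =>
{
    observedName = Thread.CurrentThread.Name;
    observedPoolThread = Thread.CurrentThread.IsThreadPoolThread;
    Thread.Sleep(200); // stands in for a long blocking call
})
{
    Name = "LongRunningWorker", // stable identity, visible in debuggers and logs
    IsBackground = false        // foreground: keeps the process alive until it finishes
};

// Apartment state must be set before Start, and STA is a Windows-only concept.
if (OperatingSystem.IsWindows())
{
    dedicated.SetApartmentState(ApartmentState.STA);
}

dedicated.Start();
dedicated.Join();

Console.WriteLine($"Ran on '{observedName}', pool thread: {observedPoolThread}");
```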

1.7. Overview of synchronization primitives

.NET provides a range of types that you can use to synchronize access to a shared resource or coordinate thread interaction. [6]

1.7.1. WaitHandle class and lightweight synchronization types

Multiple .NET synchronization primitives derive from the System.Threading.WaitHandle class, which encapsulates a native operating system synchronization handle and uses a signaling mechanism for thread interaction. Those classes include:

- System.Threading.Mutex, which grants exclusive access to a shared resource. The state of a mutex is signaled if no thread owns it.
- System.Threading.Semaphore, which limits the number of threads that can access a shared resource or a pool of resources concurrently. The state of a semaphore is set to signaled when its count is greater than zero, and nonsignaled when its count is zero.
- System.Threading.EventWaitHandle, which represents a thread synchronization event and can be either in a signaled or unsignaled state.
- System.Threading.AutoResetEvent, which derives from EventWaitHandle and, when signaled, resets automatically to an unsignaled state after releasing a single waiting thread.
- System.Threading.ManualResetEvent, which derives from EventWaitHandle and, when signaled, stays in a signaled state until the Reset method is called.

In .NET Framework, because WaitHandle derives from System.MarshalByRefObject, these types can be used to synchronize the activities of threads across application domain boundaries.

In .NET Framework, .NET Core, and .NET 5+, some of these types can represent named system synchronization handles, which are visible throughout the operating system and can be used for inter-process synchronization:

- Mutex
- Semaphore (on Windows)
- EventWaitHandle (on Windows)

Lightweight synchronization types don't rely on underlying operating system handles and typically provide better performance. However, they cannot be used for inter-process synchronization. Use those types for thread synchronization within one application.

Some of those types are alternatives to the types derived from WaitHandle. For example, SemaphoreSlim is a lightweight alternative to Semaphore.

```csharp
public class SemaphoreSlim : IDisposable
public sealed class Semaphore : System.Threading.WaitHandle
```

1.7.2. Synchronization of access to a shared resource

.NET provides a range of synchronization primitives to control access to a shared resource by multiple threads.

1.7.2.1. Monitor class

The System.Threading.Monitor class grants mutually exclusive access to a shared resource by acquiring or releasing a lock on the object that identifies the resource. While a lock is held, the thread that holds the lock can again acquire and release the lock. Any other thread is blocked from acquiring the lock and the Monitor.Enter method waits until the lock is released. The Enter method acquires a released lock. You can also use the Monitor.TryEnter method to specify the amount of time during which a thread attempts to acquire a lock. Because the Monitor class has thread affinity, the thread that acquired a lock must release the lock by calling the Monitor.Exit method.
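In everyday C#, the `lock` statement is the usual way to use Monitor, and TryEnter adds a timeout. The sketch below assumes a .NET 6+ console app; the counter and the 100 ms timeout are illustrative. Note that the thread that acquired the lock is the one that releases it, as thread affinity requires.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

object sync = new object();
int counter = 0;

// `lock` is C# shorthand for Monitor.Enter/Monitor.Exit wrapped in try/finally.
Parallel.For(0, 1000, _ =>
{
    lock (sync)
    {
        counter++;
    }
});

// Monitor.TryEnter with a timeout: give up instead of blocking forever.
bool acquired = false;
try
{
    Monitor.TryEnter(sync, TimeSpan.FromMilliseconds(100), ref acquired);
    if (acquired)
    {
        Console.WriteLine($"Lock acquired, counter = {counter}");
    }
}
finally
{
    // Only the thread that acquired the lock may release it.
    if (acquired) Monitor.Exit(sync);
}
```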

You can coordinate the interaction of threads that acquire a lock on the same object by using the Monitor.Wait, Monitor.Pulse, and Monitor.PulseAll methods.


Use the |

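```csharp
using System;
using System.Collections;
using System.Collections.Generic;
using System.Threading;

var ch = new BlockingChannel<object>();

// Producer: adds 0..9, then null as an end-of-stream marker.
ThreadPool.QueueUserWorkItem(_ =>
{
    for (int i = 0; i < 10; i++)
    {
        ch.Add(i);
    }
    ch.Add(null!);
});

// Consumer: the enumerator blocks in Get() until a value is available.
foreach (var v in ch)
{
    Console.Write($"{v} ");
}

// A one-slot channel: Add waits while the slot is full, Get waits while it is empty.
class BlockingChannel<T> : IEnumerable<T> where T : class
{
    private readonly object lockObj = new();
    private bool _isEmpty = true;
    private T? _val;

    public void Add(T value)
    {
        Monitor.Enter(lockObj);
        try
        {
            // Wait releases the lock and blocks until another thread calls Pulse.
            while (!_isEmpty)
            {
                Monitor.Wait(lockObj);
            }
            _isEmpty = false;
            _val = value;
            // Wake the waiting consumer. Pulse is enough for one producer and one
            // consumer; use PulseAll when several threads may wait on lockObj.
            Monitor.Pulse(lockObj);
        }
        finally
        {
            Monitor.Exit(lockObj);
        }
    }

    public T? Get()
    {
        Monitor.Enter(lockObj);
        try
        {
            while (_isEmpty)
            {
                Monitor.Wait(lockObj);
            }
            _isEmpty = true;
            Monitor.Pulse(lockObj); // wake the waiting producer
            return _val;
        }
        finally
        {
            Monitor.Exit(lockObj);
        }
    }

    public IEnumerator<T> GetEnumerator()
    {
        while (true)
        {
            T? val = Get();
            if (val == null) break; // null marks the end of the stream
            yield return val;
        }
    }

    IEnumerator IEnumerable.GetEnumerator() => GetEnumerator();
}

// $ dotnet run
// 0 1 2 3 4 5 6 7 8 9
```

1.7.2.2. Mutex class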

The System.Threading.Mutex class, like Monitor, grants exclusive access to a shared resource. Use one of the Mutex.WaitOne method overloads to request the ownership of a mutex. Like Monitor, Mutex has thread affinity and the thread that acquired a mutex must release it by calling the Mutex.ReleaseMutex method.

Unlike Monitor, the Mutex class can be used for inter-process synchronization. To do that, use a named mutex, which is visible throughout the operating system. To create a named mutex instance, use a Mutex constructor that specifies a name. You can also call the Mutex.OpenExisting method to open an existing named system mutex.
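Creating, opening, and owning a named mutex can be sketched as follows, assuming a .NET 6+ console app. The mutex name is hypothetical and includes a GUID so the demo cannot collide with a real mutex. A second process that used the same name would see the same operating-system object.

```csharp
using System;
using System.Threading;

// Hypothetical name; the GUID suffix keeps this demo from colliding with real mutexes.
string name = $"ParallelNotes.Demo.{Guid.NewGuid():N}";

using var mutex = new Mutex(initiallyOwned: false, name, out bool createdNew);

// Any code (in this or another process) that knows the name can open the same mutex.
bool opened = Mutex.TryOpenExisting(name, out Mutex? sameMutex);
sameMutex?.Dispose();

mutex.WaitOne();          // request ownership
try
{
    Console.WriteLine($"Owns '{name}' (created new: {createdNew}, opened by name: {opened})");
}
finally
{
    mutex.ReleaseMutex(); // the owning thread must release it
}
```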

1.7.2.3. SpinLock structure

The System.Threading.SpinLock structure, like Monitor, grants exclusive access to a shared resource based on the availability of a lock. When SpinLock attempts to acquire a lock that is unavailable, it waits in a loop, repeatedly checking until the lock becomes available.

```csharp
using System;
using System.Linq;
using System.Text;
using System.Threading;
using System.Threading.Tasks;

SpinLock sl = new SpinLock();
StringBuilder sb = new StringBuilder();

// Action taken by each parallel job.
// Append to the StringBuilder 10000 times, protecting
// access to sb with a SpinLock.
Action action = () =>
{
    for (int i = 0; i < 10000; i++)
    {
        bool gotLock = false;
        try
        {
            sl.Enter(ref gotLock);
            sb.Append(i % 10);
        }
        finally
        {
            // Only give up the lock if you actually acquired it
            if (gotLock) { sl.Exit(); }
        }
    }
};

// Invoke 3 concurrent instances of the action above
Parallel.Invoke(action, action, action);

// Check/Show the results
Console.WriteLine("sb.Length = {0} (should be 30000)", sb.Length);
Console.WriteLine("number of occurrences of '5' in sb: {0} (should be 3000)",
    sb.ToString().Count(c => c == '5'));
```

1.7.2.4. ReaderWriterLockSlim class

The System.Threading.ReaderWriterLockSlim class grants exclusive access to a shared resource for writing and allows multiple threads to access the resource simultaneously for reading. You might want to use ReaderWriterLockSlim to synchronize access to a shared data structure that supports thread-safe read operations but requires exclusive access to perform write operations. When a thread requests exclusive access (for example, by calling the ReaderWriterLockSlim.EnterWriteLock method), subsequent reader and writer requests block until all existing readers have exited the lock, and the writer has entered and exited the lock.

```csharp
using System;
using System.Collections.Generic;
using System.Diagnostics.CodeAnalysis;
using System.Threading;

sealed class SynchronizedDictionary<TKey, TValue> : IDisposable where TKey : notnull
{
    private readonly Dictionary<TKey, TValue> _dictionary = new();
    private readonly ReaderWriterLockSlim _lock = new();

    public void Add(TKey key, TValue value)
    {
        _lock.EnterWriteLock(); // exclusive: blocks readers and writers
        try { _dictionary.Add(key, value); }
        finally { _lock.ExitWriteLock(); }
    }

    // Adds the pair, or overwrites an existing value that differs.
    public void AddOrUpdate(TKey key, TValue value)
    {
        // Upgradeable read: coexists with ordinary readers, but only one thread
        // at a time can hold it, so the check-then-write below cannot race.
        _lock.EnterUpgradeableReadLock();
        try
        {
            if (_dictionary.TryGetValue(key, out var current) &&
                EqualityComparer<TValue>.Default.Equals(current, value))
            {
                return; // already up to date, no write lock needed
            }

            _lock.EnterWriteLock(); // upgrade to exclusive access
            try { _dictionary[key] = value; }
            finally { _lock.ExitWriteLock(); }
        }
        finally { _lock.ExitUpgradeableReadLock(); }
    }

    public bool TryGetValue(TKey key, [MaybeNullWhen(false)] out TValue value)
    {
        _lock.EnterReadLock(); // shared: many readers at once
        try { return _dictionary.TryGetValue(key, out value); }
        finally { _lock.ExitReadLock(); }
    }

    // ReaderWriterLockSlim may hold wait handles, so release them deterministically.
    public void Dispose() => _lock.Dispose();
}
```

1.7.2.5. Semaphore and SemaphoreSlim classes

The System.Threading.Semaphore and System.Threading.SemaphoreSlim classes limit the number of threads that can access a shared resource or a pool of resources concurrently. Additional threads that request the resource wait until any thread releases the semaphore. Because the semaphore doesn’t have thread affinity, a thread can acquire the semaphore and another one can release it.

SemaphoreSlim is a lightweight alternative to Semaphore and can be used only for synchronization within a single process boundary.

On Windows, you can use Semaphore for inter-process synchronization. To do that, create a Semaphore instance that represents a named system semaphore by using one of the Semaphore constructors that specifies a name or the Semaphore.OpenExisting method. SemaphoreSlim doesn't support named system semaphores.
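Limiting concurrency with SemaphoreSlim can be sketched as follows, assuming a .NET 6+ console app; `UseResourceAsync` is a hypothetical helper and the 50 ms delay stands in for real work. Ten callers compete for two slots, and the sketch records the highest number of simultaneous holders. Because the continuation after `await` may run on a different thread, Release is often called by a thread other than the one that entered, which works since the semaphore has no thread affinity.

```csharp
using System;
using System.Linq;
using System.Threading;
using System.Threading.Tasks;

// At most 2 callers may hold the resource at once.
using var gate = new SemaphoreSlim(initialCount: 2, maxCount: 2);
object statsLock = new();
int inside = 0, maxObserved = 0;

async Task UseResourceAsync()
{
    await gate.WaitAsync();
    try
    {
        lock (statsLock) { inside++; maxObserved = Math.Max(maxObserved, inside); }
        await Task.Delay(50); // simulate work while holding a slot
        lock (statsLock) { inside--; }
    }
    finally
    {
        gate.Release(); // may run on a different thread than WaitAsync
    }
}

await Task.WhenAll(Enumerable.Range(0, 10).Select(_ => UseResourceAsync()));
Console.WriteLine($"Maximum concurrent holders observed: {maxObserved}");
```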

1.7.3. Thread interaction, or signaling

Thread interaction (or thread signaling) means that a thread must wait for notification, or a signal, from one or more threads in order to proceed. For example, if thread A calls the Thread.Join method of thread B, thread A is blocked until thread B completes. The synchronization primitives described in the preceding section provide a different mechanism for signaling: by releasing a lock, a thread notifies another thread that it can proceed by acquiring the lock.

1.7.3.1. EventWaitHandle, AutoResetEvent, ManualResetEvent, and ManualResetEventSlim classes

The System.Threading.EventWaitHandle class represents a thread synchronization event.

A synchronization event can be either in an unsignaled or signaled state. When the state of an event is unsignaled, a thread that calls the event’s WaitOne overload is blocked until an event is signaled. The EventWaitHandle.Set method sets the state of an event to signaled.

The behavior of an EventWaitHandle that has been signaled depends on its reset mode:

- An EventWaitHandle created with the EventResetMode.AutoReset flag resets automatically after releasing a single waiting thread. It's like a turnstile that allows only one thread through each time it's signaled. The System.Threading.AutoResetEvent class, which derives from EventWaitHandle, represents that behavior.
- An EventWaitHandle created with the EventResetMode.ManualReset flag remains signaled until its Reset method is called. It's like a gate that is closed until signaled and then stays open until someone closes it. The System.Threading.ManualResetEvent class, which derives from EventWaitHandle, represents that behavior. The System.Threading.ManualResetEventSlim class is a lightweight alternative to ManualResetEvent.

On Windows, you can use EventWaitHandle for inter-process synchronization. To do that, create an EventWaitHandle instance that represents a named system synchronization event by using one of the EventWaitHandle constructors that specifies a name or the EventWaitHandle.OpenExisting method.

Note: Event wait handles are not .NET events. There are no delegates or event handlers involved. The word "event" is used to describe them because they have traditionally been referred to as operating-system events, and because the act of signaling the wait handle indicates to waiting threads that an event has occurred.

- Event Wait Handles That Reset Automatically [7]

You create an automatic reset event by specifying EventResetMode.AutoReset when you create the EventWaitHandle object. As its name implies, this synchronization event resets automatically when signaled, after releasing a single waiting thread. Signal the event by calling its Set method.

Automatic reset events are usually used to provide exclusive access to a resource for a single thread at a time. A thread requests the resource by calling the WaitOne method. If no other thread is holding the wait handle, the method returns true and the calling thread has control of the resource.

If an automatic reset event is signaled when no threads are waiting, it remains signaled until a thread attempts to wait on it. The event releases the thread and immediately resets, blocking subsequent threads.

- Event Wait Handles That Reset Manually [7]

You create a manual reset event by specifying EventResetMode.ManualReset when you create the EventWaitHandle object. As its name implies, this synchronization event must be reset manually after it has been signaled. Until it is reset, by calling its Reset method, threads that wait on the event handle proceed immediately without blocking.

A manual reset event acts like the gate of a corral. When the event is not signaled, threads that wait on it block, like horses in a corral. When the event is signaled, by calling its Set method, all waiting threads are free to proceed. The event remains signaled until its Reset method is called. This makes the manual reset event an ideal way to hold up threads that need to wait until one thread finishes a task.

Like horses leaving a corral, it takes time for the released threads to be scheduled by the operating system and to resume execution. If the Reset method is called before all the threads have resumed execution, the remaining threads once again block. Which threads resume and which threads block depends on random factors like the load on the system, the number of threads waiting for the scheduler, and so on. This is not a problem if the thread that signals the event ends after signaling, which is the most common usage pattern. If you want the thread that signaled the event to begin a new task after all the waiting threads have resumed, you must block it until all the waiting threads have resumed. Otherwise, you have a race condition, and the behavior of your code is unpredictable.

```csharp
using System;
using System.Threading;

EventWaitHandle ewh = new EventWaitHandle(false, EventResetMode.ManualReset);

// Both work items block on the same closed gate.
ThreadPool.QueueUserWorkItem(_ => { ewh.WaitOne(); Console.WriteLine("FooSignaled"); });
ThreadPool.QueueUserWorkItem(_ => { ewh.WaitOne(); Console.WriteLine("BarSignaled"); });

ewh.Set();          // open the gate: every waiting thread is released, and it stays open
Thread.Sleep(1000); // keep the process alive long enough for both work items to print

// $ dotnet run
// BarSignaled
// FooSignaled
// (the order can differ between runs)
```
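For contrast with the manual-reset example above, the following minimal sketch shows the automatic-reset (turnstile) behavior through AutoResetEvent, which derives from EventWaitHandle. The three workers, the `released` counter, and the 100 ms pause are illustrative choices. Each Set call lets exactly one waiting thread through, and the sketch waits for that thread before signaling again, because a Set on an event that is already signaled has no additional effect.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// An AutoResetEvent works like a turnstile: each Set() lets exactly one waiting thread through.
using AutoResetEvent turnstile = new AutoResetEvent(initialState: false);
int released = 0;
int[] observed = new int[3];

Task[] workers = new Task[3];
for (int i = 0; i < workers.Length; i++)
{
    workers[i] = Task.Run(() =>
    {
        turnstile.WaitOne();                 // block until a signal is available
        Interlocked.Increment(ref released); // record that this thread got through
    });
}

for (int k = 0; k < workers.Length; k++)
{
    turnstile.Set();                                            // one signal...
    SpinWait.SpinUntil(() => Volatile.Read(ref released) > k); // ...is consumed by exactly one thread
    Thread.Sleep(100);                                          // give another thread time to slip through (none will)
    observed[k] = Volatile.Read(ref released);
    Console.WriteLine($"After Set #{k + 1}: {observed[k]} thread(s) released");
}

Task.WaitAll(workers);
```

Each line of output reports one more released thread than the previous one, never more.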

1.7.3.2. CountdownEvent class

The System.Threading.CountdownEvent class represents an event that becomes set when its count is zero. While CountdownEvent.CurrentCount is greater than zero, a thread that calls CountdownEvent.Wait is blocked. Call CountdownEvent.Signal to decrement an event’s count.

In contrast to ManualResetEvent or ManualResetEventSlim, which you can use to unblock multiple threads with a signal from one thread, you can use CountdownEvent to unblock one or more threads with signals from multiple threads.
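A minimal sketch of the many-signals, one-waiter case, assuming four worker tasks that each compute one value (the squares are placeholder work): the main thread blocks in Wait until every worker has called Signal once.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

const int workerCount = 4;
CountdownEvent allDone = new CountdownEvent(workerCount); // starts at 4
int[] results = new int[workerCount];

for (int i = 0; i < workerCount; i++)
{
    int index = i; // copy the loop variable for the closure
    Task.Run(() =>
    {
        results[index] = index * index; // each worker produces one result
        allDone.Signal();               // decrement the count by one
    });
}

allDone.Wait(); // blocks until CurrentCount reaches zero
Console.WriteLine(string.Join(", ", results)); // 0, 1, 4, 9
```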

1.7.3.3. Barrier class

The System.Threading.Barrier class represents a thread execution barrier. A thread that calls the Barrier.SignalAndWait method signals that it reached the barrier and waits until other participant threads reach the barrier. When all participant threads reach the barrier, they proceed and the barrier is reset and can be used again.

You might use Barrier when one or more threads require the results of other threads before proceeding to the next computation phase.
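A minimal sketch of phased work with Barrier, assuming three dedicated threads and two phases (both counts are arbitrary). The post-phase action passed to the constructor runs once each time all participants arrive, before any of them is released into the next phase, so every "phase 0" line is logged before the first marker and every "phase 1" line after it.

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading;

const int participants = 3;
ConcurrentQueue<string> log = new ConcurrentQueue<string>();

// The optional post-phase action runs once per phase, after every participant has arrived.
using Barrier barrier = new Barrier(participants, b =>
    log.Enqueue($"--- phase {b.CurrentPhaseNumber} complete ---"));

Thread[] threads = new Thread[participants];
for (int i = 0; i < participants; i++)
{
    int id = i;
    threads[i] = new Thread(() =>
    {
        for (int phase = 0; phase < 2; phase++)
        {
            log.Enqueue($"worker {id} finished phase {phase}"); // this phase's work
            barrier.SignalAndWait(); // wait until all participants reach this point
        }
    });
    threads[i].Start();
}

foreach (Thread t in threads) t.Join();
foreach (string line in log) Console.WriteLine(line);
```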

1.7.4. Interlocked class

The System.Threading.Interlocked class provides static methods that perform simple atomic operations on a variable. Those atomic operations include addition, increment and decrement, exchange and conditional exchange that depends on a comparison, and read operation of a 64-bit integer value.
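Increment and Add cover counters. For an update that has no dedicated Interlocked method, a common idiom is a compare-and-swap retry loop on Interlocked.CompareExchange. The sketch below keeps a running maximum that way; `UpdateMax` is a hypothetical helper written for this example, not a framework method.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

int counter = 0;
int maximum = int.MinValue;

Parallel.For(0, 10_000, i =>
{
    Interlocked.Increment(ref counter); // atomic read-modify-write, no lock needed
    UpdateMax(ref maximum, i);          // atomic "max" built from CompareExchange
});

Console.WriteLine($"counter = {counter}, maximum = {maximum}"); // counter = 10000, maximum = 9999

// Hypothetical helper: retry until our compare-and-swap wins or the stored value is already larger.
static void UpdateMax(ref int target, int candidate)
{
    int current = Volatile.Read(ref target);
    while (candidate > current)
    {
        // Store candidate only if target still holds 'current'; returns the value actually found.
        int found = Interlocked.CompareExchange(ref target, candidate, current);
        if (found == current) return; // our write succeeded
        current = found;              // another thread changed it; compare against the new value
    }
}
```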

1.7.5. SpinWait structure

The System.Threading.SpinWait structure provides support for spin-based waiting. You might want to use it when a thread has to wait for an event to be signaled or a condition to be met, but when the actual wait time is expected to be less than the waiting time required by using a wait handle or by otherwise blocking the thread. By using SpinWait, you can specify a short period of time to spin while waiting, and then yield (for example, by waiting or sleeping) only if the condition was not met in the specified time.
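A minimal sketch of both ways to use SpinWait, assuming a Boolean flag that another thread sets after a short delay: a manual loop around SpinOnce, and the static SpinWait.SpinUntil helper with a timeout. The flag is read and written through the Volatile class so that the spinning thread is guaranteed to observe the update.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

bool ready = false;

// Another thread sets the flag after a short delay.
Task producer = Task.Run(() =>
{
    Thread.Sleep(20);
    Volatile.Write(ref ready, true); // publish the flag to the spinning thread
});

// Manual loop: SpinOnce busy-spins at first and starts yielding the processor after more iterations.
SpinWait spinner = new SpinWait();
while (!Volatile.Read(ref ready))
{
    spinner.SpinOnce();
}
Console.WriteLine($"Flag observed after {spinner.Count} SpinOnce calls");

// Static helper: spins until the condition holds or the timeout (in ms) expires; returns false on timeout.
bool signaled = SpinWait.SpinUntil(() => Volatile.Read(ref ready), millisecondsTimeout: 1000);
Console.WriteLine($"SpinUntil returned {signaled}");

producer.Wait();
```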

1.8. Thread-safe collections

The System.Collections.Concurrent namespace includes several collection classes that are both thread-safe and scalable. Multiple threads can safely and efficiently add or remove items from these collections, without requiring additional synchronization in user code. When you write new code, use the concurrent collection classes whenever multiple threads will write to the collection concurrently. If you’re only reading from a shared collection, then you can use the classes in the System.Collections.Generic namespace.
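A minimal sketch of several threads writing to one concurrent collection without user-level locks, assuming a word-count task over a small hard-coded array. ConcurrentDictionary.AddOrUpdate either inserts the first count for a key or replaces the existing one, retrying internally if another thread changed the value in between, so no increment is lost.

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading.Tasks;

string[] words = { "apple", "pear", "apple", "fig", "pear", "apple" };
ConcurrentDictionary<string, int> counts = new ConcurrentDictionary<string, int>();

// Many threads update the same dictionary; the update factory may run more than once under contention.
Parallel.ForEach(words, word =>
    counts.AddOrUpdate(word, addValue: 1, updateValueFactory: (_, old) => old + 1));

foreach (var pair in counts) Console.WriteLine($"{pair.Key}: {pair.Value}");
```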

1.8.1. Fine-grained locking and lock-free mechanisms

Some of the concurrent collection types use lightweight synchronization mechanisms such as SpinLock, SpinWait, SemaphoreSlim, and CountdownEvent. These synchronization types typically use busy spinning for brief periods before they put the thread into a true Wait state. When wait times are expected to be short, spinning is far less computationally expensive than waiting, which involves an expensive kernel transition. For collection classes that use spinning, this efficiency means that multiple threads can add and remove items at a high rate.

The ConcurrentQueue<T> and ConcurrentStack<T> classes don’t use locks at all. Instead, they rely on Interlocked operations to achieve thread safety.

The following table lists the collection types in the System.Collections.Concurrent namespace:

| Type | Description |
|---|---|
| BlockingCollection<T> | Provides bounding and blocking functionality for any type that implements IProducerConsumerCollection<T>. |
| ConcurrentDictionary<TKey,TValue> | Thread-safe implementation of a dictionary of key-value pairs. |
| ConcurrentQueue<T> | Thread-safe implementation of a FIFO (first-in, first-out) queue. |
| ConcurrentStack<T> | Thread-safe implementation of a LIFO (last-in, first-out) stack. |
| ConcurrentBag<T> | Thread-safe implementation of an unordered collection of elements. |
| IProducerConsumerCollection<T> | The interface that a type must implement to be used in a BlockingCollection<T>. |

1.8.2. What’s the diff of BlockingCollection<T> and Channel<T>?

BlockingCollection<T> and Channel<T> are both useful for producer/consumer scenarios where one thread or task is producing data and another thread or task is consuming that data. However, their implementation and features are quite different, and they are designed to handle different use-cases.

BlockingCollection<T> is part of the System.Collections.Concurrent namespace and was introduced in .NET Framework 4.0. It provides a thread-safe, blocking and bounded collection that can be used with multiple producers and consumers.

Benefits of BlockingCollection<T>:

- It simplifies thread communication, as it blocks and waits when trying to add to a full collection or take from an empty one.
- It provides Add and Take methods for managing the collection: Take blocks while the collection is empty, and Add blocks while a bounded collection is full.
- It implements IEnumerable<T>, allowing easy enumeration of the items in the collection.
- It has built-in functionality for creating a complete producer/consumer on top of any IProducerConsumerCollection<T>.
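A minimal producer/consumer sketch, assuming one producer task, one consumer task, and a capacity of 2 (chosen only so that the producer sometimes blocks). CompleteAdding tells the consumer that no more items will arrive, which lets GetConsumingEnumerable finish once the collection is empty.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Threading.Tasks;

// Bounded to 2 items: Add blocks while the collection is full, Take blocks while it is empty.
using BlockingCollection<int> buffer = new BlockingCollection<int>(boundedCapacity: 2);
List<int> consumed = new List<int>();

Task producer = Task.Run(() =>
{
    for (int i = 1; i <= 5; i++) buffer.Add(i);
    buffer.CompleteAdding(); // no more items are coming
});

Task consumer = Task.Run(() =>
{
    // Removes items one by one, blocking while empty; ends after CompleteAdding once the collection is drained.
    foreach (int item in buffer.GetConsumingEnumerable())
        consumed.Add(item);
});

Task.WaitAll(producer, consumer);
Console.WriteLine(string.Join(", ", consumed)); // 1, 2, 3, 4, 5
```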

Channel<T> is part of the System.Threading.Channels namespace and was introduced in .NET Core 3.0. It’s newer and designed for the modern .NET threading infrastructure using async and await design patterns. [8]

Benefits of Channel<T>:

- It supports the async programming model and can be used with the async and await keywords in C#.
- It is designed for scenarios where you have asynchronous data streams that need to be processed.
- It provides both synchronous and asynchronous methods for adding (Writer.TryWrite, Writer.WriteAsync) and receiving (Reader.TryRead, Reader.ReadAsync) data.
- It supports back pressure: with a bounded channel, a producer calling WriteAsync waits asynchronously while the channel is full.
- It allows for creating unbounded or bounded channels via Channel.CreateUnbounded<T> and Channel.CreateBounded<T>.
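The same pipeline with Channel<T>, as a sketch under the same assumptions (one producer, one consumer, capacity 2). Here the producer awaits WriteAsync instead of blocking a thread, and the consumer reads with ReadAllAsync and await foreach, which are available on .NET Core 3.0 and later.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Channels;
using System.Threading.Tasks;

// Bounded channel: WriteAsync waits (without blocking a thread) while the channel holds 2 items.
Channel<int> channel = Channel.CreateBounded<int>(capacity: 2);
List<int> received = new List<int>();

Task producer = Task.Run(async () =>
{
    for (int i = 1; i <= 5; i++)
        await channel.Writer.WriteAsync(i);
    channel.Writer.Complete(); // no more items
});

// Yields items as they arrive; the loop ends once the writer has completed and the channel is drained.
await foreach (int item in channel.Reader.ReadAllAsync())
    received.Add(item);

await producer;
Console.WriteLine(string.Join(", ", received)); // 1, 2, 3, 4, 5
```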

In general, Channel<T> is more modern and better integrated with the async programming model. Therefore, for new applications Channel<T> is usually the recommended choice.

However, if you have a legacy application where you cannot use async and await extensively, for example one built around threads that block synchronously on the collection, then BlockingCollection<T> might be a better choice.

1.9. Windows Presentation Foundation (WPF): Threading model

Typically, WPF applications start with two threads: one for handling rendering and another for managing the UI. The rendering thread effectively runs hidden in the background while the UI thread receives input, handles events, paints the screen, and runs application code. Most applications use a single UI thread, although in some situations it is best to use several. [11]

The UI thread queues work items inside an object called a Dispatcher. The Dispatcher selects work items on a priority basis and runs each one to completion. Every UI thread must have at least one Dispatcher, and each Dispatcher can execute work items in exactly one thread.

The trick to building responsive, user-friendly applications is to maximize the Dispatcher throughput by keeping the work items small. This way items never get stale sitting in the Dispatcher queue waiting for processing. Any perceivable delay between input and response can frustrate a user.

How then are WPF applications supposed to handle big operations? What if your code involves a large calculation or needs to query a database on some remote server? Usually, the answer is to handle the big operation in a separate thread, leaving the UI thread free to tend to items in the Dispatcher queue. When the big operation is complete, it can report its result back to the UI thread for display.

If only one thread can modify the UI, how do background threads interact with the user? A background thread can ask the UI thread to perform an operation on its behalf. It does this by registering a work item with the Dispatcher of the UI thread. The Dispatcher class provides the methods for registering work items: Dispatcher.InvokeAsync, Dispatcher.BeginInvoke, and Dispatcher.Invoke. These methods schedule a delegate for execution. Invoke is a synchronous call – that is, it doesn’t return until the UI thread actually finishes executing the delegate. InvokeAsync and BeginInvoke are asynchronous and return immediately.

1.10. The volatile keyword

The volatile keyword indicates that a field might be modified by multiple threads that are executing at the same time. The compiler, the runtime system, and even hardware may rearrange reads and writes to memory locations for performance reasons. Fields that are declared volatile are excluded from certain kinds of optimizations. There is no guarantee of a single total ordering of volatile writes as seen from all threads of execution. [9]

| On a multiprocessor system, a volatile read operation does not guarantee to obtain the latest value written to that memory location by any processor. Similarly, a volatile write operation does not guarantee that the value written would be immediately visible to other processors. |

The volatile keyword can be applied to fields of these types:

- Reference types.
- Pointer types (in an unsafe context). Note that although the pointer itself can be volatile, the object that it points to cannot. In other words, you cannot declare a "pointer to volatile."
- Simple types such as sbyte, byte, short, ushort, int, uint, char, float, and bool.
- An enum type with one of the following base types: byte, sbyte, short, ushort, int, or uint.
- Generic type parameters known to be reference types.
- IntPtr and UIntPtr.

Other types, including double and long, cannot be marked volatile because reads and writes to fields of those types cannot be guaranteed to be atomic. To protect multi-threaded access to those types of fields, use the Interlocked class members or protect access using the lock statement.

The volatile keyword can only be applied to fields of a class or struct. Local variables cannot be declared volatile.
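A sketch of the Interlocked advice for a 64-bit value, assuming a hypothetical byte total updated from many threads. Interlocked.Add performs the atomic update and Interlocked.Read returns an untorn 64-bit value, which matters on 32-bit platforms where a plain long read is not guaranteed to be atomic. A local variable stands in for the field to keep the example self-contained.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

long totalBytes = 0; // stands in for a long field, which could not be declared volatile

Parallel.For(0, 1_000, _ =>
{
    Interlocked.Add(ref totalBytes, 4_096L); // atomic 64-bit addition from many threads
});

long snapshot = Interlocked.Read(ref totalBytes); // atomic 64-bit read, even on 32-bit hardware
Console.WriteLine(snapshot); // 4096000
```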

2. Asynchronous programming patterns

.NET provides three patterns for performing asynchronous operations:

- Task-based Asynchronous Pattern (TAP), which uses a single method to represent the initiation and completion of an asynchronous operation. TAP was introduced in .NET Framework 4. It’s the recommended approach to asynchronous programming in .NET. The async and await keywords in C# and the Async and Await operators in Visual Basic add language support for TAP.
- Event-based Asynchronous Pattern (EAP), which is the event-based legacy model for providing asynchronous behavior. It requires a method that has the Async suffix and one or more events, event handler delegate types, and EventArgs-derived types. EAP was introduced in .NET Framework 2.0. It’s no longer recommended for new development.
- Asynchronous Programming Model (APM) pattern (also called the IAsyncResult pattern), which is the legacy model that uses the IAsyncResult interface to provide asynchronous behavior. In this pattern, asynchronous operations require Begin and End methods (for example, BeginWrite and EndWrite to implement an asynchronous write operation). This pattern is no longer recommended for new development.
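Legacy APM members can still be consumed through TAP. The sketch below, which uses a MemoryStream only so that it is self-contained, wraps the Stream.BeginRead/EndRead pair into a single Task<int> with TaskFactory<TResult>.FromAsync and awaits it. In real code you would call Stream.ReadAsync directly; the adapter is mainly useful for older types that expose nothing but Begin/End methods.

```csharp
using System;
using System.IO;
using System.Text;
using System.Threading.Tasks;

byte[] data = Encoding.UTF8.GetBytes("hello, TAP");
using MemoryStream stream = new MemoryStream(data);
byte[] buffer = new byte[data.Length];

// APM: a Begin/End method pair. FromAsync adapts the pair to one awaitable Task<int>.
int bytesRead = await Task<int>.Factory.FromAsync(
    stream.BeginRead, stream.EndRead, buffer, 0, buffer.Length, state: null);

Console.WriteLine($"{bytesRead} bytes: {Encoding.UTF8.GetString(buffer, 0, bytesRead)}"); // 10 bytes: hello, TAP
```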

3. Lazy Initialization

Lazy initialization of an object means that its creation is deferred until it is first used. (For this topic, the terms lazy initialization and lazy instantiation are synonymous.) Lazy initialization is primarily used to improve performance, avoid wasteful computation, and reduce program memory requirements. [12]

Although you can write your own code to perform lazy initialization, we recommend that you use Lazy<T> instead. Lazy<T> and its related types also support thread-safety and provide a consistent exception propagation policy.

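| Type | Description |
|---|---|
| Lazy<T> | A wrapper class that provides lazy initialization semantics for any class library or user-defined type. |
| ThreadLocal<T> | Resembles Lazy<T>, except that it provides lazy-initialization semantics on a thread-local basis: every thread has access to its own unique value. |
| LazyInitializer | Provides advanced static (Shared in Visual Basic) methods for lazy initialization of objects without the overhead of a class. |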
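A minimal sketch of Lazy<T> with LazyThreadSafetyMode.ExecutionAndPublication, assuming a placeholder "configuration" string that is slow to create. Eight concurrent first accesses to Value still run the factory only once.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

int factoryCalls = 0;

// ExecutionAndPublication (also the default for new Lazy<T>(factory)) runs the factory at most once,
// even when many threads read .Value at the same moment.
Lazy<string> config = new Lazy<string>(() =>
{
    Interlocked.Increment(ref factoryCalls);
    Thread.Sleep(100); // simulate expensive initialization
    return "loaded";
}, LazyThreadSafetyMode.ExecutionAndPublication);

Console.WriteLine(config.IsValueCreated); // False: nothing has run yet

string[] seen = new string[8];
Parallel.For(0, seen.Length, i => seen[i] = config.Value); // concurrent first access

Console.WriteLine($"{config.IsValueCreated}, factory ran {factoryCalls} time(s)"); // True, factory ran 1 time(s)
```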

4. Parallel programming in .NET

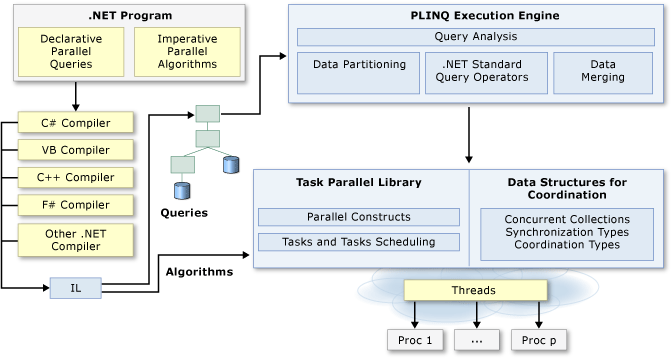

Many personal computers and workstations have multiple CPU cores that enable multiple threads to be executed simultaneously. To take advantage of the hardware, you can parallelize your code to distribute work across multiple processors. [13]

In the past, parallelization required low-level manipulation of threads and locks. Visual Studio and .NET enhance support for parallel programming by providing a runtime, class library types, and diagnostic tools. These features, which were introduced in .NET Framework 4, simplify parallel development. You can write efficient, fine-grained, and scalable parallel code in a natural idiom without having to work directly with threads or the thread pool.

The following illustration provides a high-level overview of the parallel programming architecture in .NET.

4.1. Task Parallel Library (TPL)

The Task Parallel Library (TPL) is a set of public types and APIs in the System.Threading and System.Threading.Tasks namespaces. The purpose of the TPL is to make developers more productive by simplifying the process of adding parallelism and concurrency to applications. The TPL dynamically scales the degree of concurrency to use all the available processors most efficiently. In addition, the TPL handles the partitioning of the work, the scheduling of threads on the ThreadPool, cancellation support, state management, and other low-level details. By using TPL, you can maximize the performance of your code while focusing on the work that your program is designed to accomplish.

4.2. Data Parallelism (Task Parallel Library)

Data parallelism refers to scenarios in which the same operation is performed concurrently (that is, in parallel) on elements in a source collection or array. In data parallel operations, the source collection is partitioned so that multiple threads can operate on different segments concurrently. [14]

The Task Parallel Library (TPL) supports data parallelism through the System.Threading.Tasks.Parallel class. This class provides method-based parallel implementations of for and foreach loops (For and For Each in Visual Basic). You write the loop logic for a Parallel.For or Parallel.ForEach loop much as you would write a sequential loop. You do not have to create threads or queue work items. In basic loops, you do not have to take locks. The TPL handles all the low-level work for you.

```csharp
using System;
using System.Diagnostics;
using System.IO;
using System.Threading;
using System.Threading.Tasks;

// Sum the sizes of all files in the local NuGet package cache, in parallel and sequentially.
string path = Path.Combine(
    Environment.GetFolderPath(Environment.SpecialFolder.UserProfile), ".nuget/packages/");
string[] fileNames = Directory.GetFiles(path, "*", SearchOption.AllDirectories);

Stopwatch sw = Stopwatch.StartNew();
for (int i = 0; i < 2; i++) // run twice; the first round also pays one-time warm-up costs
{
    sw.Restart();
    long parallelTotalSize = 0;
    Parallel.ForEach(fileNames,
        fileName => Interlocked.Add(ref parallelTotalSize, new FileInfo(fileName).Length)); // atomic add
    Console.WriteLine($"Parallel: {parallelTotalSize}, {sw.ElapsedMilliseconds}ms");

    sw.Restart();
    long totalSize = 0;
    foreach (string fileName in fileNames) totalSize += new FileInfo(fileName).Length;
    Console.WriteLine($"Sequential : {totalSize}, {sw.ElapsedMilliseconds}ms");
}

// $ dotnet run
// Parallel: 2743226084, 400ms
// Sequential : 2743226084, 598ms
// Parallel: 2743226084, 220ms
// Sequential : 2743226084, 429ms
```

4.3. Dataflow (Task Parallel Library)

The Task Parallel Library (TPL) provides dataflow components to help increase the robustness of concurrency-enabled applications. These dataflow components are collectively referred to as the TPL Dataflow Library. This dataflow model promotes actor-based programming by providing in-process message passing for coarse-grained dataflow and pipelining tasks. The dataflow components build on the types and scheduling infrastructure of the TPL and integrate with the C#, Visual Basic, and F# language support for asynchronous programming. These dataflow components are useful when you have multiple operations that must communicate with one another asynchronously or when you want to process data as it becomes available.

The TPL Dataflow Library provides a foundation for message passing and parallelizing CPU-intensive and I/O-intensive applications that have high throughput and low latency. Because the runtime manages dependencies between data, you can often avoid the requirement to synchronize access to shared data. In addition, because the runtime schedules work based on the asynchronous arrival of data, dataflow can improve responsiveness and throughput by efficiently managing the underlying threads.

The TPL Dataflow Library consists of dataflow blocks, which are data structures that buffer and process data. The TPL defines three kinds of dataflow blocks: source blocks, target blocks, and propagator blocks.

- A source block acts as a source of data and can be read from.
- A target block acts as a receiver of data and can be written to.
- A propagator block acts as both a source block and a target block, and can be read from and written to.
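A minimal two-block pipeline, as a sketch: a TransformBlock (a propagator block) squares numbers and is linked to an ActionBlock (a target block) that sums them. The types live in the System.Threading.Tasks.Dataflow namespace; depending on the target framework you may need to reference the System.Threading.Tasks.Dataflow NuGet package.

```csharp
using System;
using System.Threading.Tasks;
using System.Threading.Tasks.Dataflow;

int sum = 0;

// Propagator block: receives ints, emits their squares.
TransformBlock<int, int> square = new TransformBlock<int, int>(n => n * n);

// Target block: consumes the squares. ActionBlock processes one message at a time by default,
// so updating 'sum' here needs no lock.
ActionBlock<int> accumulate = new ActionBlock<int>(n => { sum += n; });

// Link source to target and let completion flow down the pipeline.
square.LinkTo(accumulate, new DataflowLinkOptions { PropagateCompletion = true });

for (int i = 1; i <= 4; i++) square.Post(i); // write messages into the pipeline
square.Complete();                           // no more input

await accumulate.Completion; // wait until every message has been processed
Console.WriteLine(sum);      // 1 + 4 + 9 + 16 = 30
```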

4.4. Task-based asynchronous programming

The Task Parallel Library (TPL) is based on the concept of a task, which represents an asynchronous operation. In some ways, a task resembles a thread or ThreadPool work item but at a higher level of abstraction. The term task parallelism refers to one or more independent tasks running concurrently. Tasks provide two primary benefits: [15]

- More efficient and more scalable use of system resources.

Behind the scenes, tasks are queued to the ThreadPool, which has been enhanced with algorithms that determine and adjust to the number of threads. These algorithms provide load balancing to maximize throughput. This process makes tasks relatively lightweight, and you can create many of them to enable fine-grained parallelism.

- More programmatic control than is possible with a thread or work item.

Tasks and the framework built around them provide a rich set of APIs that support waiting, cancellation, continuations, robust exception handling, detailed status, custom scheduling, and more.

For both reasons, TPL is the preferred API for writing multi-threaded, asynchronous, and parallel code in .NET.
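A short sketch of that programmatic control, assuming an arbitrary CPU-bound job (summing 1 to 1000 in four chunks): starting tasks, waiting for all of them, attaching a continuation, and observing the exception of a faulted task.

```csharp
using System;
using System.Linq;
using System.Threading.Tasks;

// Start four independent tasks; each one is queued to the ThreadPool.
Task<int>[] parts = Enumerable.Range(0, 4)
    .Select(p => Task.Run(() => Enumerable.Range(p * 250 + 1, 250).Sum()))
    .ToArray();

// Waiting: WhenAll completes when every task has finished and returns all results.
int[] partialSums = await Task.WhenAll(parts);

// Continuation: follow-up work scheduled to run when a task completes.
Task<string> report = parts[0].ContinueWith(t => $"first chunk = {t.Result}");

// Exception handling: awaiting a faulted task rethrows its exception.
Task failing = Task.Run(() => { throw new InvalidOperationException("boom"); });
string error;
try { await failing; error = "none"; }
catch (InvalidOperationException ex) { error = ex.Message; }

Console.WriteLine($"total = {partialSums.Sum()}, {await report}, error = {error}, status = {failing.Status}");
// total = 500500, first chunk = 31375, error = boom, status = Faulted
```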

4.5. Parallel LINQ (PLINQ)

Language-Integrated Query (LINQ) is the name for a set of technologies based on the integration of query capabilities directly into the C# language.

Traditionally, queries against data are expressed as simple strings without type checking at compile time or IntelliSense support. Furthermore, you have to learn a different query language for each type of data source: SQL databases, XML documents, various Web services, and so on.

With LINQ, a query is a first-class language construct, just like classes, methods, and events. [19]

- In-memory data

There are two ways to enable LINQ querying of in-memory data. If the data is of a type that implements IEnumerable<T>, you query the data by using LINQ to Objects. If it doesn’t make sense to enable enumeration by implementing the IEnumerable<T> interface, you define LINQ standard query operator methods, either in that type or as extension methods for that type. Custom implementations of the standard query operators should use deferred execution to return the results.

- Remote data

The best option for enabling LINQ querying of a remote data source is to implement the IQueryable<T> interface.

| At compile time, query expressions are converted to standard query operator method calls according to the rules defined in the C# specification. Any query that can be expressed by using query syntax can also be expressed by using method syntax. In some cases, query syntax is more readable and concise. In others, method syntax is more readable. There’s no semantic or performance difference between the two different forms. |
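To illustrate the equivalence, a small sketch over an arbitrary integer array: the query expression and the method chain below express the same filter, sort, and projection, and produce the same sequence.

```csharp
using System;
using System.Linq;

int[] numbers = { 5, 10, 8, 3, 6, 12 };

// Query syntax...
int[] querySyntax = (from n in numbers
                     where n % 2 == 0
                     orderby n
                     select n * n).ToArray();

// ...and the standard query operator calls the compiler translates it into.
int[] methodSyntax = numbers
    .Where(n => n % 2 == 0)
    .OrderBy(n => n)
    .Select(n => n * n)
    .ToArray();

Console.WriteLine(string.Join(", ", querySyntax));           // 36, 64, 100, 144
Console.WriteLine(querySyntax.SequenceEqual(methodSyntax));  // True
```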

Parallel LINQ (PLINQ) is a parallel implementation of the Language-Integrated Query (LINQ) pattern. PLINQ implements the full set of LINQ standard query operators as extension methods for the System.Linq namespace and has additional operators for parallel operations. PLINQ combines the simplicity and readability of LINQ syntax with the power of parallel programming. [20]

A PLINQ query in many ways resembles a non-parallel LINQ to Objects query. PLINQ queries, just like sequential LINQ queries, operate on any in-memory IEnumerable or IEnumerable<T> data source, and have deferred execution, which means they do not begin executing until the query is enumerated. The primary difference is that PLINQ attempts to make full use of all the processors on the system. It does this by partitioning the data source into segments, and then executing the query on each segment on separate worker threads in parallel on multiple processors. In many cases, parallel execution means that the query runs significantly faster.

The System.Linq.ParallelEnumerable class exposes almost all of PLINQ’s functionality. It includes implementations of all the standard query operators that LINQ to Objects supports, although it does not attempt to parallelize each one.

In addition to the standard query operators, the ParallelEnumerable class contains a set of methods that enable behaviors specific to parallel execution. These PLINQ-specific methods are listed in the following table.

| ParallelEnumerable Operator | Description |
|---|---|
| AsParallel | The entry point for PLINQ. Specifies that the rest of the query should be parallelized, if it is possible. |
| AsSequential | Specifies that the rest of the query should be run sequentially, as a non-parallel LINQ query. |
| AsOrdered | Specifies that PLINQ should preserve the ordering of the source sequence for the rest of the query, or until the ordering is changed, for example by the use of an orderby (Order By in Visual Basic) clause. |
| AsUnordered | Specifies that PLINQ for the rest of the query is not required to preserve the ordering of the source sequence. |
| WithCancellation | Specifies that PLINQ should periodically monitor the state of the provided cancellation token and cancel execution if it is requested. |
| WithDegreeOfParallelism | Specifies the maximum number of processors that PLINQ should use to parallelize the query. |
| WithMergeOptions | Provides a hint about how PLINQ should, if it is possible, merge parallel results back into just one sequence on the consuming thread. |
| WithExecutionMode | Specifies whether PLINQ should parallelize the query even when the default behavior would be to run it sequentially. |
| ForAll | A multithreaded enumeration method that, unlike iterating over the results of the query, enables results to be processed in parallel without first merging back to the consumer thread. |
| Aggregate overload | An overload that is unique to PLINQ and enables intermediate aggregation over thread-local partitions, plus a final aggregation function to combine the results of all partitions. |
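A small sketch exercising some of these operators on an arbitrary range of integers: AsOrdered together with WithDegreeOfParallelism, ForAll writing into a thread-safe ConcurrentBag<T>, and the PLINQ-only Aggregate overload with a per-partition accumulator and a combine step.

```csharp
using System;
using System.Collections.Concurrent;
using System.Linq;

int[] source = Enumerable.Range(1, 20).ToArray();

// AsParallel enters PLINQ; AsOrdered keeps source order; at most 2 concurrent tasks are used.
int[] orderedSquares = source.AsParallel()
    .AsOrdered()
    .WithDegreeOfParallelism(2)
    .Where(n => n % 2 == 0)
    .Select(n => n * n)
    .ToArray();
Console.WriteLine(string.Join(", ", orderedSquares)); // 4, 16, 36, 64, 100, 144, 196, 256, 324, 400

// ForAll runs the action on the worker threads without merging back, so the sink must be thread-safe.
ConcurrentBag<int> bag = new ConcurrentBag<int>();
source.AsParallel().Where(n => n % 5 == 0).ForAll(n => bag.Add(n));
Console.WriteLine(bag.Count); // 4

// PLINQ-specific Aggregate: accumulate within each partition, then combine the partition results.
int total = source.AsParallel().Aggregate(
    0,                              // seed for each partition
    (acc, n) => acc + n,            // update function, runs within a partition
    (left, right) => left + right,  // combine function, merges partition results
    acc => acc);                    // result selector
Console.WriteLine(total); // 210
```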

```csharp
using System;
using System.Collections.Generic;
using System.Diagnostics;
using System.IO;
using System.Linq;

// Find the most frequent letter across all man pages, with PLINQ and with plain LINQ.
IEnumerable<string> files = Directory.EnumerateFiles("/usr/share/man", "*.gz", SearchOption.AllDirectories);

Stopwatch sw = Stopwatch.StartNew();
for (int i = 0; i < 2; i++)
{
    sw.Restart();
    var parallelLetters = files.AsParallel() // PLINQ: the files are partitioned across worker threads
        .Select(SplitLetters)
        .SelectMany(w => w)
        .GroupBy(char.ToLower)
        .OrderByDescending(g => g.Count())
        .First();
    Console.WriteLine($"Parallel: {parallelLetters.Key}: {parallelLetters.Count()}, {sw.ElapsedMilliseconds}ms");

    sw.Restart();
    var sequentialLetters = files // .AsParallel().AsSequential()
        .Select(SplitLetters)
        .SelectMany(w => w)
        .GroupBy(char.ToLower)
        .OrderByDescending(g => g.Count())
        .First();
    Console.WriteLine($"Sequential: {sequentialLetters.Key}: {sequentialLetters.Count()}, {sw.ElapsedMilliseconds}ms");
}

// Yields every letter in a file. The *.gz files are read without decompression, so this counts
// letters in the decoded raw bytes; wrap the file stream in a GZipStream to count the actual text.
static IEnumerable<char> SplitLetters(string fileName)
{
    using StreamReader reader = new StreamReader(fileName);
    string? line;
    while ((line = reader.ReadLine()) != null)
    {
        foreach (char c in line)
        {
            if (char.IsLetter(c))
                yield return c;
        }
    }
}

// $ dotnet run
// Parallel: e: 251378, 2242ms
// Sequential: e: 251378, 1996ms
// Parallel: e: 251378, 1133ms
// Sequential: e: 251378, 1824ms
```

5. Asynchronous programming with async and await

You can avoid performance bottlenecks and enhance the overall responsiveness of your application by using asynchronous programming. However, traditional techniques for writing asynchronous applications can be complicated, making them difficult to write, debug, and maintain.

C# supports a simplified approach, async programming, that leverages asynchronous support in the .NET runtime. The compiler does the difficult work that the developer used to do, and your application retains a logical structure that resembles synchronous code. As a result, you get all the advantages of asynchronous programming with a fraction of the effort. [16]

5.1. Async improves responsiveness

Asynchrony is essential for activities that are potentially blocking, such as web access. Access to a web resource sometimes is slow or delayed. If such an activity is blocked in a synchronous process, the entire application must wait. In an asynchronous process, the application can continue with other work that doesn’t depend on the web resource until the potentially blocking task finishes.

Asynchrony proves especially valuable for applications that access the UI thread because all UI-related activity usually shares one thread. If any process is blocked in a synchronous application, all are blocked. Your application stops responding, and you might conclude that it has failed when instead it’s just waiting.

When you use asynchronous methods, the application continues to respond to the UI. You can resize or minimize a window, for example, or you can close the application if you don’t want to wait for it to finish.

The async-based approach adds the equivalent of an automatic transmission to the list of options that you can choose from when designing asynchronous operations. That is, you get all the benefits of traditional asynchronous programming but with much less effort from the developer.

5.2. Threads

Async methods are intended to be non-blocking operations. An await expression in an async method doesn’t block the current thread while the awaited task is running. Instead, the expression signs up the rest of the method as a continuation and returns control to the caller of the async method.

The async and await keywords don’t cause additional threads to be created. Async methods don’t require multithreading because an async method doesn’t run on its own thread. The method runs on the current synchronization context and uses time on the thread only when the method is active. You can use Task.Run to move CPU-bound work to a background thread, but a background thread doesn’t help with a process that’s just waiting for results to become available.
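A minimal sketch of both statements, assuming a console app (which has no SynchronizationContext): an async method whose awaited task is already complete runs entirely on the caller’s thread, while CPU-bound work moves to the thread pool only when you ask for it with Task.Run. `ReportThreadAsync` is a hypothetical helper for the demonstration.

```csharp
using System;
using System.Linq;
using System.Threading.Tasks;

int callerThread = Environment.CurrentManagedThreadId;

// The awaited task is already complete, so the async method never leaves the caller's thread.
int asyncMethodThread = await ReportThreadAsync();
Console.WriteLine(asyncMethodThread == callerThread); // True: no extra thread was involved

// CPU-bound work is offloaded to the thread pool explicitly.
int sum = await Task.Run(() => Enumerable.Range(1, 1_000).Sum());
Console.WriteLine(sum); // 500500

static async Task<int> ReportThreadAsync()
{
    await Task.CompletedTask; // already complete: execution continues synchronously
    return Environment.CurrentManagedThreadId;
}
```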

5.3. async and await

If you specify that a method is an async method by using the async modifier, you enable the following two capabilities.

- The marked async method can use await to designate suspension points. The await operator tells the compiler that the async method can’t continue past that point until the awaited asynchronous process is complete. In the meantime, control returns to the caller of the async method.

The suspension of an async method at an await expression doesn’t constitute an exit from the method, and finally blocks don’t run.

- The marked async method can itself be awaited by methods that call it.

An async method typically contains one or more occurrences of an await operator, but the absence of await expressions doesn’t cause a compiler error. If an async method doesn’t use an await operator to mark a suspension point, the method executes as a synchronous method does, despite the async modifier. The compiler issues a warning for such methods.
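A sketch of these rules, using a TaskCompletionSource as a hypothetical "gate" so that the suspension point is deterministic: the caller runs while WorkAsync is suspended, WorkAsync’s finally block runs only when the method really completes, and an async method without await returns an already-completed task. `WorkAsync` and `NoAwaitAsync` are illustrative names, not framework APIs.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

List<string> log = new List<string>();
TaskCompletionSource gate = new TaskCompletionSource();

Task work = WorkAsync(log, gate.Task); // runs until its first incomplete await, then returns
log.Add("caller resumed");             // WorkAsync is suspended here; its finally has not run

gate.SetResult(); // complete the awaited task so WorkAsync can continue
await work;       // an async method can itself be awaited

// Without any await, an async method runs synchronously and returns a completed task.
Task<int> noAwait = NoAwaitAsync();
Console.WriteLine(noAwait.IsCompleted);     // True
Console.WriteLine(string.Join(" | ", log)); // start | caller resumed | after await | finally

static async Task WorkAsync(List<string> entries, Task awaited)
{
    try
    {
        entries.Add("start");
        await awaited; // suspension point: not an exit, so the finally block does not run yet
        entries.Add("after await");
    }
    finally
    {
        entries.Add("finally");
    }
}

#pragma warning disable CS1998 // async method lacks 'await' operators (intentional here)
static async Task<int> NoAwaitAsync() => 42;
#pragma warning restore CS1998
```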

5.4. SynchronizationContext and ConfigureAwait

| Don’t Need ConfigureAwait(false), But Still Use It in Libraries. [22] |

SynchronizationContext was also introduced in .NET Framework 2.0, as an abstraction for a general scheduler. In particular, SynchronizationContext’s most used method is Post, which queues a work item to whatever scheduler is represented by that context. [17]

Consider a UI framework like Windows Forms. As with most UI frameworks on Windows, controls are associated with a particular thread, and that thread runs a message pump which runs work that’s able to interact with those controls: only that thread should try to manipulate those controls, and any other thread that wants to interact with the controls should do so by sending a message to be consumed by the UI thread’s pump. Windows Forms makes this easy with methods like Control.BeginInvoke, which queues the supplied delegate and arguments to be run by whatever thread is associated with that Control. You can thus write code like this:

```csharp
private void button1_Click(object sender, EventArgs e)
{
    ThreadPool.QueueUserWorkItem(_ =>
    {
        string message = ComputeMessage(); // runs on a ThreadPool thread
        button1.BeginInvoke(() =>
        {
            button1.Text = message; // queued back to the thread that owns button1
        });
    });
}
```

That will offload the ComputeMessage() work to a ThreadPool thread (so as to keep the UI responsive while it’s being processed), and then, when that work has completed, queue a delegate back to the thread associated with button1 to update button1’s label. Easy enough. WPF has something similar, just with its Dispatcher type:

```csharp
private void button1_Click(object sender, RoutedEventArgs e)
{
    ThreadPool.QueueUserWorkItem(_ =>
    {
        string message = ComputeMessage(); // runs on a ThreadPool thread
        button1.Dispatcher.InvokeAsync(() =>
        {
            button1.Content = message; // queued back to the button's UI thread
        });
    });
}
```

Each application model then ensures that a SynchronizationContext-derived type that does the "right thing" is published as SynchronizationContext.Current. For example, Windows Forms has this:

```csharp
public sealed class WindowsFormsSynchronizationContext : SynchronizationContext, IDisposable
{
    public override void Post(SendOrPostCallback d, object? state) =>
        _controlToSendTo?.BeginInvoke(d, new object?[] { state });

    ...
}
```

and WPF has this:

```csharp
public sealed class DispatcherSynchronizationContext : SynchronizationContext
{
    public override void Post(SendOrPostCallback d, Object state) =>
        _dispatcher.BeginInvoke(_priority, d, state);

    ...
}
```

SynchronizationContext makes it possible to call reusable helpers and automatically be scheduled back whenever and to wherever the calling environment deems fit. As a result, it’s natural to expect that to "just work" with async/await, and it does.

button1.Text = await Task.Run(() => ComputeMessage());

That invocation of ComputeMessage is offloaded to the thread pool, and upon the method’s completion, execution transitions back to the UI thread associated with the button, and the setting of its Text property happens on that thread.

That integration with SynchronizationContext is left up to the awaiter implementation (the code generated for the state machine knows nothing about SynchronizationContext), as it’s the awaiter that is responsible for actually invoking or queueing the supplied continuation when the represented asynchronous operation completes. While a custom awaiter need not respect SynchronizationContext.Current, the awaiters for Task, Task<TResult>, ValueTask, and ValueTask<TResult> all do. That means that, by default, when you await a Task, a Task<TResult>, a ValueTask, a ValueTask<TResult>, or even the result of a Task.Yield() call, the awaiter will look up the current SynchronizationContext and then, if it successfully got a non-default one, will eventually queue the continuation to that context.
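To see this awaiter behavior outside a UI framework, here is a minimal sketch built around a hypothetical SingleThreadSynchronizationContext (written for this example; it is not a .NET type). Its Post adds callbacks to a BlockingCollection, and RunOnCurrentThread acts as a tiny message pump that runs them one at a time on the calling thread. Because `await Task.Delay(50)` captures SynchronizationContext.Current, the code after the await is posted back and runs on the pumping thread, much as a UI continuation resumes on the UI thread. The 50 ms delay is arbitrary.

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading;
using System.Threading.Tasks;

int pumpThreadId = Environment.CurrentManagedThreadId;
int threadAfterAwait = -1;

var context = new SingleThreadSynchronizationContext();
SynchronizationContext? previous = SynchronizationContext.Current;
SynchronizationContext.SetSynchronizationContext(context);
try
{
    // Start the async method while our context is Current, so its await captures it.
    Task task = RunAsync();
    // Stop pumping once the async method has finished (runs on the thread pool).
    task.ContinueWith(_ => context.Complete(), TaskScheduler.Default);
    // Run posted continuations on this thread until Complete is called.
    context.RunOnCurrentThread();
    task.GetAwaiter().GetResult(); // propagate any exception
}
finally
{
    SynchronizationContext.SetSynchronizationContext(previous);
}

Console.WriteLine($"Pump thread: {pumpThreadId}, after await: {threadAfterAwait}");

async Task RunAsync()
{
    await Task.Delay(50); // the timer completes on a thread-pool thread...
    // ...but the awaiter posted this continuation to the captured context.
    threadAfterAwait = Environment.CurrentManagedThreadId;
}

// Hypothetical helper type for this example; not part of .NET.
sealed class SingleThreadSynchronizationContext : SynchronizationContext
{
    private readonly BlockingCollection<(SendOrPostCallback Callback, object? State)> _queue = new();

    // Queue the work item; it runs on the thread executing RunOnCurrentThread.
    public override void Post(SendOrPostCallback d, object? state) => _queue.Add((d, state));

    // Signal that no more work items will be posted.
    public void Complete() => _queue.CompleteAdding();

    // The "message pump": run callbacks one at a time on the current thread.
    public void RunOnCurrentThread()
    {
        foreach (var (callback, state) in _queue.GetConsumingEnumerable())
            callback(state);
    }
}
```

If the await were written as `await Task.Delay(50).ConfigureAwait(false)`, the continuation would not be posted to the context and would typically run on a thread-pool thread instead.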

The ConfigureAwait method isn’t special: it’s not recognized in any special way by the compiler or by the runtime. It is simply a method that returns a struct (a ConfiguredTaskAwaitable) that wraps the original task it was called on as well as the specified Boolean value. Remember that await can be used with any type that exposes the right pattern. By returning a different type, it means that when the compiler accesses the instance’s GetAwaiter method (part of the pattern), it’s doing so off of the type returned from ConfigureAwait rather than off of the task directly, and that provides a hook to change how the await behaves via this custom awaiter. ConfigureAwait(continueOnCapturedContext: false) is used to avoid forcing the callback to be invoked on the original context or scheduler. [18]
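A short sketch of that pattern: ConfigureAwait returns an ordinary struct, ConfiguredTaskAwaitable<TResult>, whose GetAwaiter() yields a ConfiguredTaskAwaiter exposing IsCompleted and GetResult. Calling these members by hand mirrors, in simplified form, what the compiler-generated code does for an already-completed task; the suspension path (registering a continuation through the awaiter) is omitted here. The value 42 is arbitrary.

```csharp
using System;
using System.Runtime.CompilerServices;
using System.Threading.Tasks;

Task<int> task = Task.FromResult(42);

// ConfigureAwait is an ordinary method: it returns a struct that wraps the task
// together with the continueOnCapturedContext flag.
ConfiguredTaskAwaitable<int> configured = task.ConfigureAwait(continueOnCapturedContext: false);

// "await configured" makes the compiler call this GetAwaiter(), not Task<int>'s own,
// which is the hook that lets ConfigureAwait change how the await behaves.
ConfiguredTaskAwaitable<int>.ConfiguredTaskAwaiter awaiter = configured.GetAwaiter();

// Awaiter-pattern members the generated code relies on:
bool completed = awaiter.IsCompleted; // true: Task.FromResult is already completed
int result = awaiter.GetResult();     // returns the value (or throws the task's exception)
Console.WriteLine($"IsCompleted={completed}, GetResult={result}");

// The idiomatic form: the compiler performs the same calls behind the scenes.
int viaAwait = await task.ConfigureAwait(false);
Console.WriteLine(viaAwait);
```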

Appendix A: FAQ

A.1. What happens on Thread.Sleep(0) in .NET?

> What happens on Thread.Sleep(0) in .NET?

In .NET, Thread.Sleep(0) is effectively a way to signal to the operating system that the thread is willing to give up the remainder of its time slice if another thread is ready to run; if no other thread is ready, the call returns immediately and the current thread keeps running. (Unlike Thread.Yield, it is not limited to threads that are ready on the current processor.) This can be useful to keep a thread from consuming too much CPU time in a busy-wait loop, or when you want to give other threads a chance to run. Remember that using …
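A minimal sketch of the busy-wait scenario: one thread spins until another thread sets a flag, calling Thread.Sleep(0) on each iteration so it gives up its time slice instead of burning it. The flag name and the 100 ms delay are arbitrary. In real code, SpinWait or a wait handle such as ManualResetEventSlim is usually a better choice than a hand-written spin loop.

```csharp
using System;
using System.Threading;

bool ready = false;
int spins = 0;

var producer = new Thread(() =>
{
    Thread.Sleep(100);               // simulate some work
    Volatile.Write(ref ready, true); // publish the flag to the spinning thread
});
producer.Start();

// Busy-wait until the flag is set. Thread.Sleep(0) offers the rest of the time slice
// to another ready thread, or returns immediately if none is ready.
while (!Volatile.Read(ref ready))
{
    spins++;
    Thread.Sleep(0);
}

producer.Join();
Console.WriteLine($"Flag observed after {spins} iterations.");
```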

A.2. What are the worker and completion port threads?

//
// Summary:
//     Sets the number of requests to the thread pool that can be active concurrently.
//     All requests above that number remain queued until thread pool threads become
//     available.
//
// Parameters:
//   workerThreads:
//     The maximum number of worker threads in the thread pool.
//
//   completionPortThreads:
//     The maximum number of asynchronous I/O threads in the thread pool.
//
// Returns:
//     true if the change is successful; otherwise, false.
public static bool SetMaxThreads(int workerThreads, int completionPortThreads);

public static bool SetMinThreads(int workerThreads, int completionPortThreads);

> What are the worker and completion port threads in SetMaxThreads(int workerThreads, int completionPortThreads)?

The thread pool maintains two kinds of threads: worker threads, which for the most part handle compute operations, and I/O completion port threads, which, as the name suggests, handle the completion of I/O-bound operations. Asynchronous I/O operations, which complete at some later point, often use callback methods to signal completion; when the system invokes these callbacks, it does so on a thread from the ThreadPool. It’s typically not necessary to change the ThreadPool size, because the ThreadPool already tunes the number of threads it uses based on the number of CPUs and the kind of work it’s running. In most cases, the ThreadPool manages its threads efficiently: its algorithm aims to use all available CPU resources without overloading the system with too many threads at the same time, trying to maintain a balance between the two.
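To see what the pool is configured with on a given machine, the matching Get methods can be queried. This sketch only reads values and doesn't change them; the numbers printed vary by machine, runtime version, and configuration.

```csharp
using System;
using System.Threading;

// Each method reports two numbers: worker threads and I/O completion port threads.
ThreadPool.GetMinThreads(out int minWorker, out int minIo);
ThreadPool.GetMaxThreads(out int maxWorker, out int maxIo);
ThreadPool.GetAvailableThreads(out int availWorker, out int availIo);

Console.WriteLine($"Processors:          {Environment.ProcessorCount}");
Console.WriteLine($"Min (worker / I/O):  {minWorker} / {minIo}");
Console.WriteLine($"Max (worker / I/O):  {maxWorker} / {maxIo}");
// Available = the maximum minus the threads currently active.
Console.WriteLine($"Available (w / I/O): {availWorker} / {availIo}");
```

If you do call ThreadPool.SetMinThreads, note that it returns false and leaves the settings unchanged when a value is negative or larger than the corresponding maximum, so its return value is worth checking.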

A.3. How does .NET identify I/O-bound or compute-bound operations?

> How does .NET identify I/O-bound or compute-bound operations?

The .NET runtime itself doesn’t actively categorize work as I/O-bound or compute-bound. It’s up to developers to use the appropriate APIs and techniques for each kind of work.

I/O-bound means that the time is spent transferring data between memory and peripheral devices (disk, network, and so on), during which the CPU isn’t doing much work. For I/O-bound operations, which typically include file, database, and network access, you should use asynchronous methods when they’re available, to avoid blocking (or tying up) a thread-pool thread. When an I/O-bound operation is asynchronous, the operation is generally handed off to the operating system, freeing the .NET thread to perform other work while the I/O is in flight.

On the other hand, when work is compute-bound, meaning it involves heavy computation and keeps the processor busy, developers should generally use synchronous code, potentially on multiple threads, to use more cores and finish the computation faster. This kind of work is typically split across multiple threads, where each thread runs ordinary synchronous code for its part.

So, while .NET doesn’t automatically distinguish between I/O-bound and compute-bound operations, it provides facilities for both: asynchronous methods for I/O-bound work, and parallel processing techniques such as the TPL for compute-bound work.
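A sketch contrasting the two cases, using a temporary file as a stand-in for I/O and a sum of squares as a stand-in for CPU work (both chosen only for illustration). The I/O-bound part awaits File.WriteAllTextAsync and File.ReadAllTextAsync, so no thread sits blocked while the OS performs the I/O; the compute-bound part uses Parallel.For with per-thread subtotals, so each thread runs plain synchronous code on its share of the range.

```csharp
using System;
using System.IO;
using System.Threading;
using System.Threading.Tasks;

// I/O-bound: await the asynchronous file APIs instead of blocking a thread.
string path = Path.GetTempFileName();
string text = "";
try
{
    await File.WriteAllTextAsync(path, "some data");
    text = await File.ReadAllTextAsync(path);
    Console.WriteLine($"Read {text.Length} characters.");
}
finally
{
    File.Delete(path);
}

// Compute-bound: split the work across cores; each iteration is ordinary synchronous code.
const int N = 1_000_000;
long total = 0;
Parallel.For(0, N,
    () => 0L,                                          // per-thread subtotal
    (i, _, subtotal) => subtotal + (long)i * i,        // synchronous work for one index
    subtotal => Interlocked.Add(ref total, subtotal)); // combine subtotals safely
Console.WriteLine($"Sum of squares below {N}: {total}");
```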

> How to understand 'It’s up to developers…'?

"It’s up to developers…" means that the .NET runtime, or indeed any other runtime, cannot automatically tell whether the operation you’re performing is I/O-bound or compute-bound. It’s the responsibility of the developer writing the code to understand what kind of operation they’re performing and to use the correct approach for it. For example, downloading a web page or querying a database is I/O-bound and should use the asynchronous APIs (such as HttpClient.GetStringAsync), whereas hashing a large buffer or resizing a batch of images is compute-bound and benefits from spreading the work across cores with Parallel.For or Task.Run.

The appropriate programming approach (asynchronous or multithreaded) is dictated by the nature of the operation. The developer must know the application, its tasks, and their nature, and then choose accordingly; the .NET runtime will not make this distinction automatically.

A.4. How does CLR manage the number of threads (worker and I/O threads) in the ThreadPool?

> How does CLR manage the number of threads (worker and I/O threads) in the ThreadPool?

The CLR, or Common Language Runtime, uses a built-in algorithm to manage the number of threads in its ThreadPool. At application startup, the ThreadPool has few threads, and threads are added as requests come in. The CLR weighs several factors when adjusting the count, including the workload demand, the number of processors, and the number of existing threads.

For each pending task or work item, the pool checks whether a thread is available. If none is, and the current thread count is below the maximum, a new thread can be created: up to the configured minimum, threads are created on demand, but beyond the minimum the pool throttles how quickly it injects new threads. If a ThreadPool thread is idle for some time (around a minute), it gets terminated, which reduces resource usage. If the thread count has reached the maximum and requests still can’t be served, they remain queued.

"Worker" threads are commonly used for executing synchronous and asynchronous managed code, while "I/O threads" are specifically designated for completing I/O-bound work. By default, the minimum number of threads is set to the number of processors, and the default maximum depends on factors such as the size of the virtual address space. Throttling the thread injection rate (how often new threads are added) helps prevent situations where rapidly creating too many threads would saturate resources and degrade performance.

You can get and change the minimum and maximum number of threads in the ThreadPool by using ThreadPool.GetMinThreads, ThreadPool.SetMinThreads, ThreadPool.GetMaxThreads, and ThreadPool.SetMaxThreads. However, it’s recommended to let the CLR manage the threading details, because its heuristics are tuned through extensive research and testing. Custom changes should only be made after careful testing and analysis.
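A rough way to watch the pool grow is to queue more blocking work items than the minimum worker count and sample ThreadPool.ThreadCount and ThreadPool.PendingWorkItemCount (both available since .NET Core 3.0) while they run. The item count (twice the minimum) and the 200 ms sleep are arbitrary choices for this sketch, and the exact counts you see depend on the runtime version and machine, so treat them as observations rather than guarantees.

```csharp
using System;
using System.Threading;

ThreadPool.GetMinThreads(out int minWorker, out _);
int itemCount = minWorker * 2; // more blocking items than the minimum worker count
using var countdown = new CountdownEvent(itemCount);

Console.WriteLine($"Min worker threads: {minWorker}, threads now: {ThreadPool.ThreadCount}");

for (int i = 0; i < itemCount; i++)
{
    ThreadPool.QueueUserWorkItem(_ =>
    {
        Thread.Sleep(200); // deliberately block a pool thread
        countdown.Signal();
    });
}

// Sample the pool while the blocked items are still pending.
while (!countdown.Wait(100))
{
    Console.WriteLine($"Threads: {ThreadPool.ThreadCount}, pending: {ThreadPool.PendingWorkItemCount}");
}

Console.WriteLine($"Done. Threads: {ThreadPool.ThreadCount}");
```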

A.5. What’s the algorithm of the thread pool in .NET?

> What’s the algorithm of the thread pool in .NET?

According to .NET’s official documentation, the CLR employs a hill-climbing algorithm for adjusting the number of ThreadPool threads that can run simultaneously. This heuristic is designed to find an efficient number of threads for the current workload. In broad terms, it periodically changes the number of active worker threads, measures the resulting throughput (work items completed per unit of time), and keeps moving in whichever direction improves throughput.

This approach maintains a balance between the number of threads and the amount of work to be done. By creating threads only as needed and retiring idle ones, it helps optimize system resources and performance. Note that the .NET ThreadPool’s algorithm is intentionally not documented publicly in detail, because Microsoft keeps fine-tuning it based on new findings and requirements. As always, it’s recommended to let the CLR manage the ThreadPool for you, unless you have a specific reason and have done careful performance analysis.

A.6. What if Interlocked.Increment a 64-bit integer on a 32-bit hardware?

> About the Interlocked class in .NET, what if we increment a 64-bit integer on a 32-bit hardware?

In a multithreaded or parallel scenario, multiple threads might try to increment a shared 64-bit integer simultaneously. In that case, Interlocked.Increment(ref long) guarantees that each increment is performed as a single atomic read-modify-write. On 32-bit hardware, an ordinary read or write of a 64-bit integer (long/Int64) is not guaranteed to be atomic, because the value is wider than the processor’s 32-bit word and may be accessed as two separate 32-bit halves. Even then, the .NET runtime ensures via the Interlocked methods that the operation is atomic; to read such a value atomically, use Interlocked.Read, which is only needed on 32-bit systems because 64-bit reads are already atomic on 64-bit systems. Still, atomic operations carry some performance overhead compared to plain operations, because they act as full memory barriers that prevent reordering and require exclusive access to the memory location. Thus, they should be used judiciously.
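A small sketch: four parallel loops increment a shared long, once with Interlocked.Increment and once with a plain `++` for contrast. The thread and iteration counts are arbitrary. The Interlocked count is always exact; the plain count usually comes out lower, because concurrent read-add-write sequences overwrite each other, and the exact shortfall varies from run to run.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

const int Threads = 4;
const int IncrementsPerThread = 100_000;

long atomicCounter = 0;
long plainCounter = 0; // updated without synchronization, for comparison only

Parallel.For(0, Threads, _ =>
{
    for (int i = 0; i < IncrementsPerThread; i++)
    {
        Interlocked.Increment(ref atomicCounter); // atomic read-modify-write
        plainCounter++;                           // racy: separate read, add, write
    }
});

// Interlocked.Read gives an atomic 64-bit read even on 32-bit platforms.
long finalValue = Interlocked.Read(ref atomicCounter);
Console.WriteLine($"Interlocked: {finalValue}, plain ++: {plainCounter} (expected {Threads * IncrementsPerThread})");
```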

A.7. How does .NET make the multiple CPU instructions as an atomic?

> How does CLR make the two CPU instructions as an atomic?

The .NET Common Language Runtime (CLR), together with the underlying platform, guarantees the atomicity of Interlocked operations on 64-bit values, even on 32-bit platforms. This is achieved with specific processor instructions rather than locks. On 32-bit x86, for instance, the CMPXCHG8B instruction with a LOCK prefix compares and exchanges a 64-bit memory location atomically, and operations such as Interlocked.Increment(ref long) can be built on it as a compare-and-swap retry loop; on Windows, APIs such as InterlockedCompareExchange64 expose the same primitive. These atomic operations prevent thread interference and inconsistencies during read-modify-write actions by ensuring that each operation completes as a single, uninterruptible unit.
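The compare-and-swap idea can also be used from C# directly. This sketch builds a hypothetical AtomicMax helper (not a .NET API) out of Interlocked.CompareExchange: read the current value, and publish the new one only if no other thread changed the location in the meantime, retrying otherwise. This is the same retry-loop shape that a 64-bit interlocked operation can be built on when the hardware offers a compare-and-exchange primitive. The input values are arbitrary.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

long max = long.MinValue;

// Many threads report values; the shared maximum must never go backwards.
Parallel.For(0, 10_000, i => AtomicMax(ref max, (i * 7919L) % 10_007));

Console.WriteLine($"Max: {Interlocked.Read(ref max)}");

// Hypothetical helper: atomically performs location = Math.Max(location, value).
static void AtomicMax(ref long location, long value)
{
    long current = Interlocked.Read(ref location);
    while (value > current)
    {
        // Write 'value' only if 'location' still holds 'current'.
        long observed = Interlocked.CompareExchange(ref location, value, current);
        if (observed == current)
            return;         // our write won
        current = observed; // another thread changed it; retry with the fresh value
    }
}
```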

A.8. I heard there are some risk on atomic operations in Go or sth else?

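> I heard there are some risk on atomic operations in Go or sth else?

Yes, coding with atomic operations can indeed be risky, not just in Go but in any language, if they are misused or misunderstood. Each atomic operation is indivisible on its own, but a sequence of atomic operations is not; code built from them must also reason carefully about memory ordering and about pitfalls such as the ABA problem.

In Go, for example, the sync/atomic package documentation warns that its functions require great care to be used correctly and that, except for special low-level applications, synchronization is better done with channels or the facilities of the sync package. So while atomic operations are a powerful tool in concurrent programming, they are best suited to a limited set of problems and should be used judiciously and cautiously, usually when performance is a deciding factor and you’re comfortable and proficient with the complexities of concurrent system behavior and memory models.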
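A sketch of the most common pitfall, in C#: two individually atomic operations do not form an atomic sequence. The hypothetical TryTakeSlotRacy reads a counter and then increments it, so concurrent callers can all pass the check and overshoot the limit; the hypothetical TryTakeSlot folds the check and the update into one Interlocked.CompareExchange, so the limit holds. The limit of 100 and the 10,000 attempts are arbitrary.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

const int Limit = 100;
int racyCount = 0;
int safeCount = 0;

Parallel.For(0, 10_000, _ =>
{
    TryTakeSlotRacy(ref racyCount);
    TryTakeSlot(ref safeCount);
});

Console.WriteLine($"Racy: {racyCount} (may exceed {Limit}), CAS: {safeCount}");

// Broken: the read and the increment are each atomic, but not together.
static bool TryTakeSlotRacy(ref int count)
{
    if (Volatile.Read(ref count) >= Limit)
        return false;
    Interlocked.Increment(ref count); // another thread may have incremented since the check
    return true;
}

// Correct: check and update in a single compare-and-swap, retrying on contention.
static bool TryTakeSlot(ref int count)
{
    while (true)
    {
        int current = Volatile.Read(ref count);
        if (current >= Limit)
            return false;
        if (Interlocked.CompareExchange(ref count, current + 1, current) == current)
            return true;
    }
}
```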

A.9. What’s ABA problems?
